User Guide

Introduction

Overview

Welcome to the Camunda BPM user guide! Camunda BPM is a Java-based framework for process automation. This document contains information about the features provided by the Camunda BPM platform.

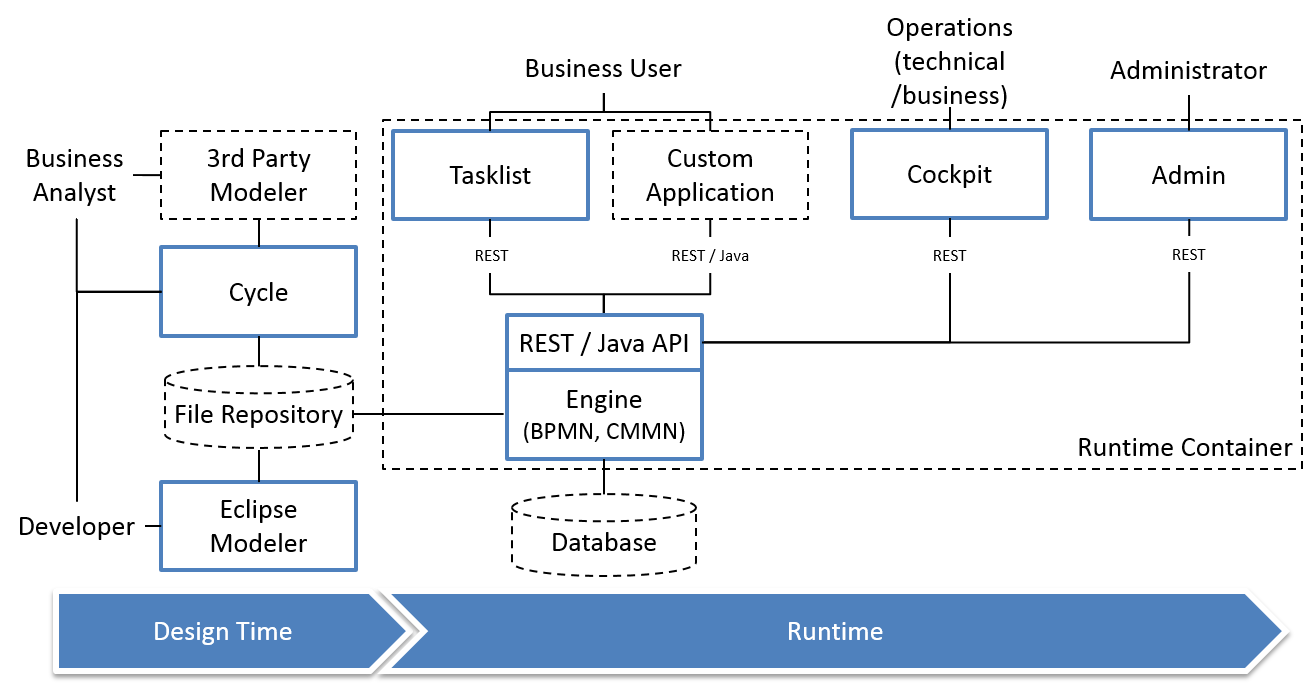

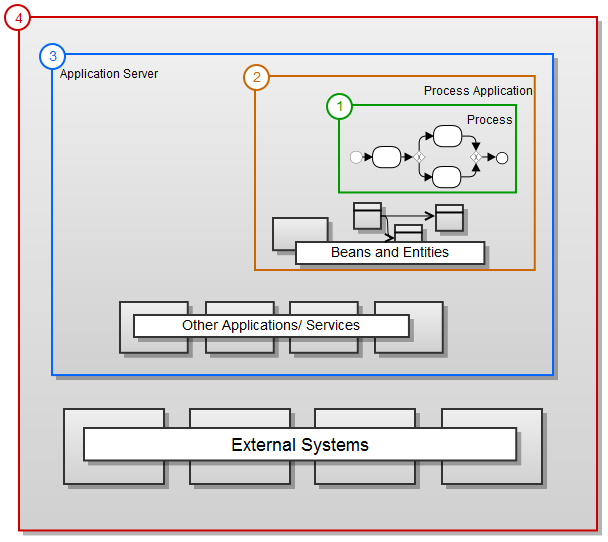

Camunda BPM is built around the process engine component. The following illustration shows the most important components of Camunda BPM along with some typical user roles.

Process Engine & Infrastructure

- Process Engine The process engine is a java library responsible for executing BPMN 2.0 processes and workflows. It has a lightweight POJO core and uses a relational database for persistence. ORM mapping is provided by the mybatis mapping framework.

- Spring Framework Integration

- CDI / Java EE Integration

- Runtime Container Integration (Integration with application server infrastructure.)

Web Applications

- REST API The REST API allows you to use the process engine from a remote application or a JavaScript application. (Note: The documentation of the REST API is factored out into an own document.)

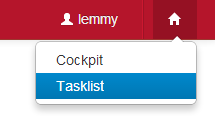

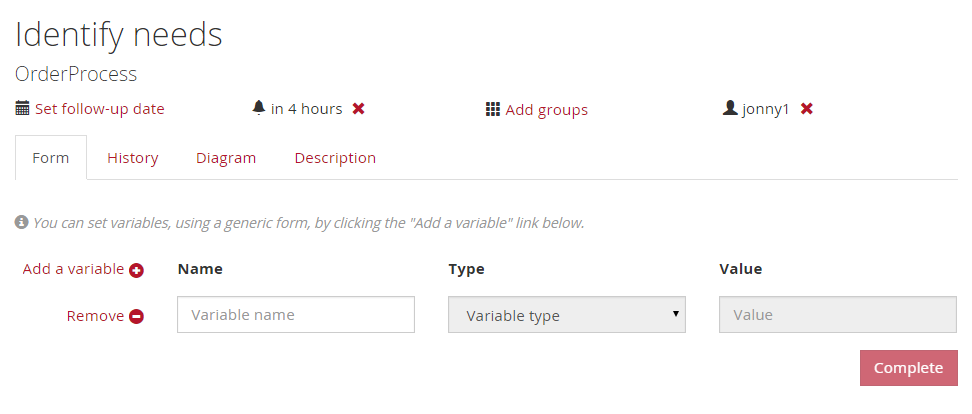

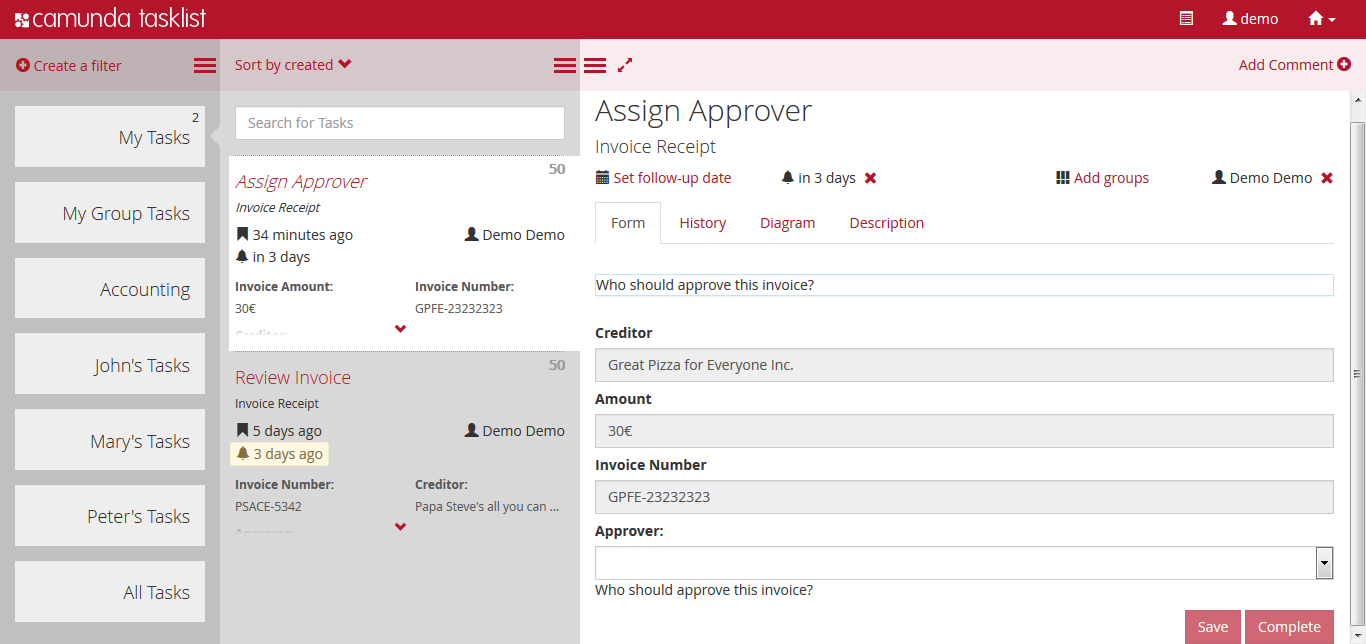

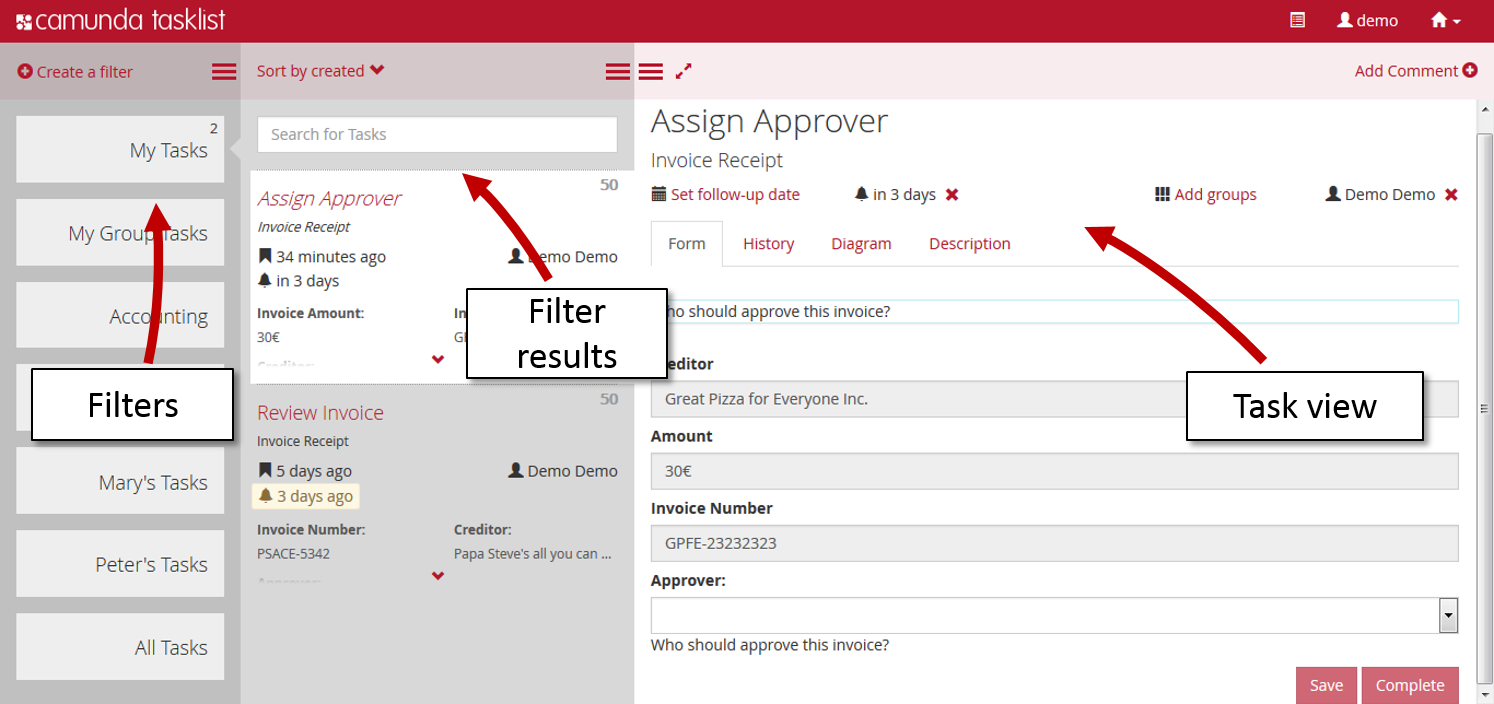

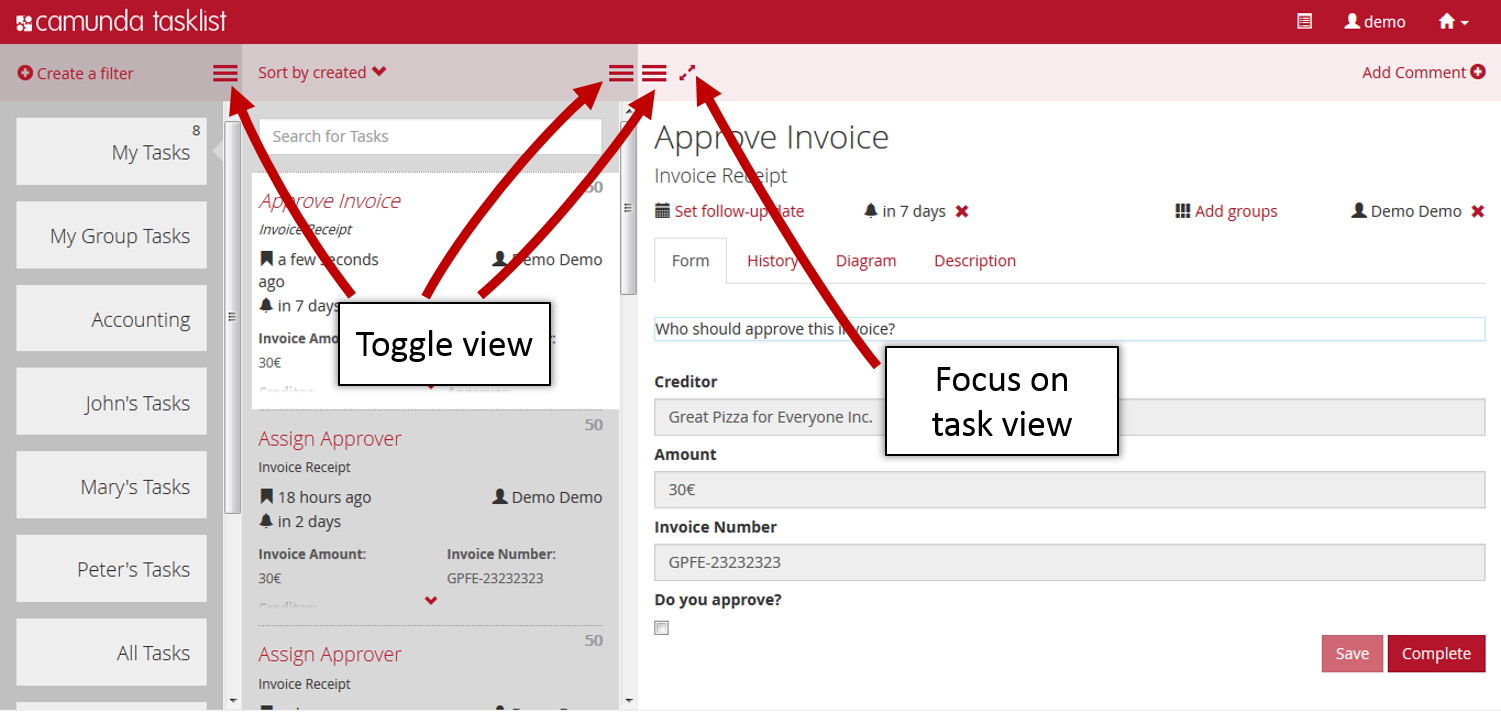

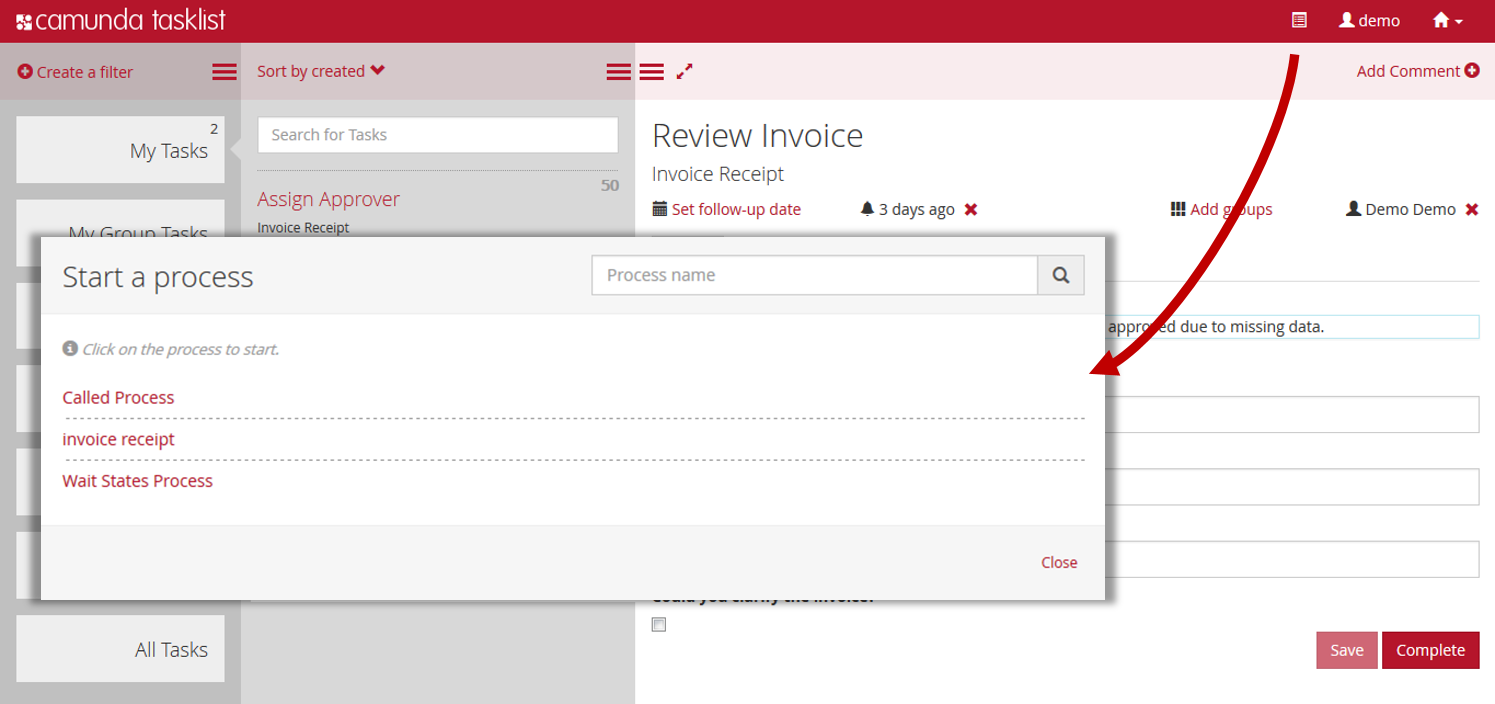

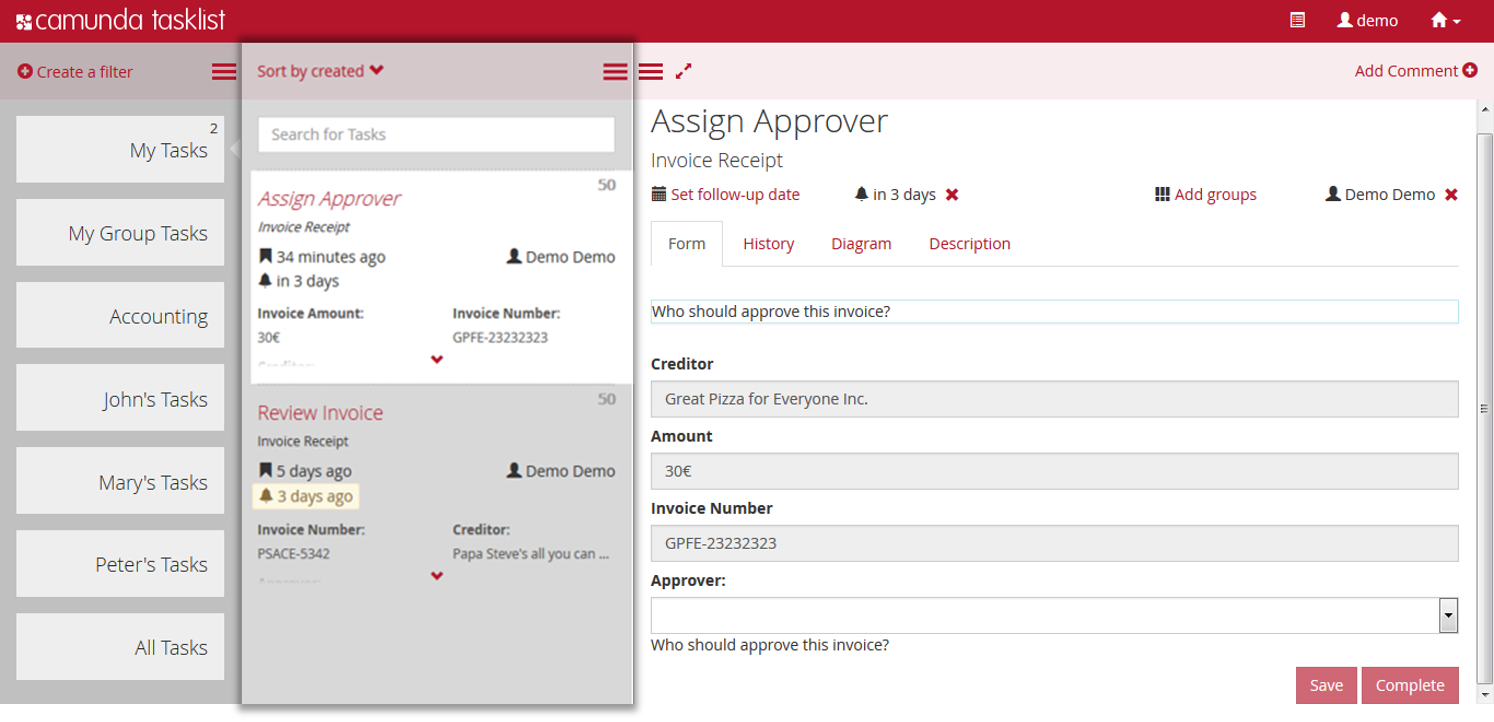

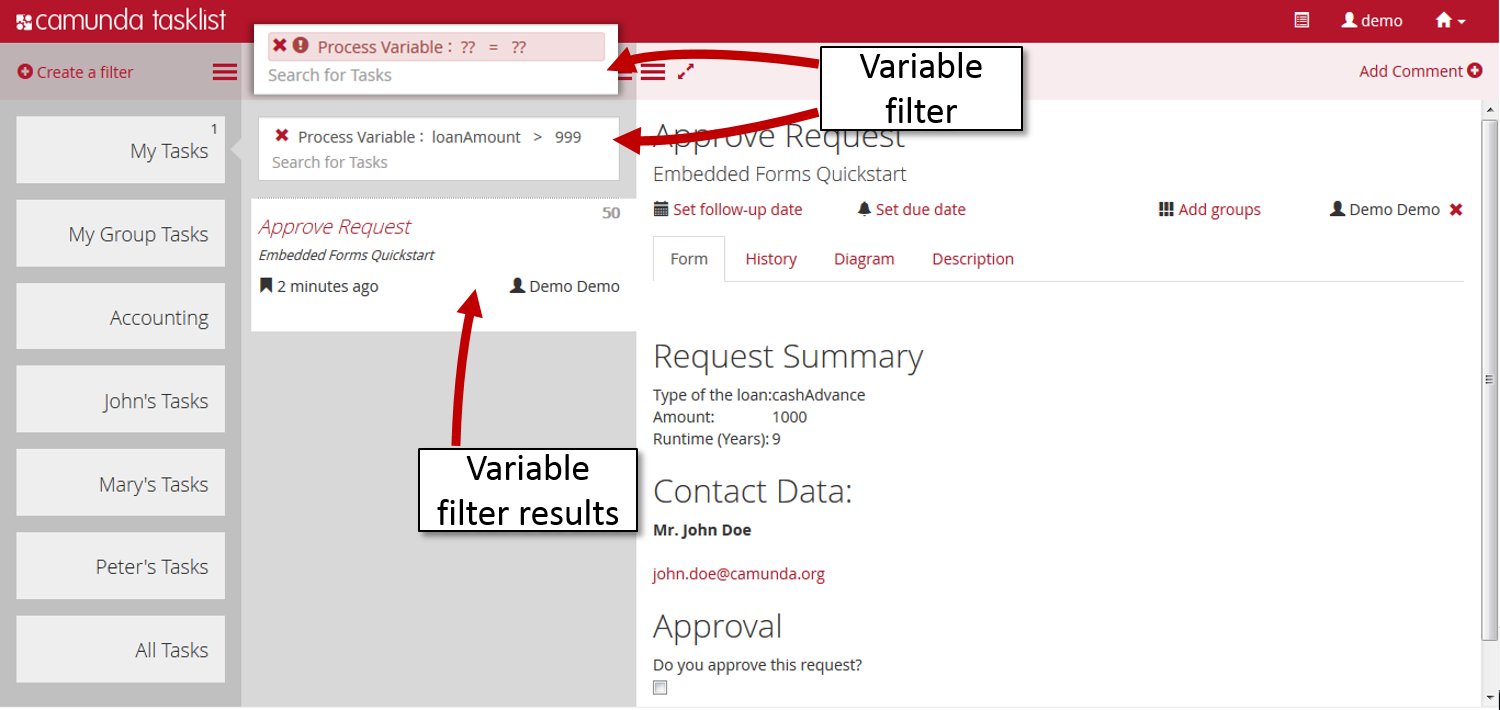

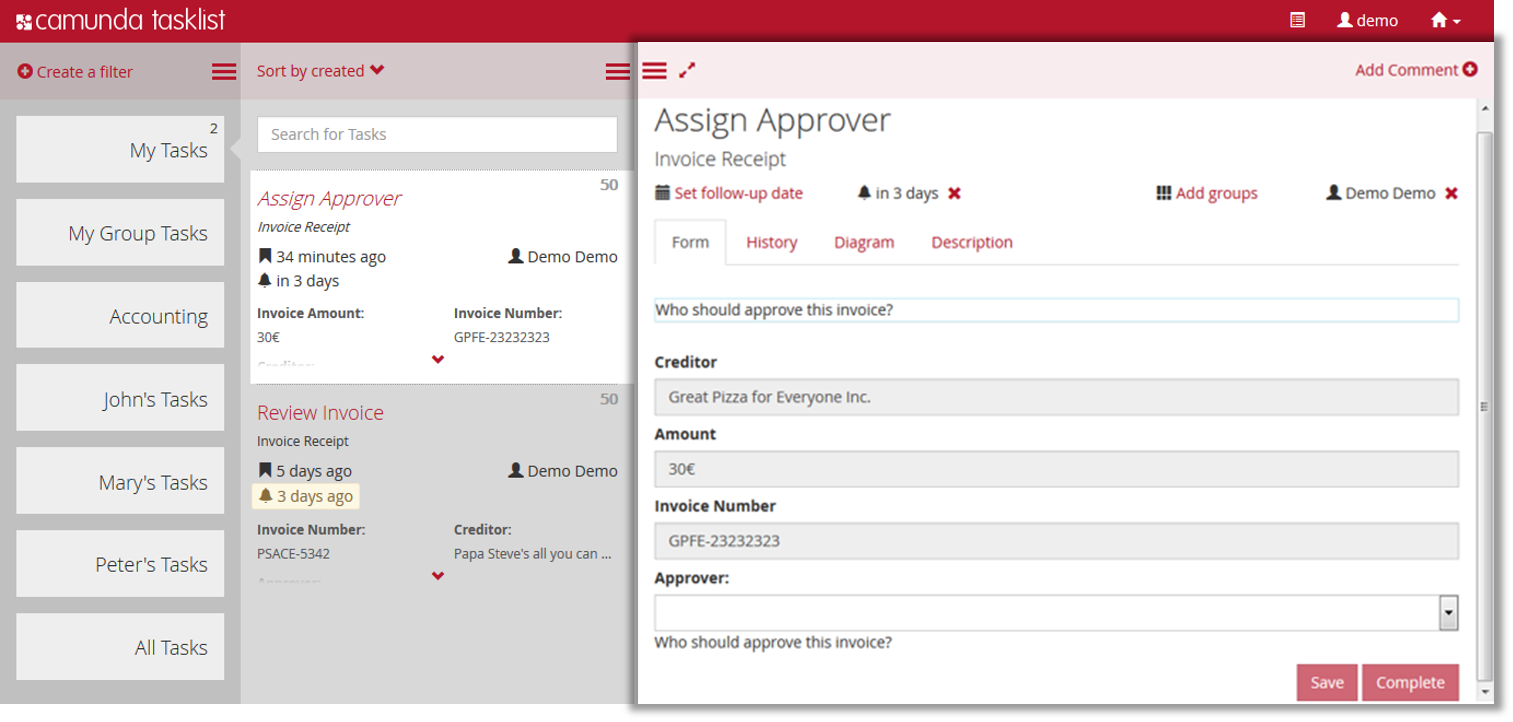

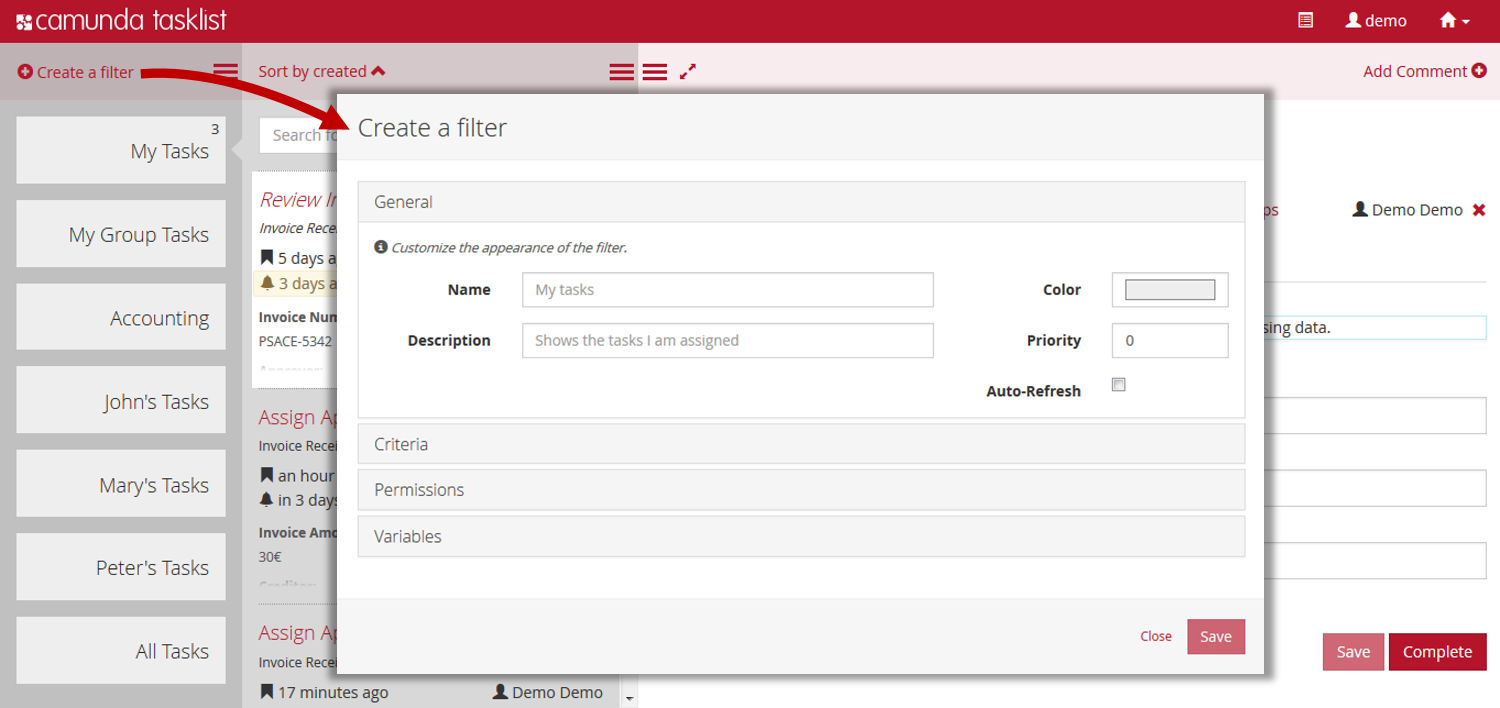

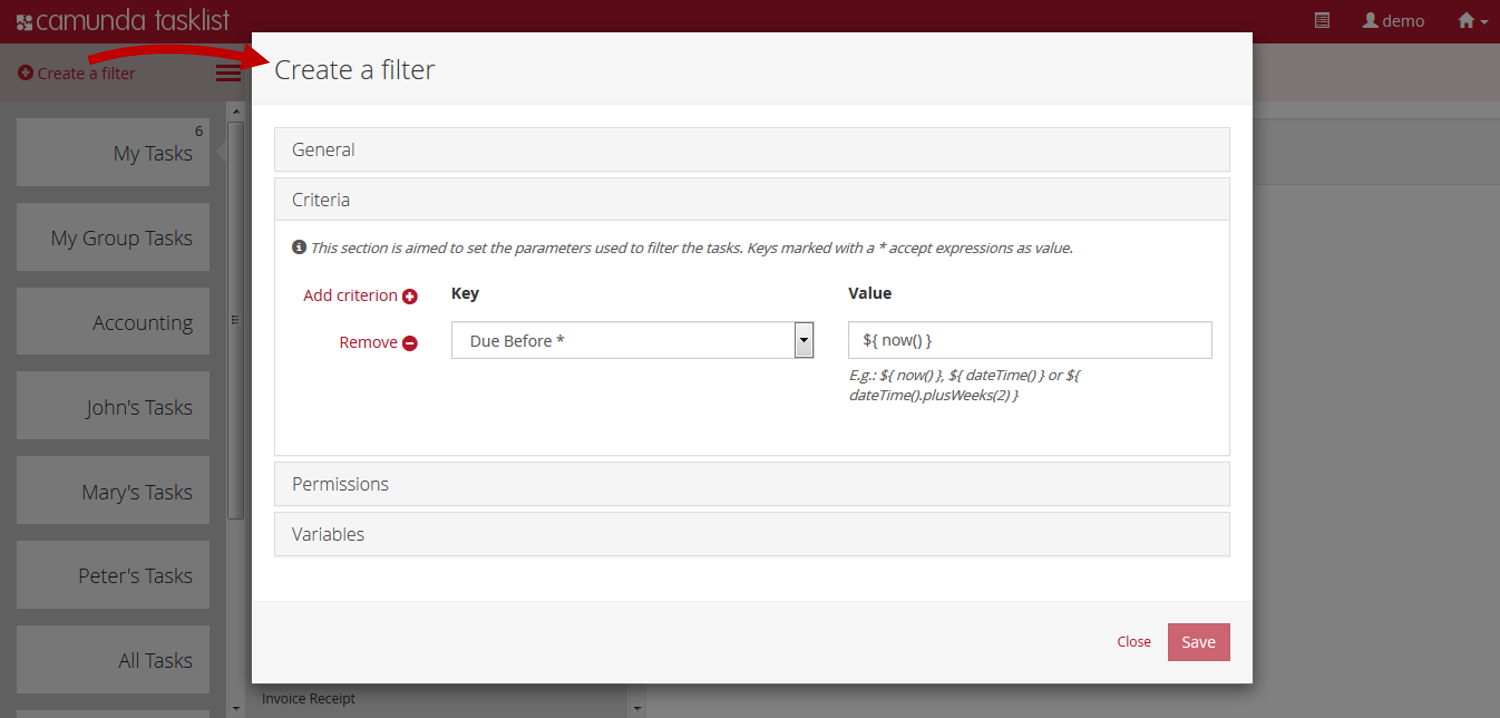

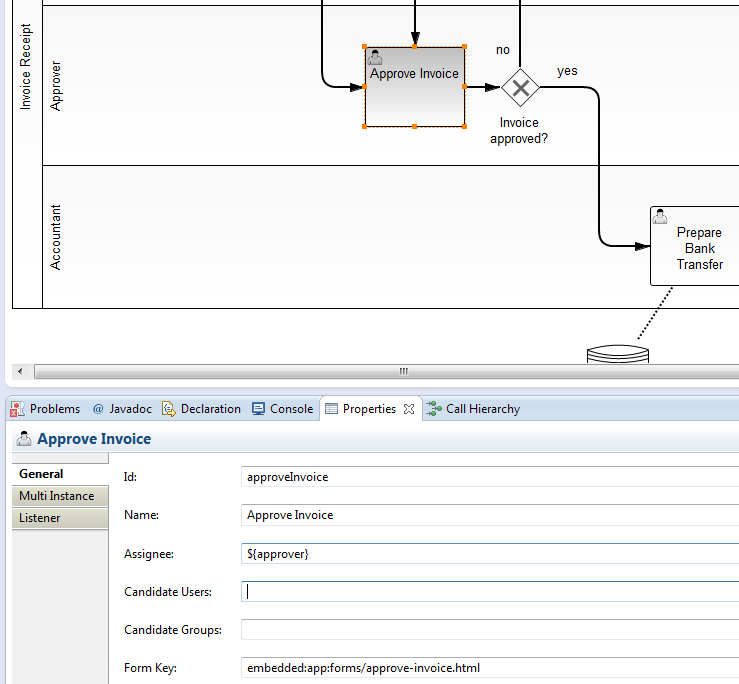

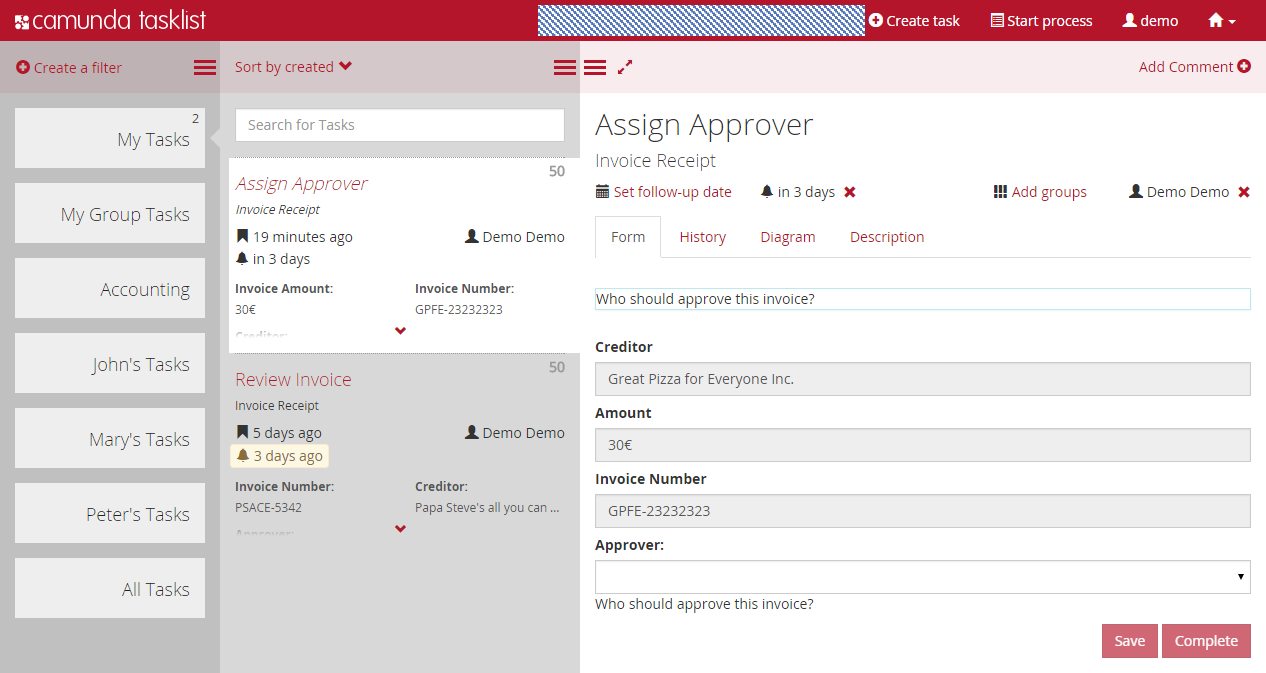

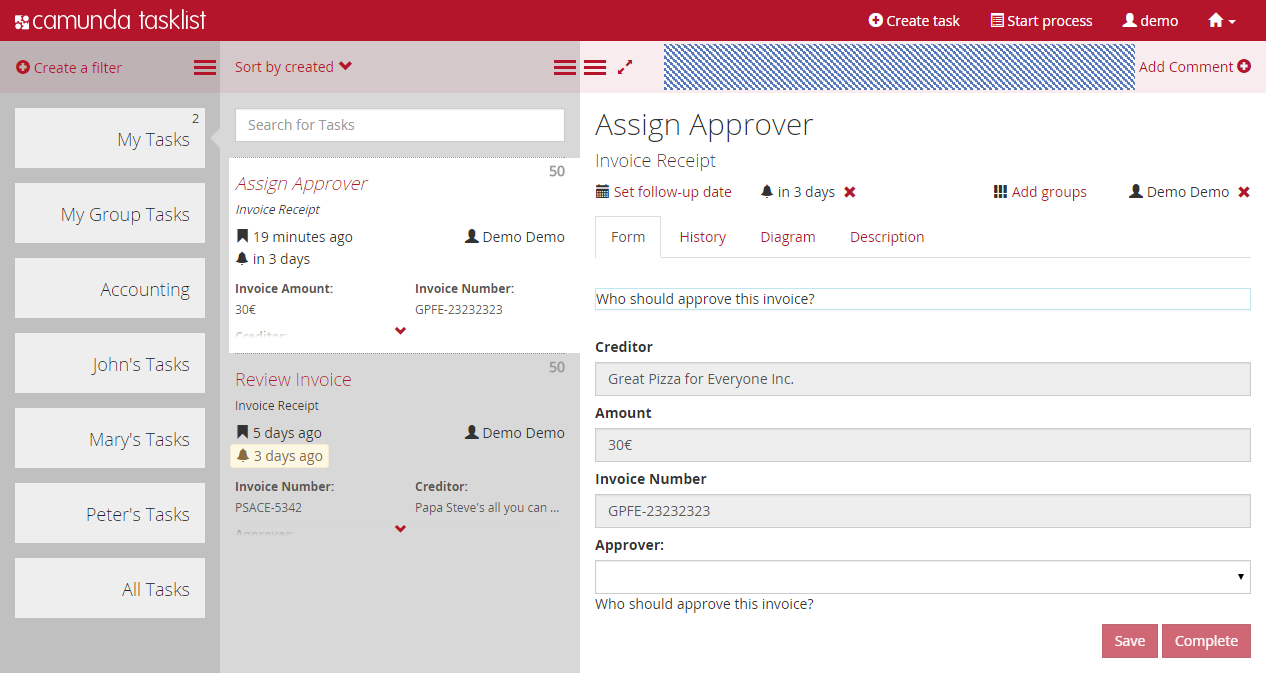

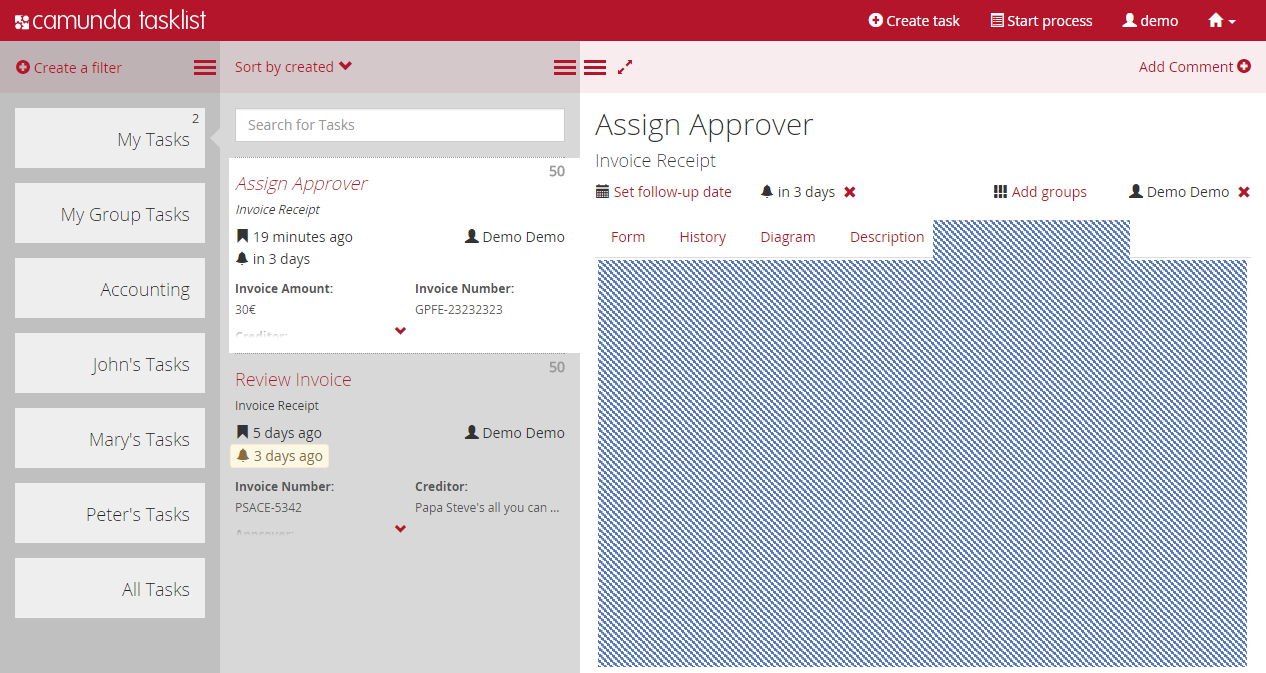

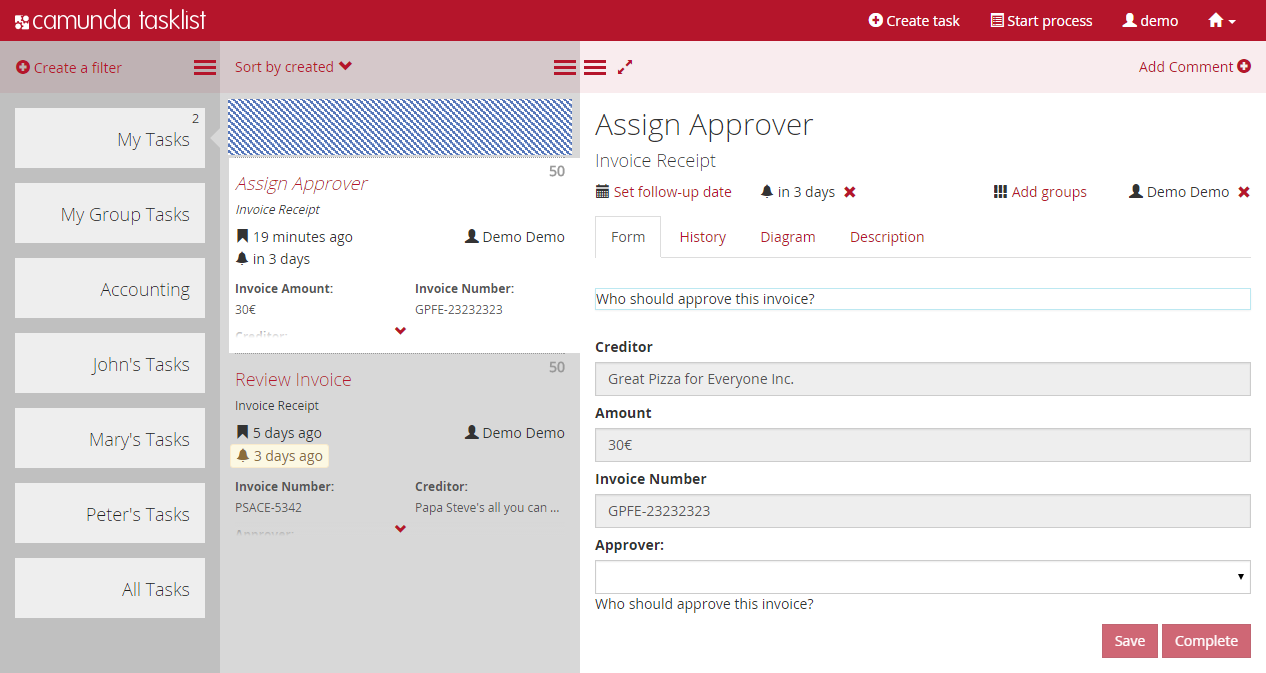

- Camunda Tasklist A web application for human workflow management and user tasks that allows process participants to inspect their workflow tasks and navigate to task forms in order to work on the tasks and provide data input.

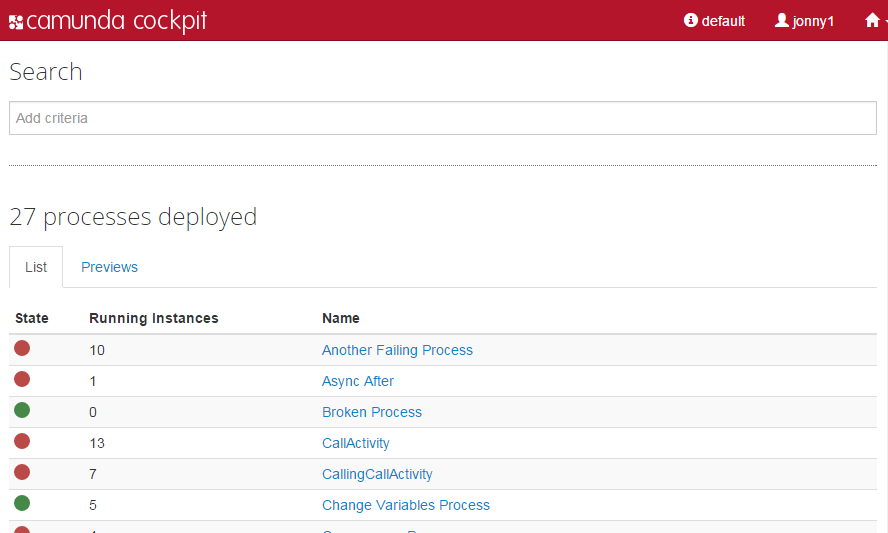

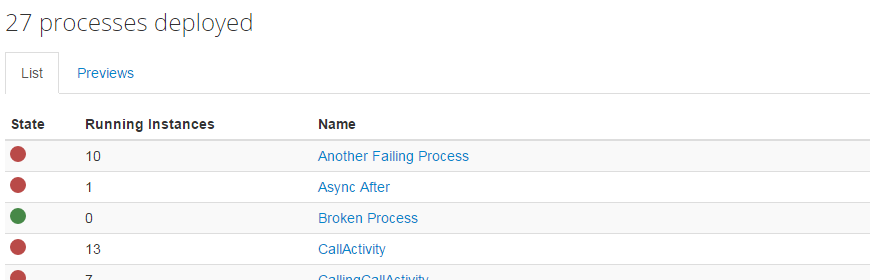

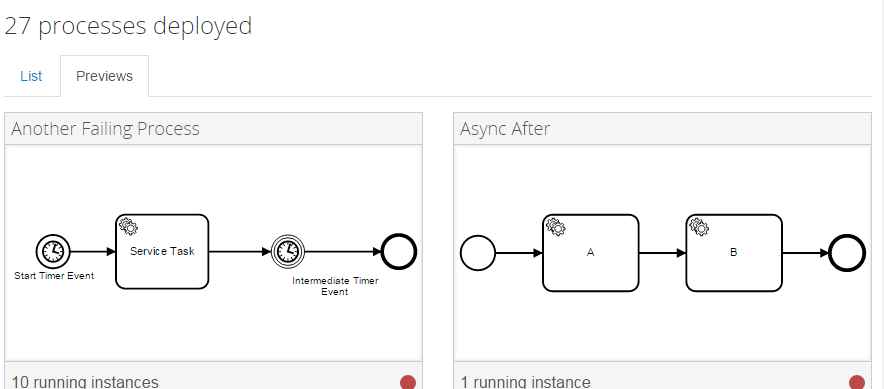

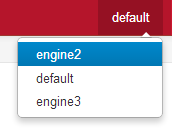

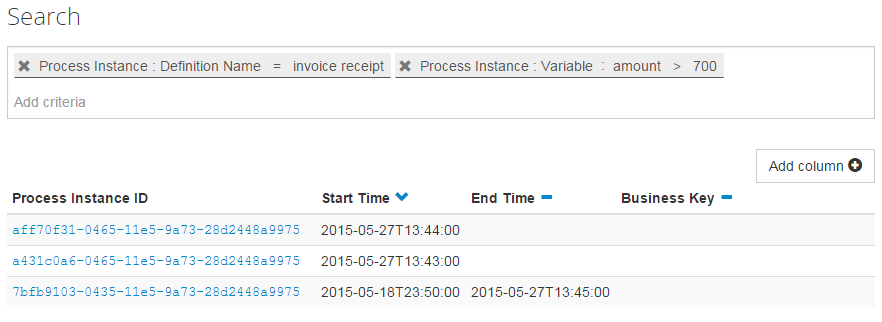

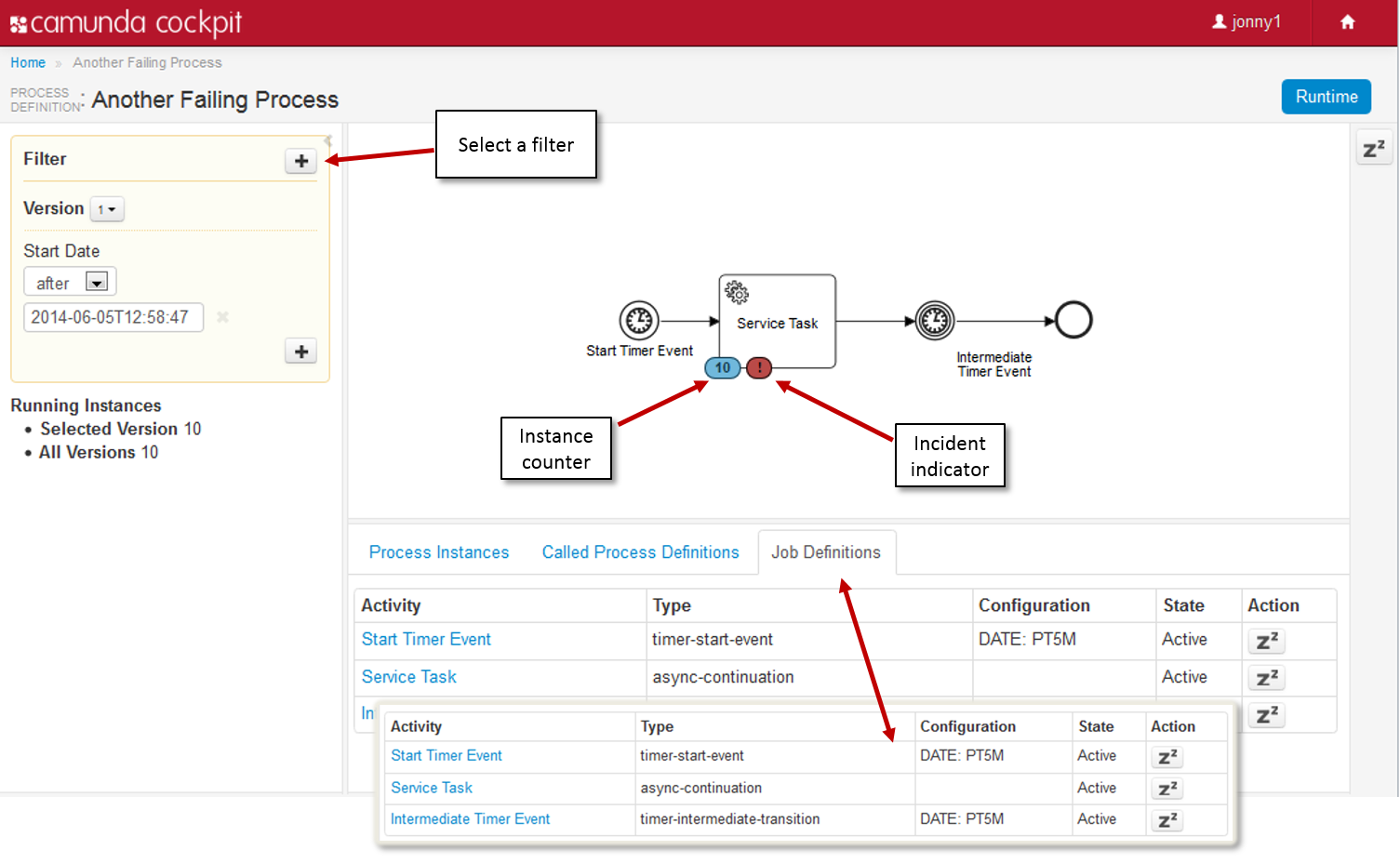

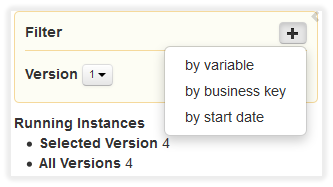

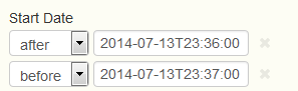

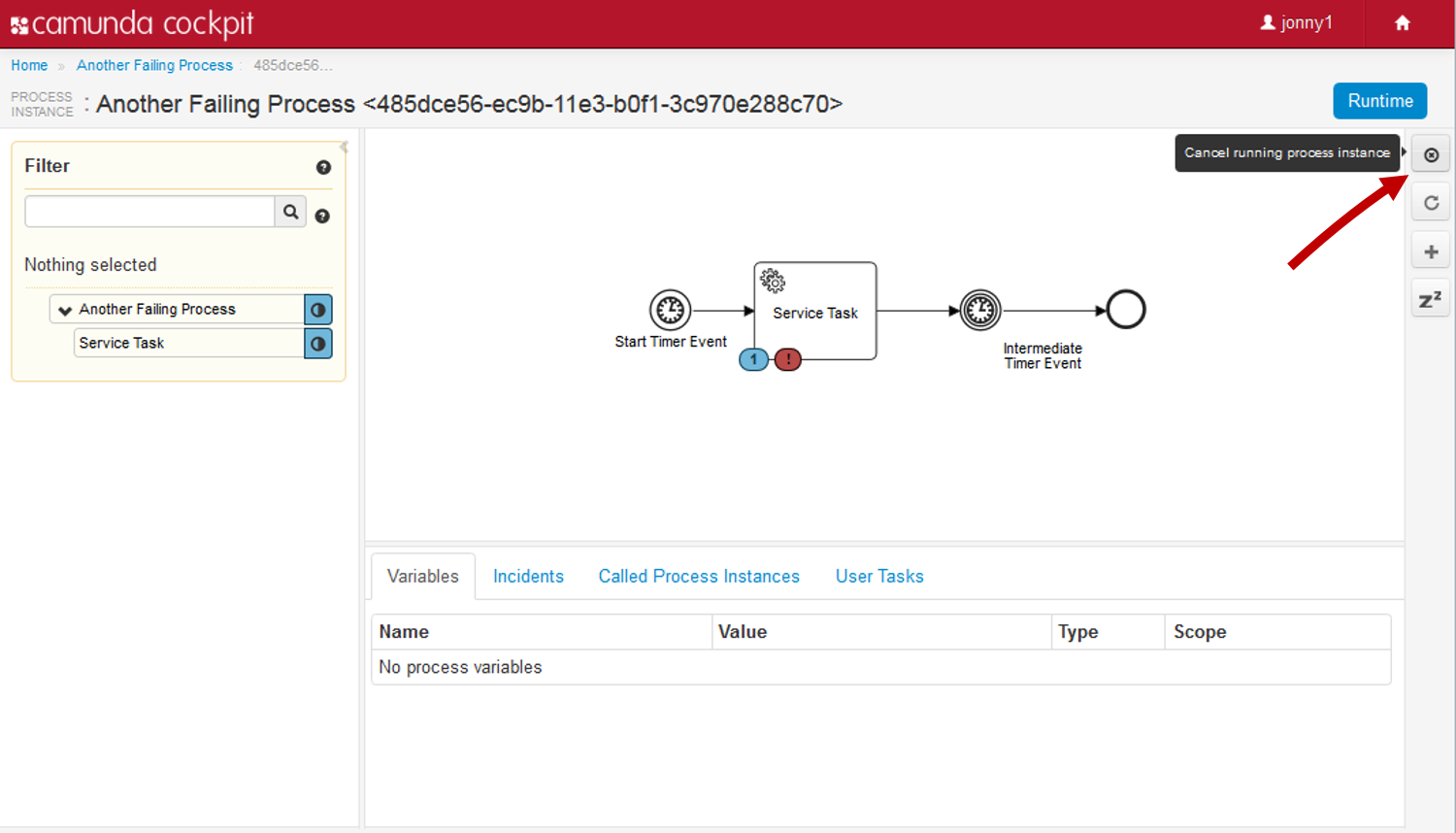

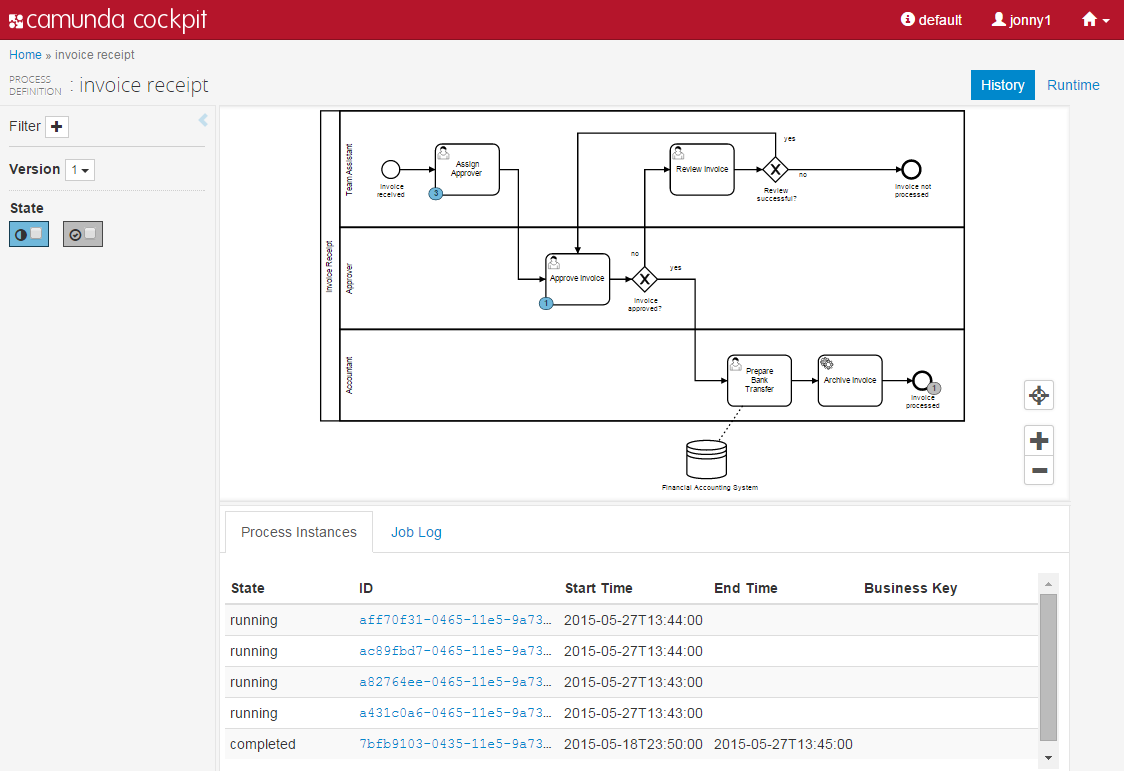

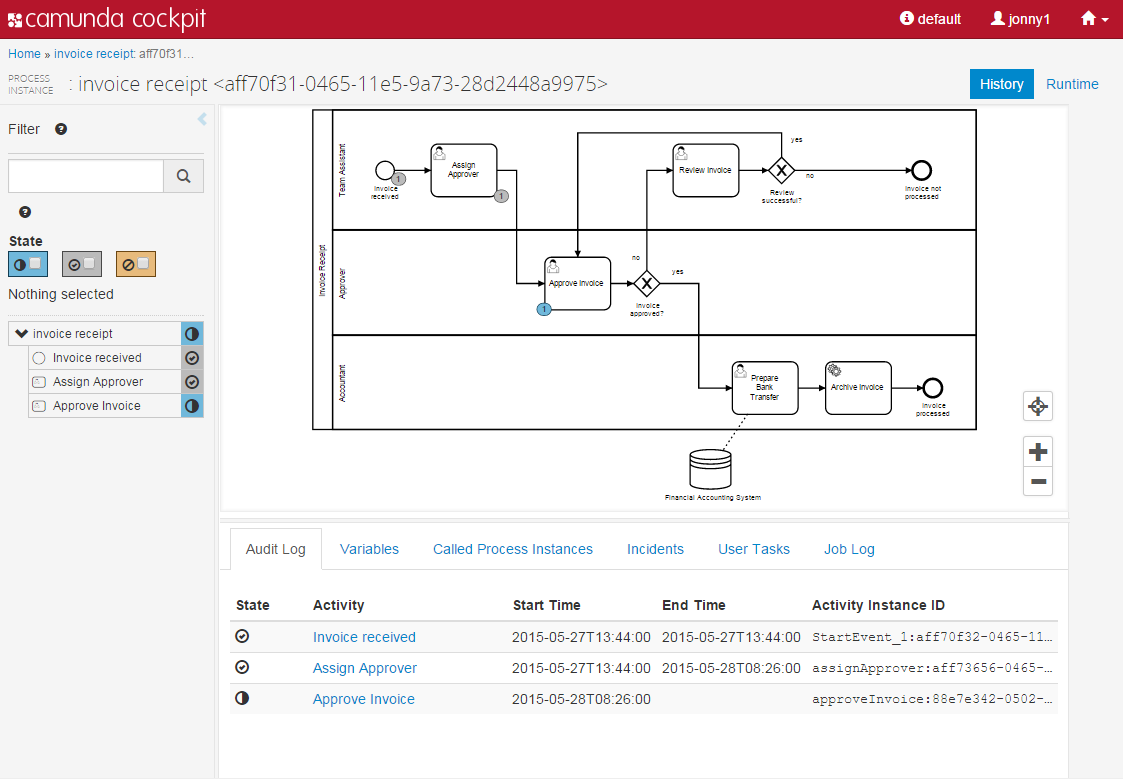

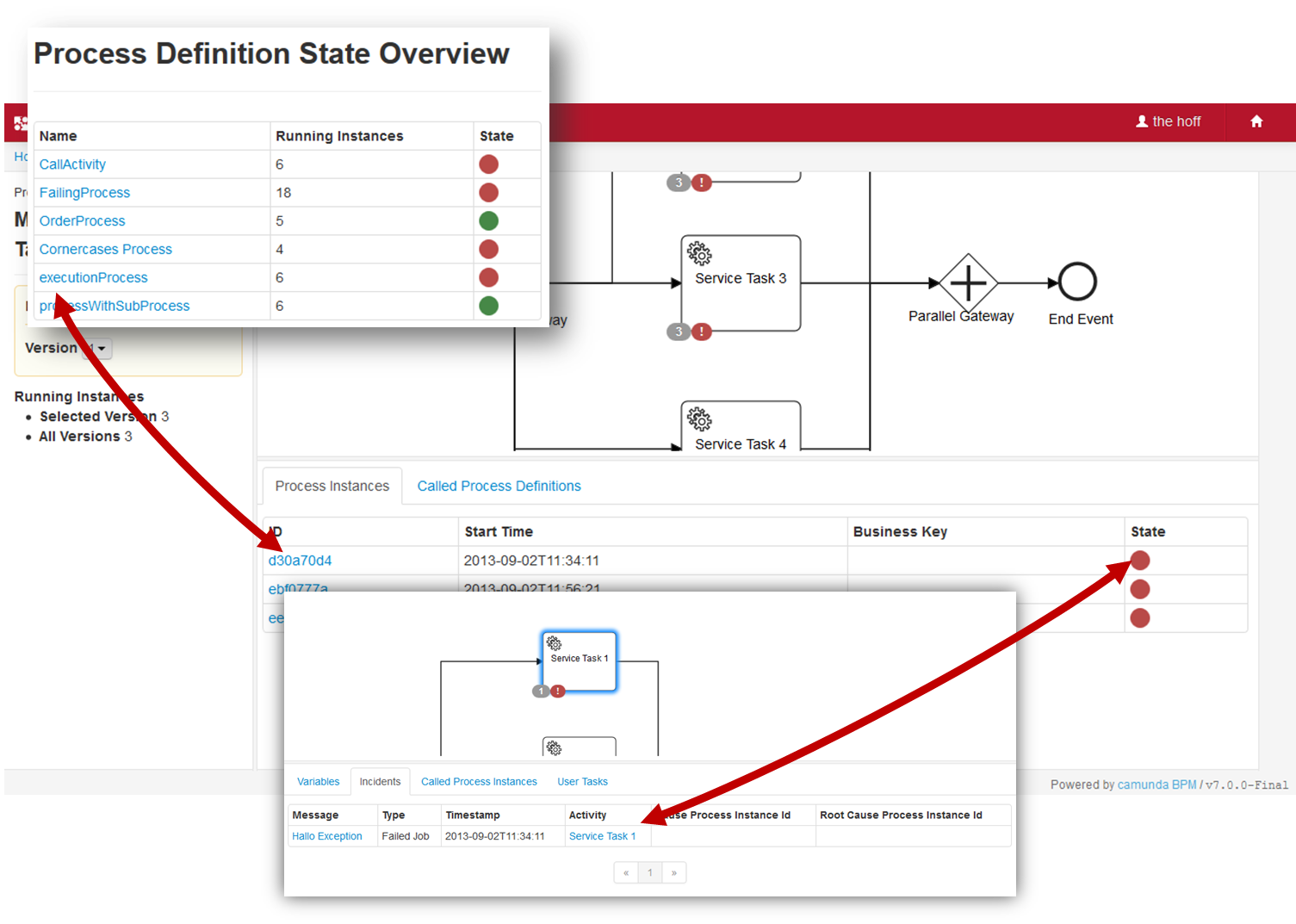

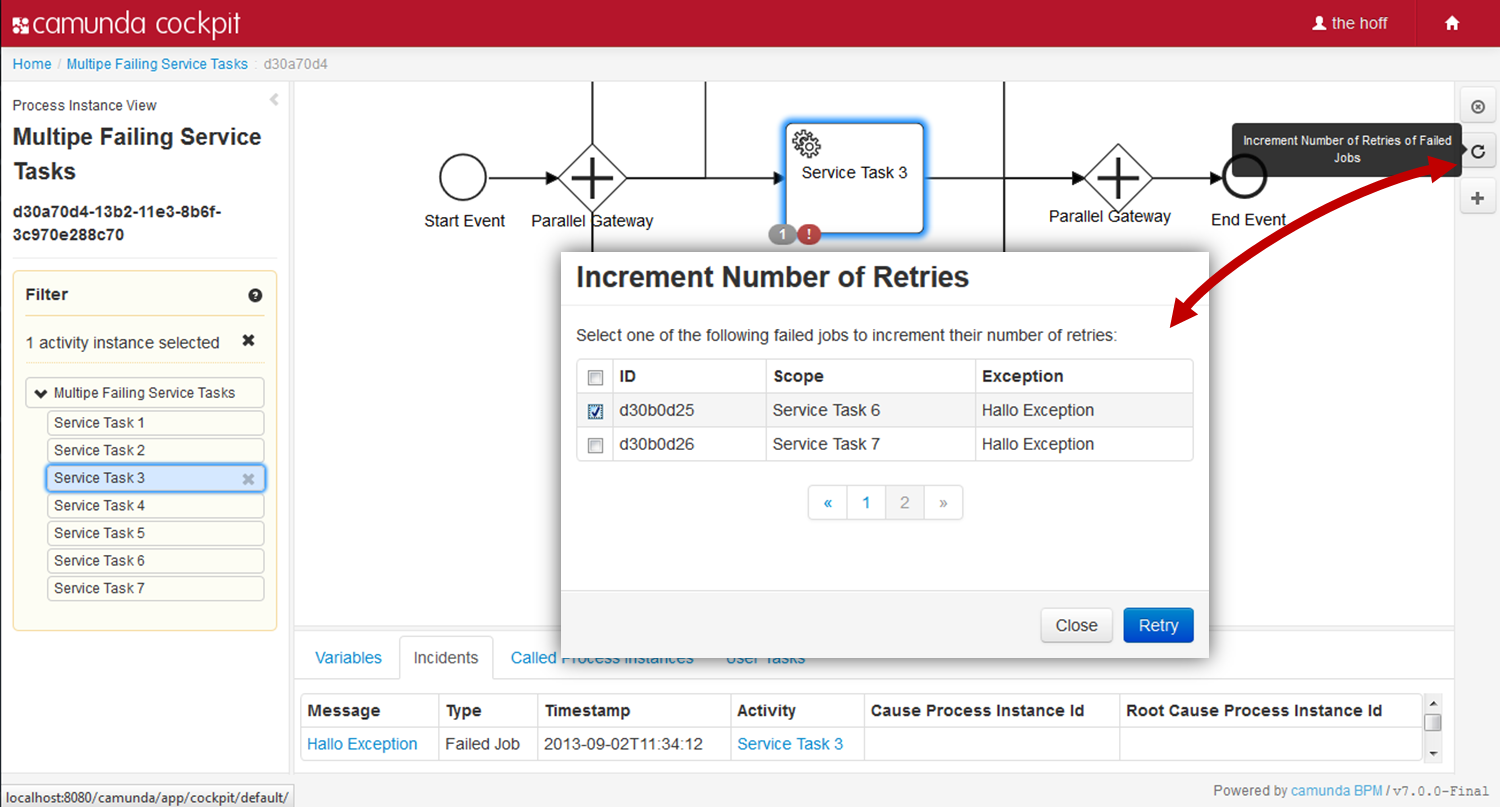

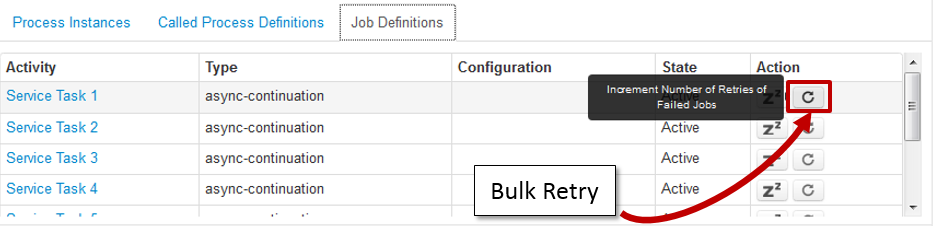

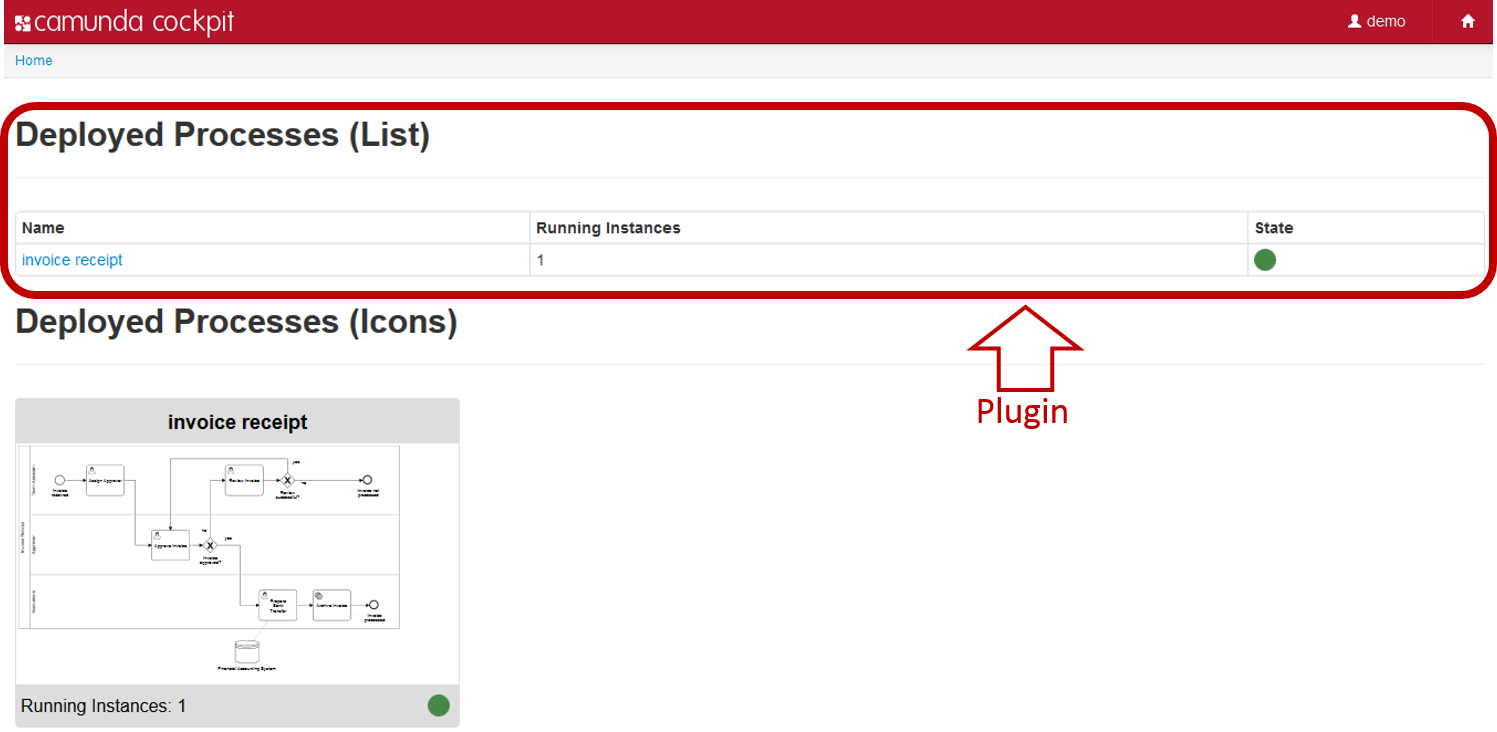

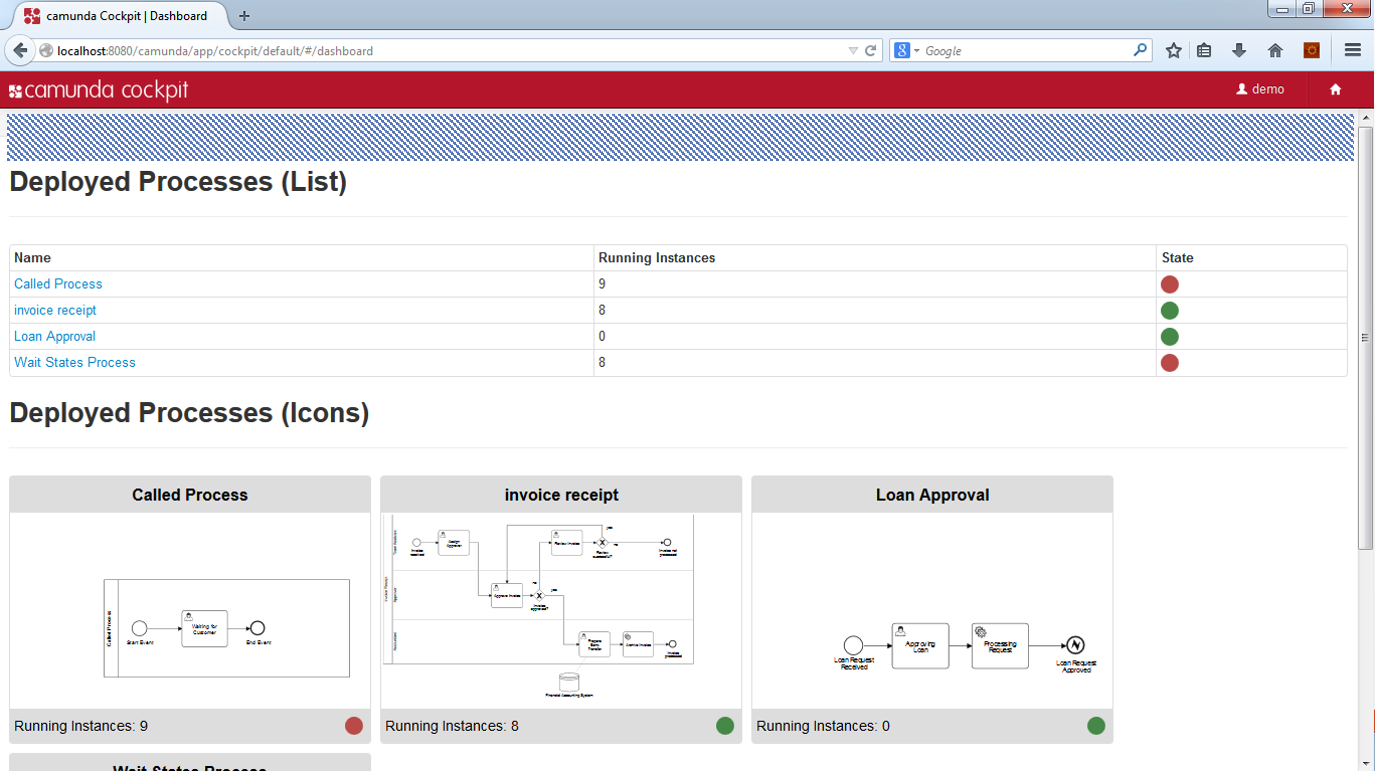

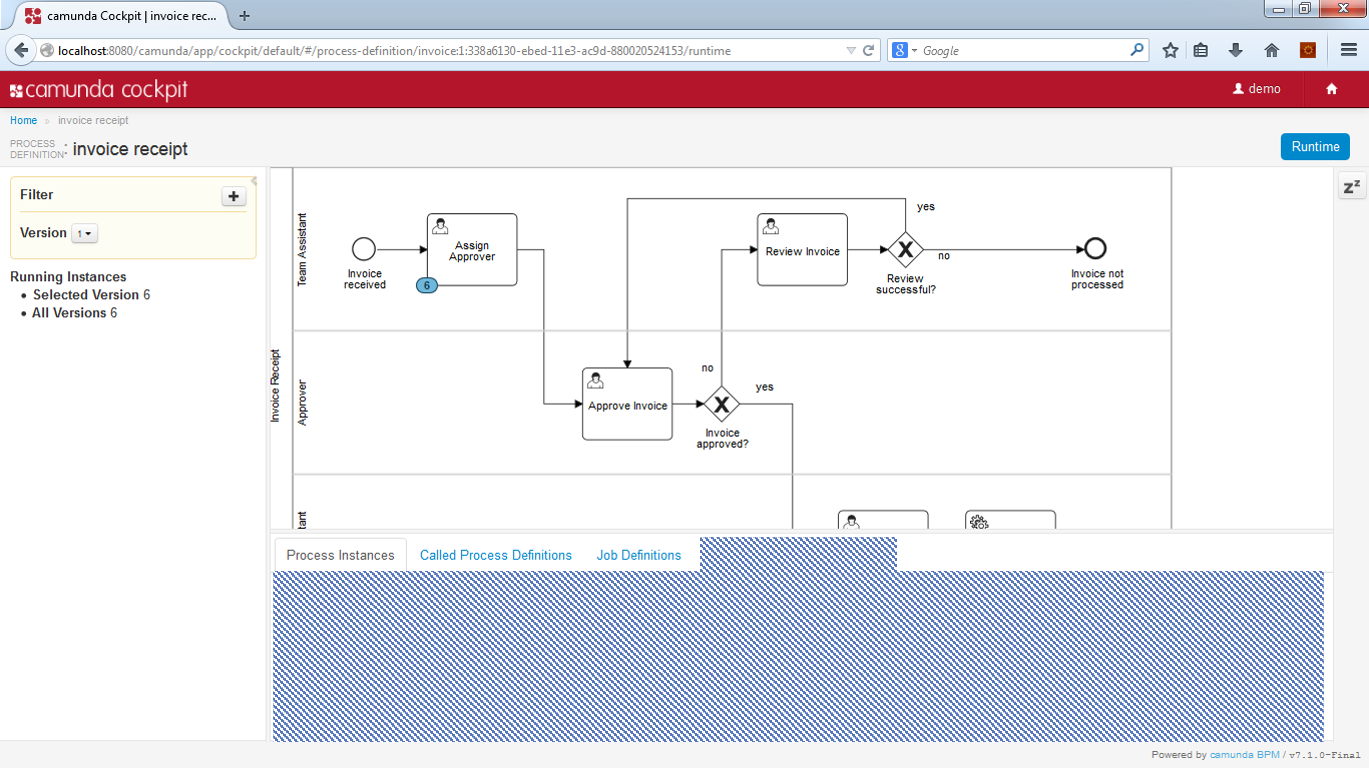

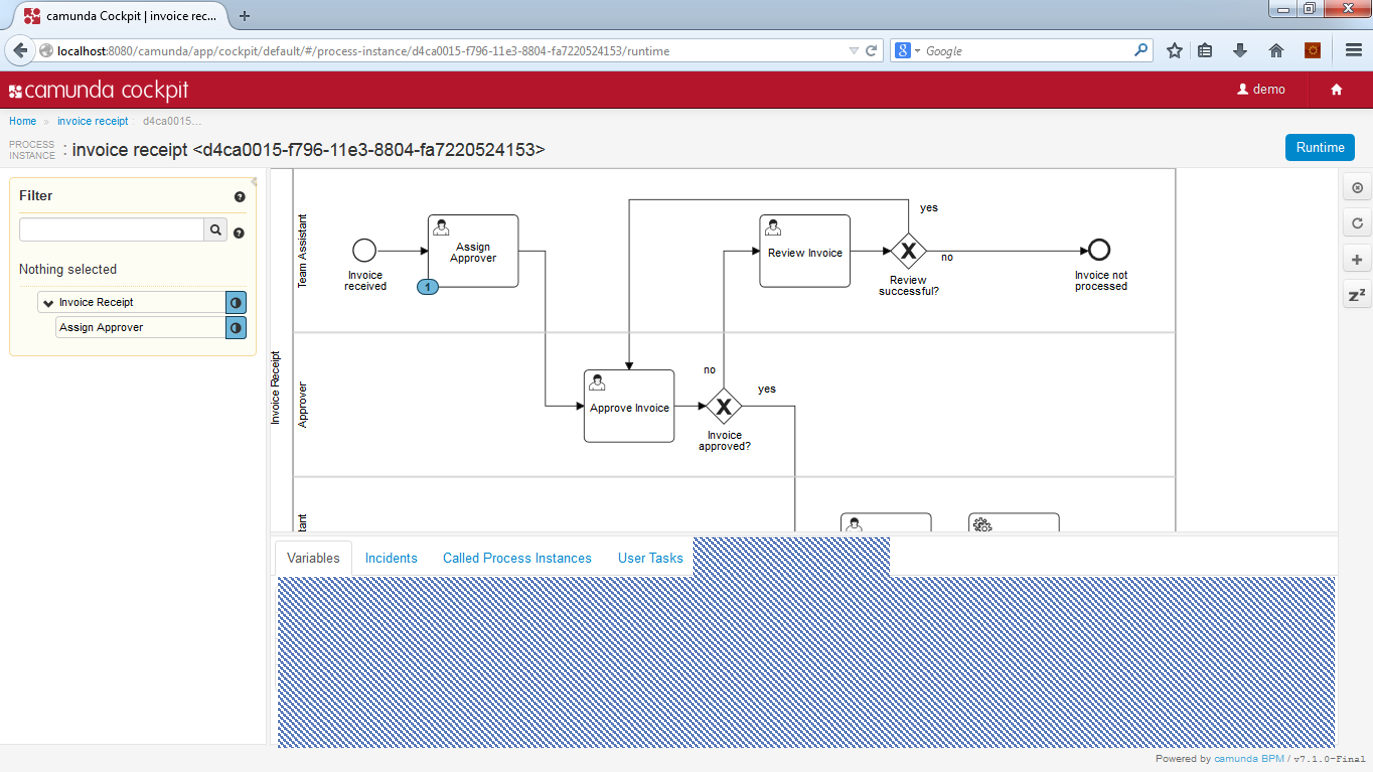

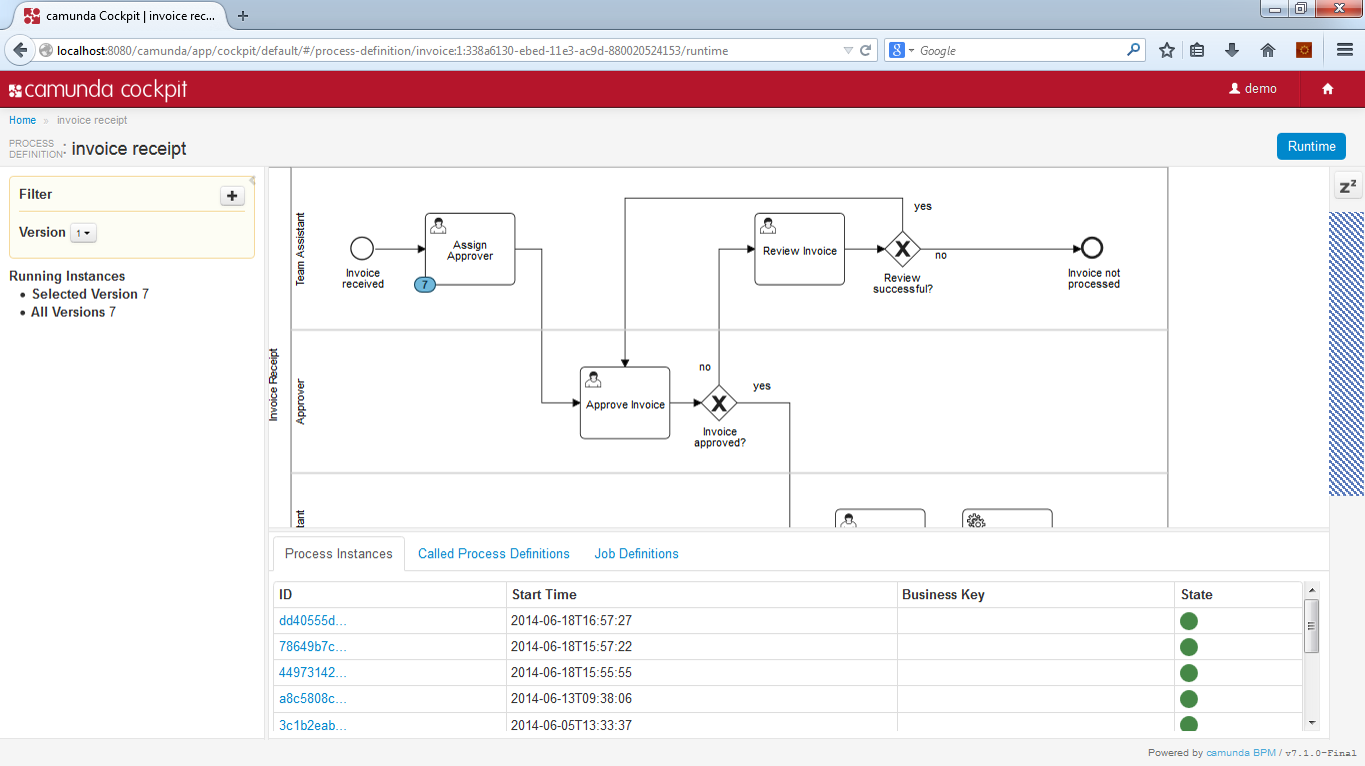

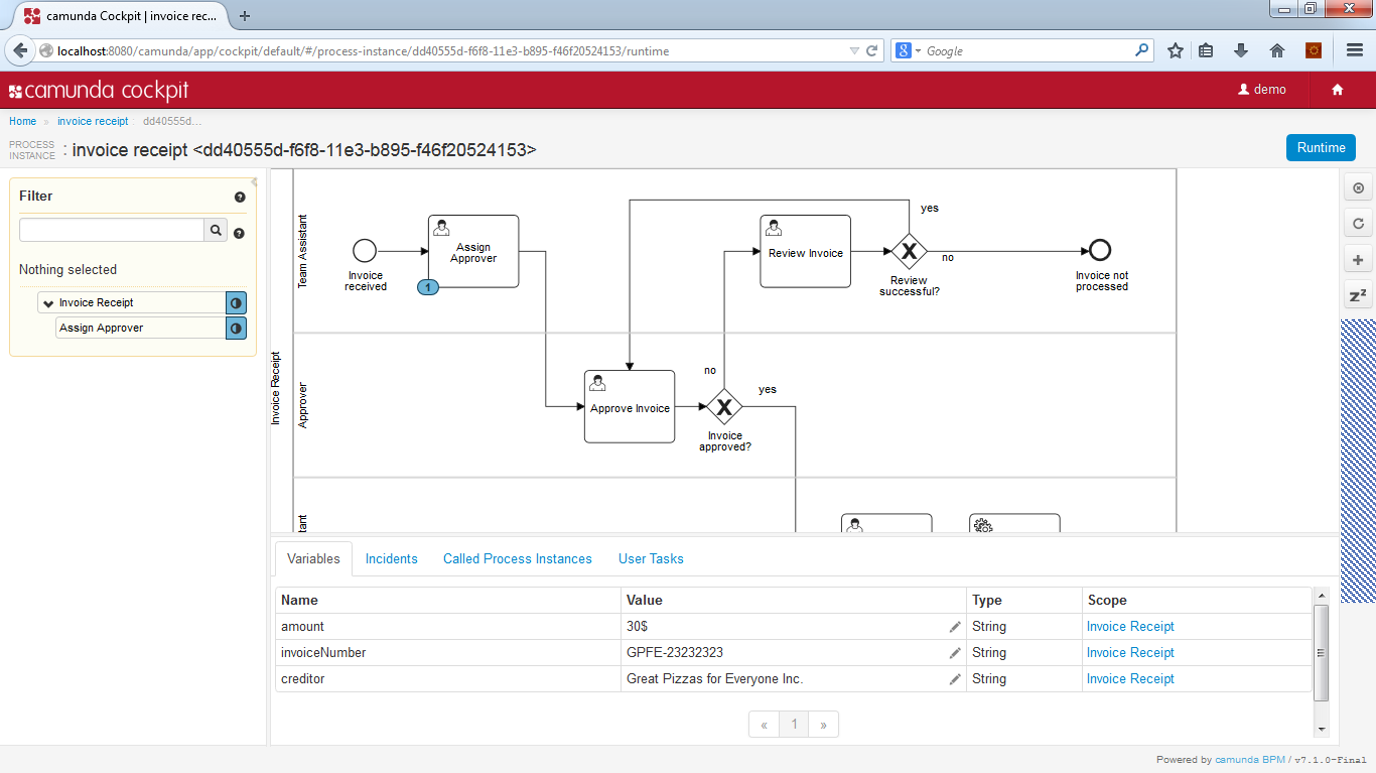

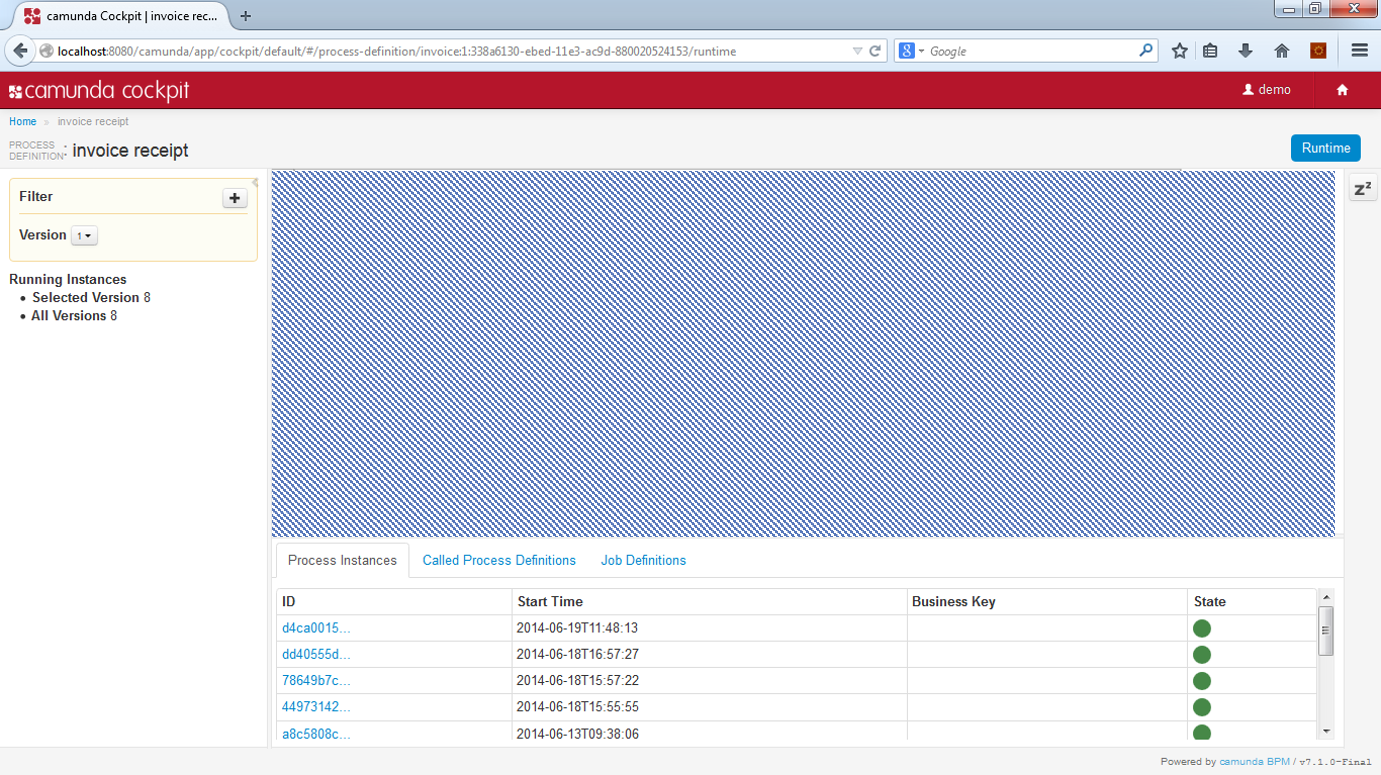

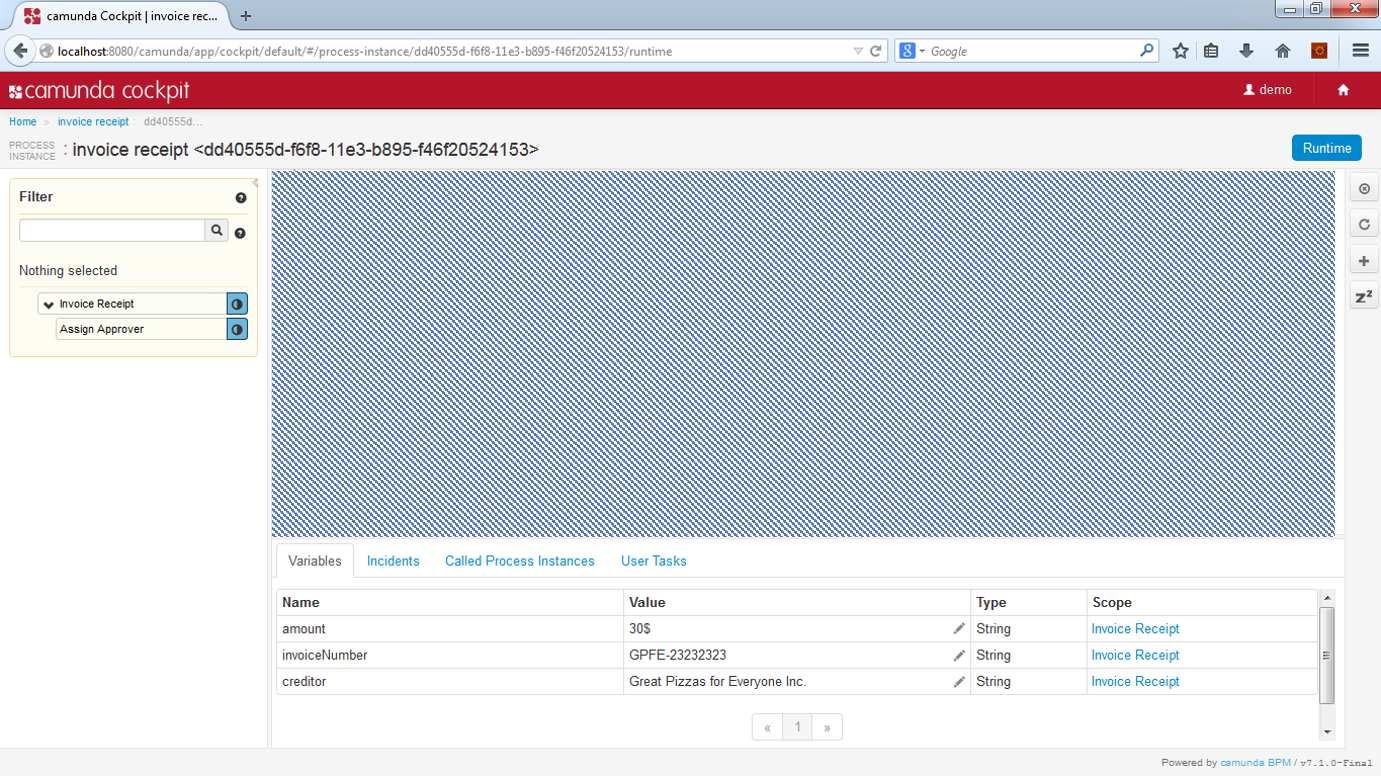

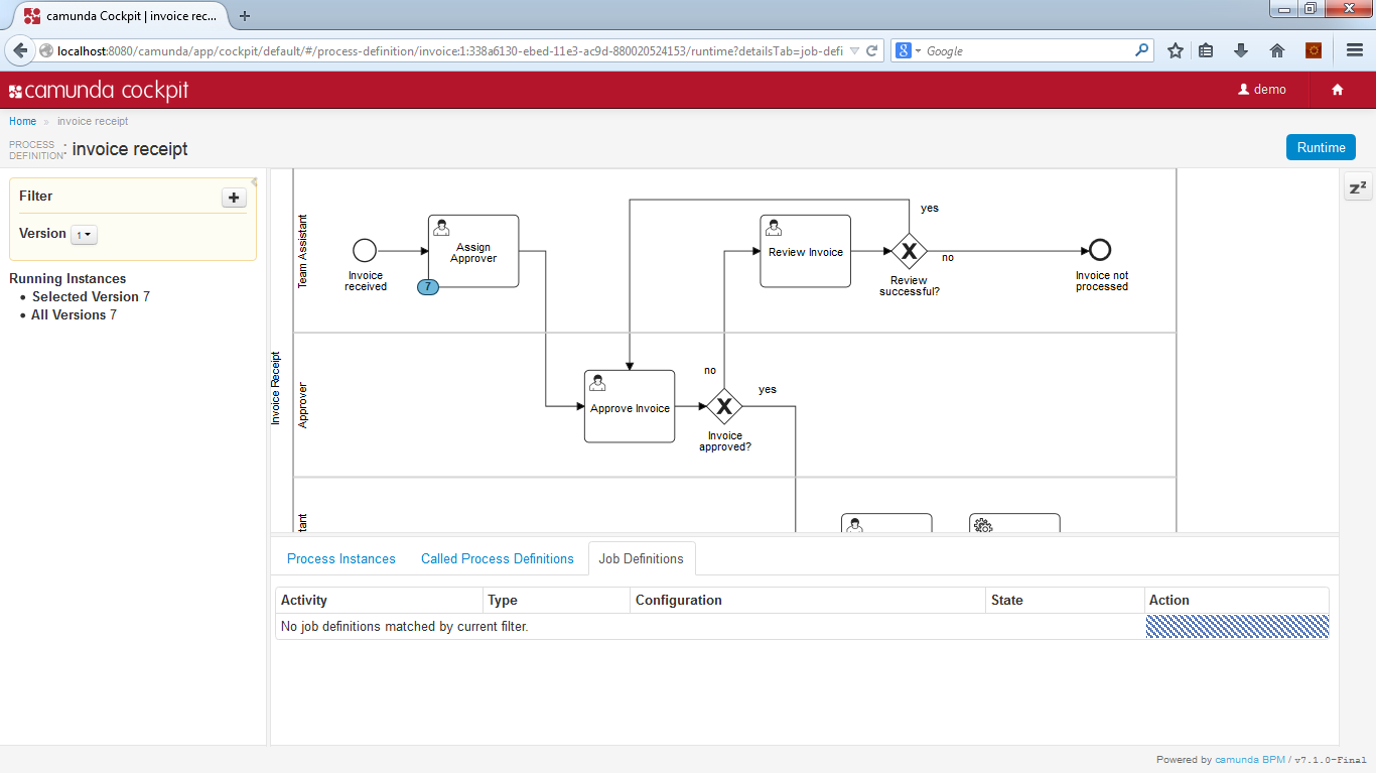

- Camunda Cockpit A web application for process monitoring and operations that allows you to search for process instances, inspect their state and repair broken instances.

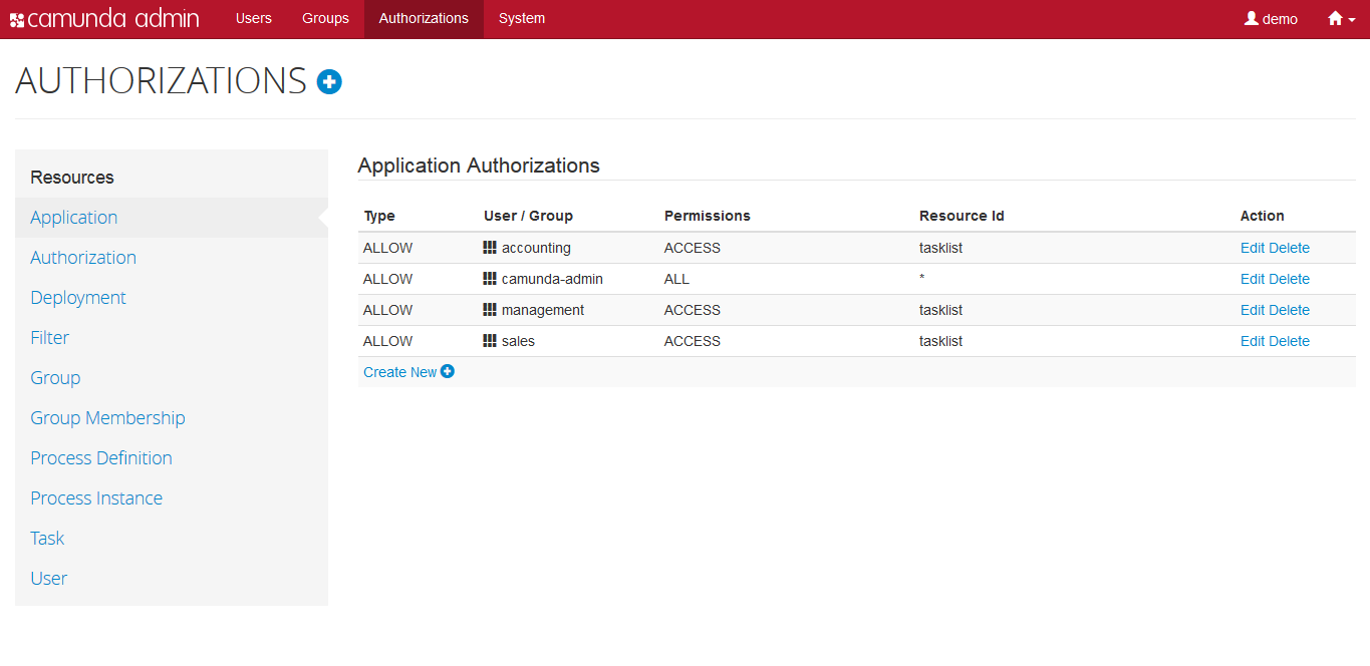

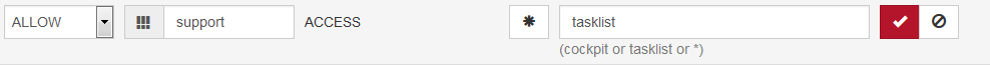

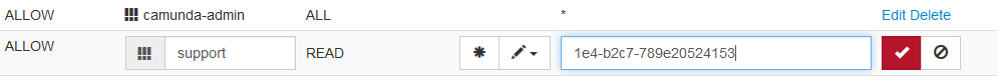

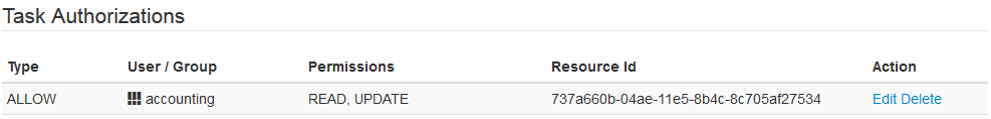

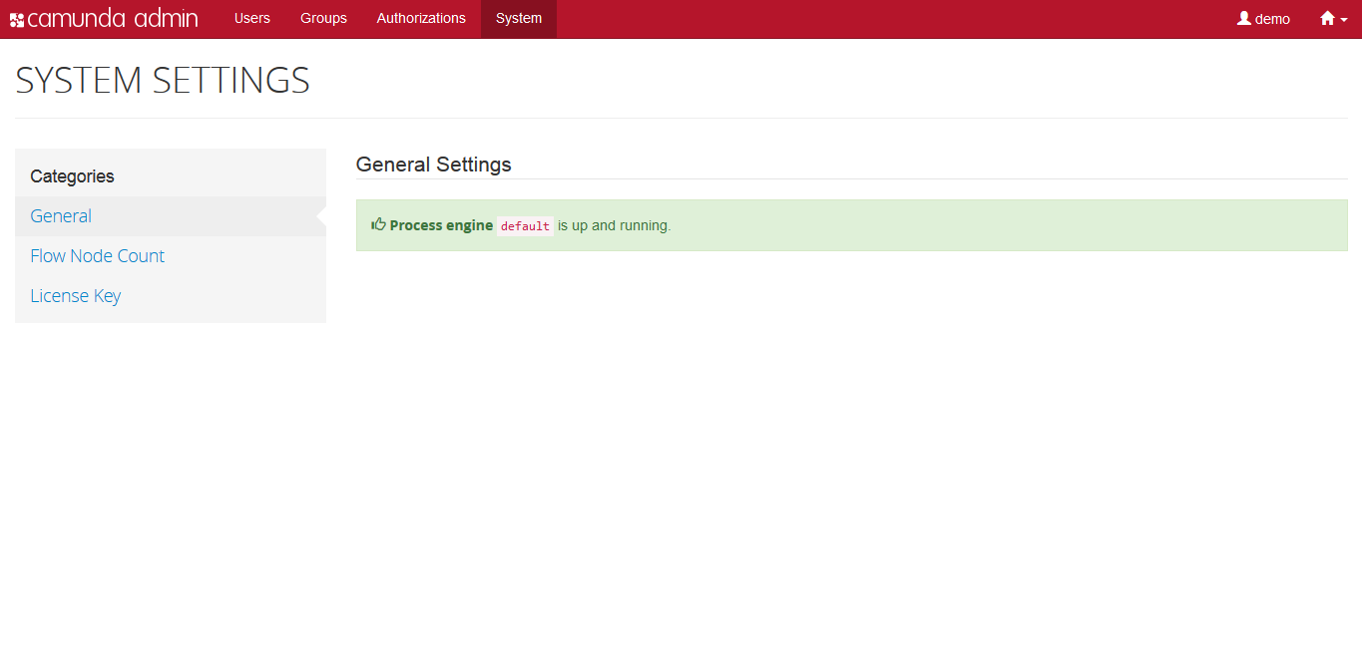

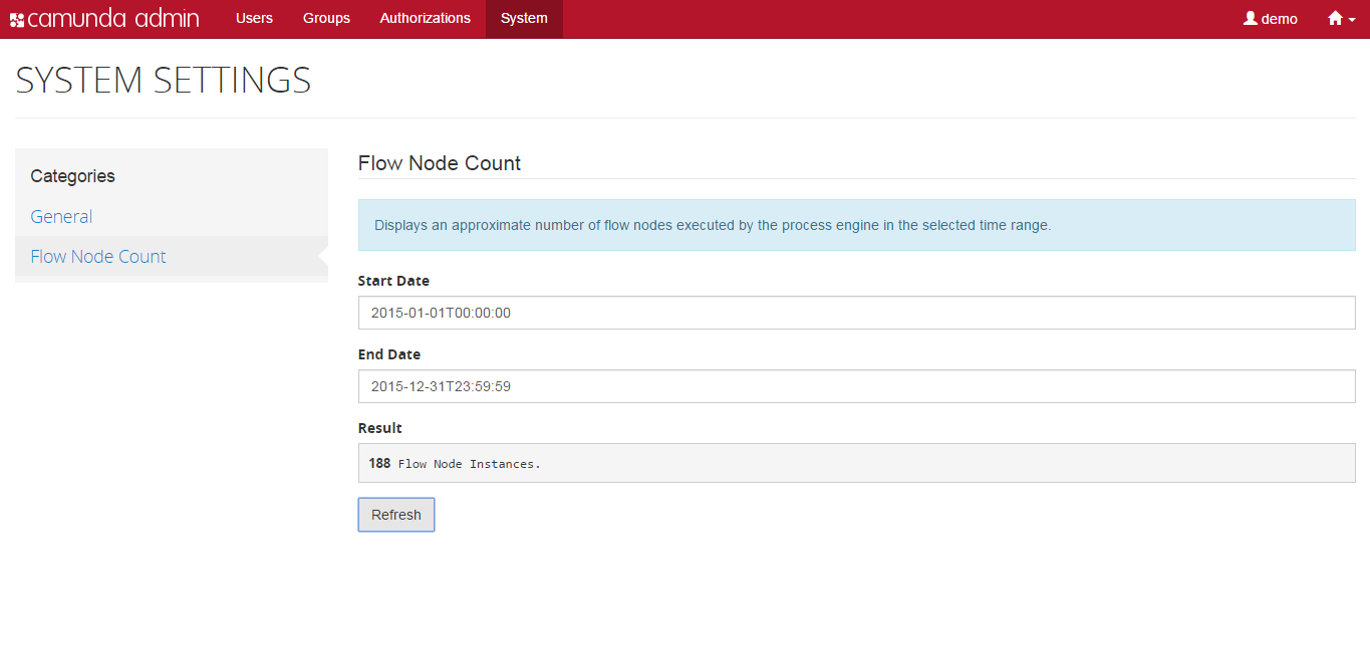

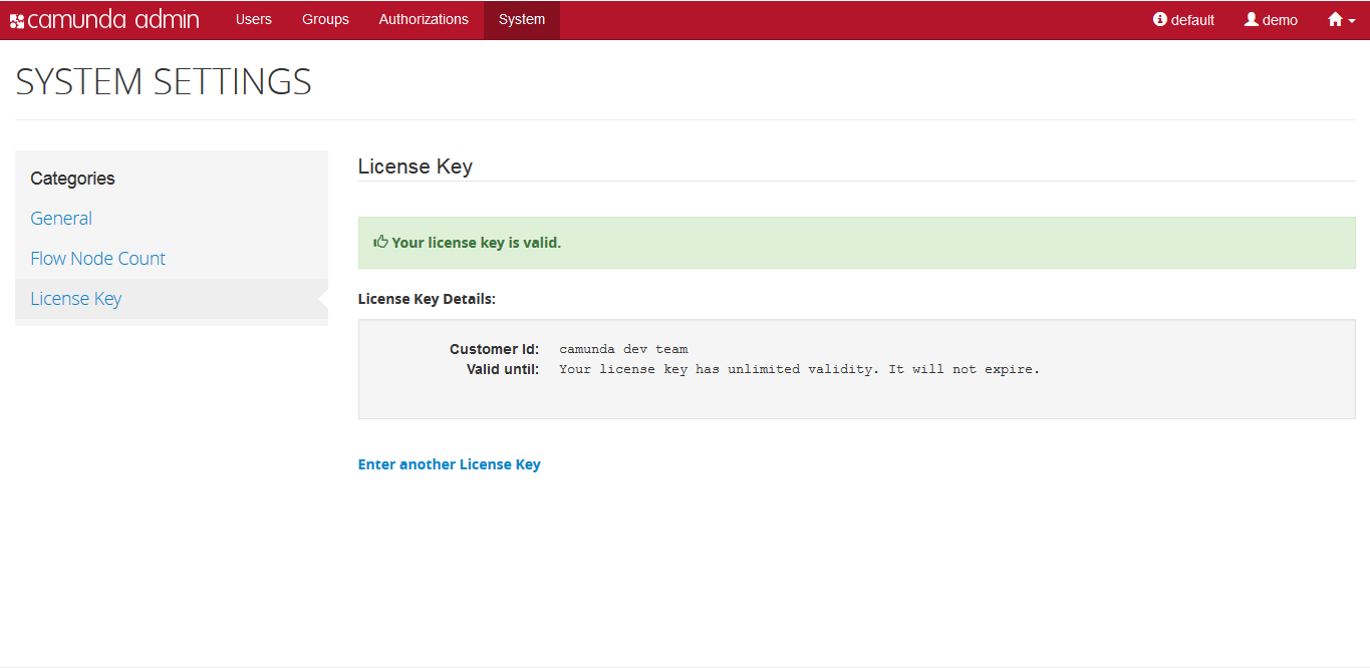

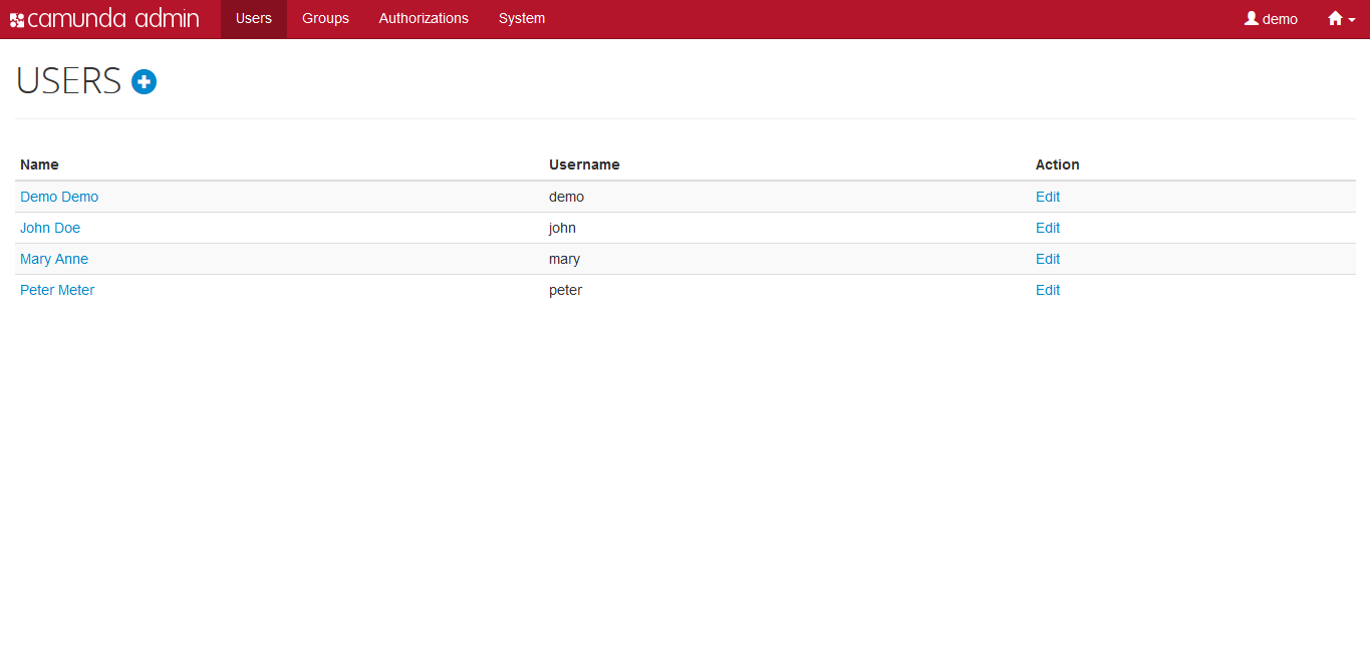

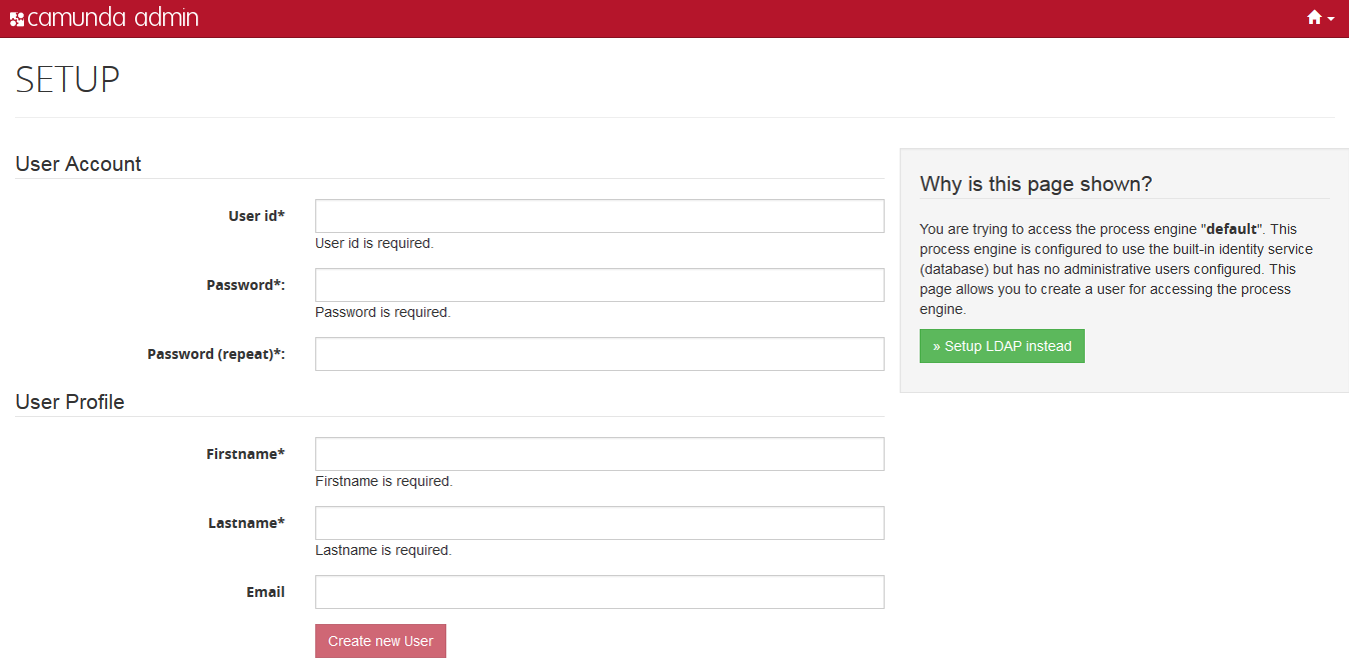

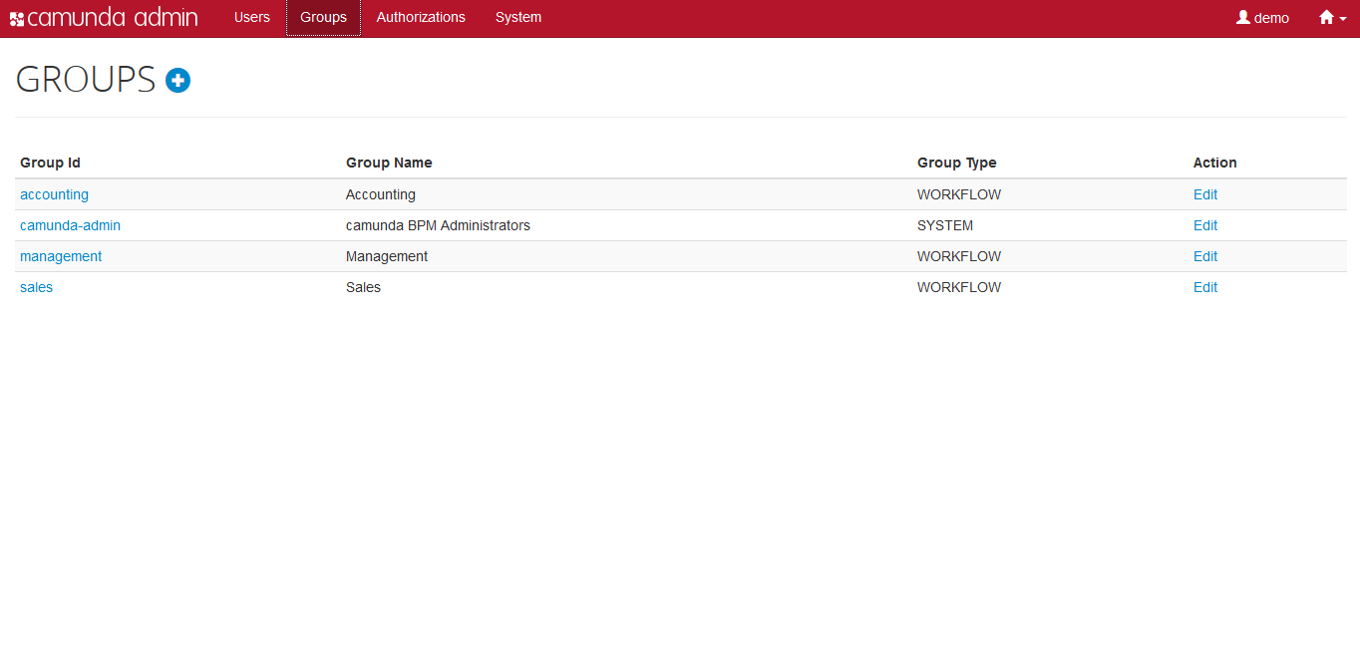

- Camunda Admin A web application for user management that allows you to manage users, groups and authorizations.

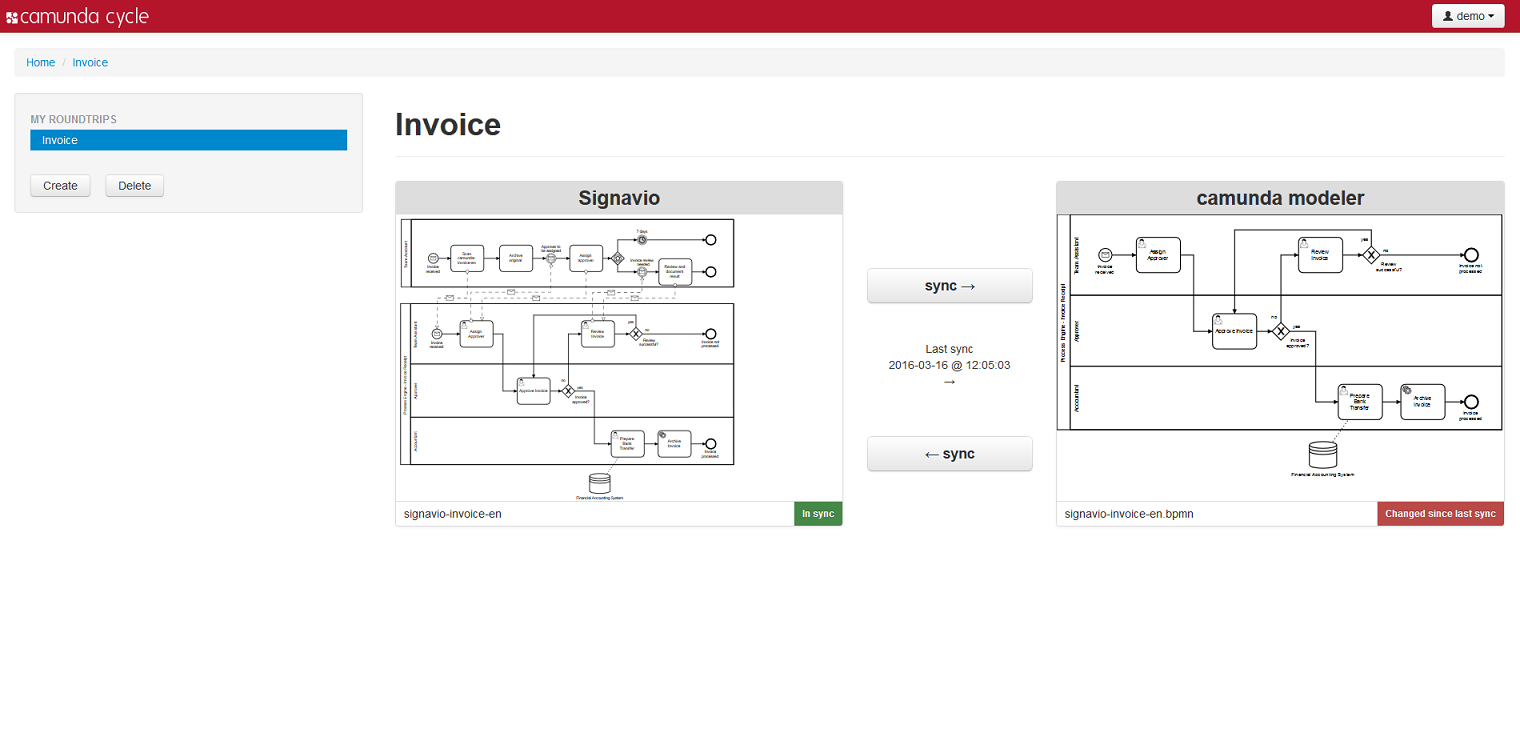

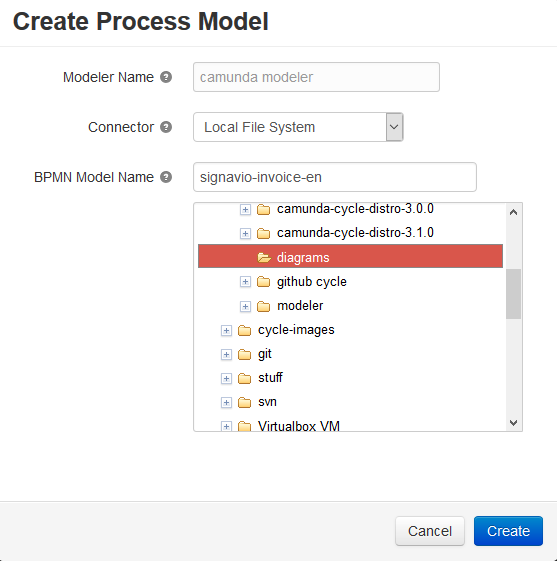

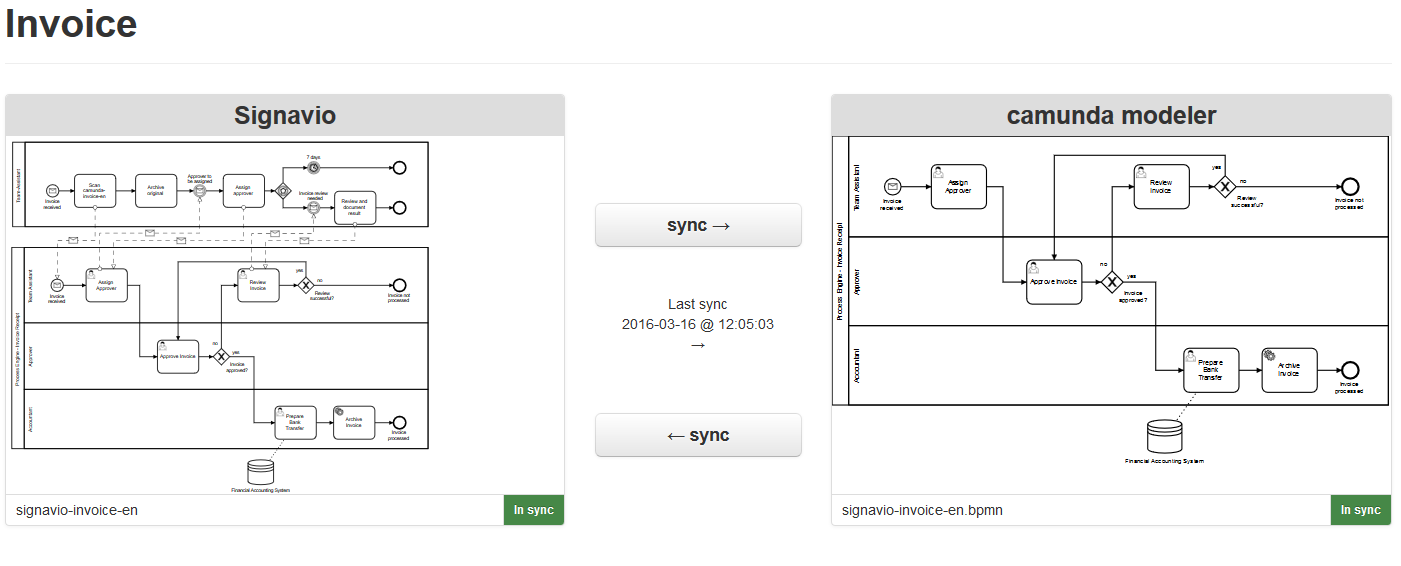

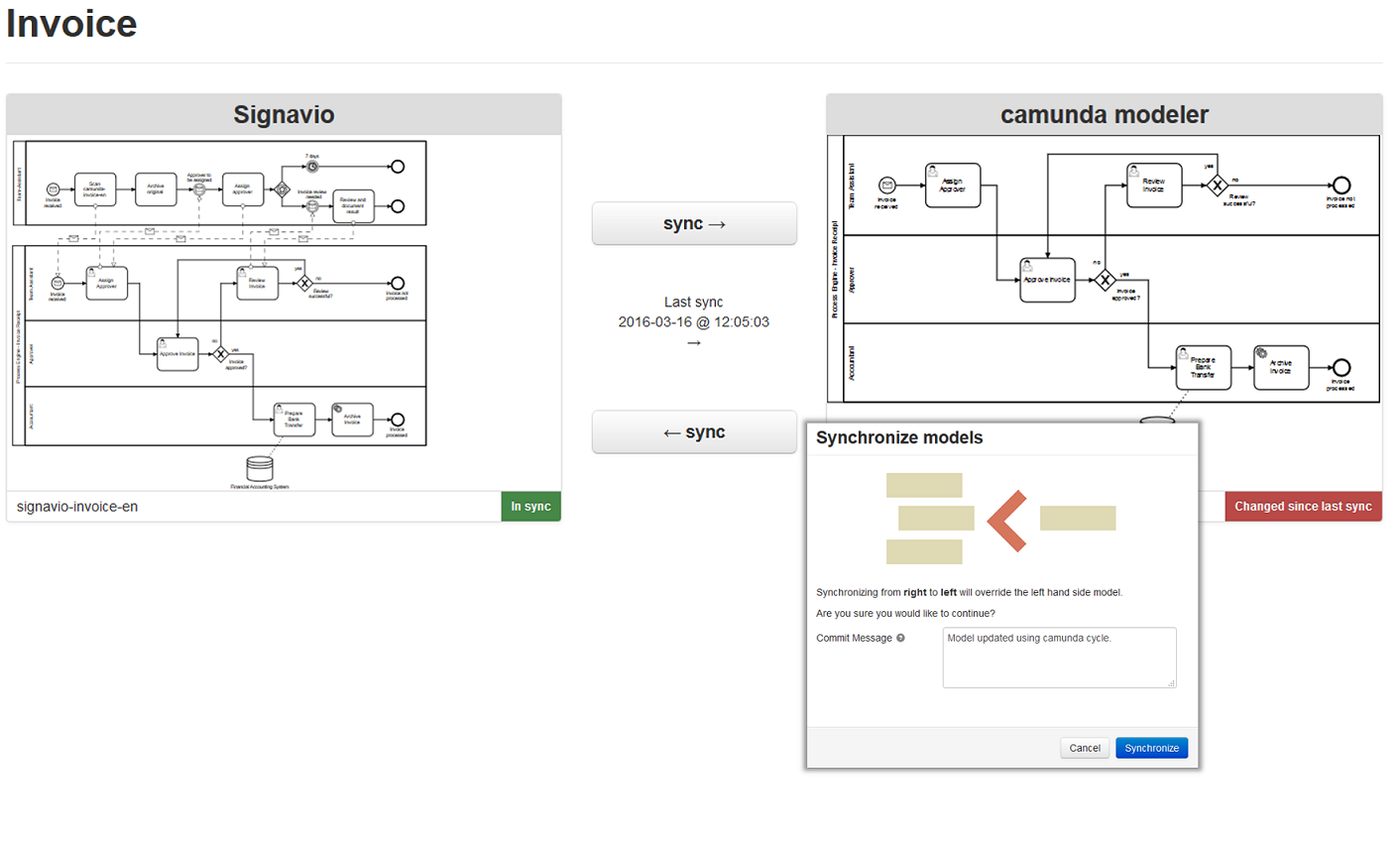

- Camunda Cycle A web application for synchronizing BPMN 2.0 process models between different modeling tools and modelers.

Additional Tools

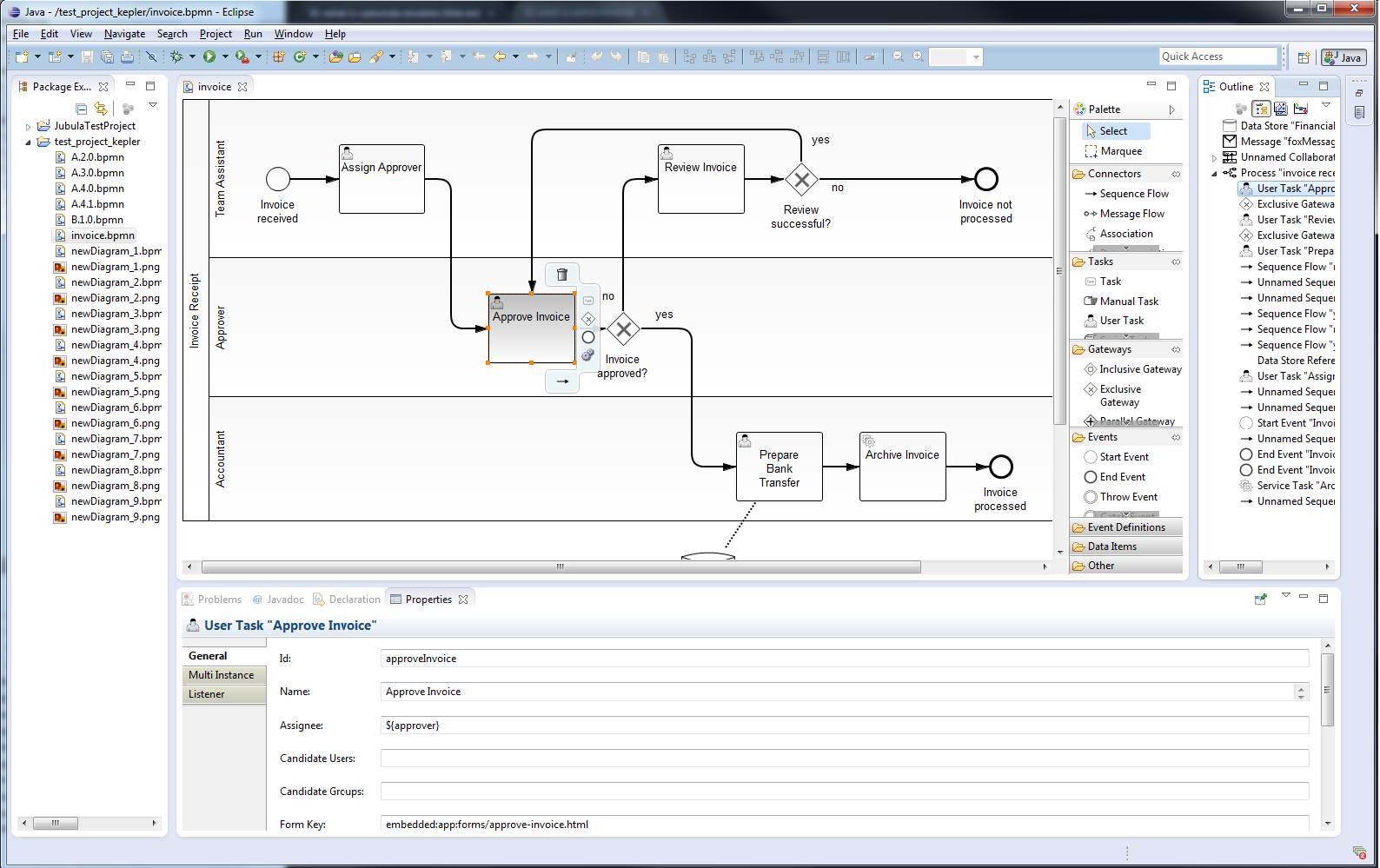

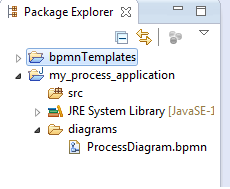

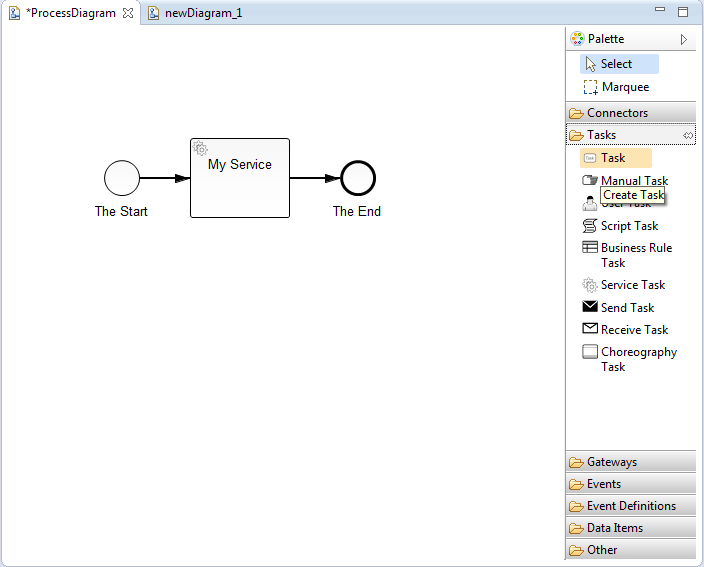

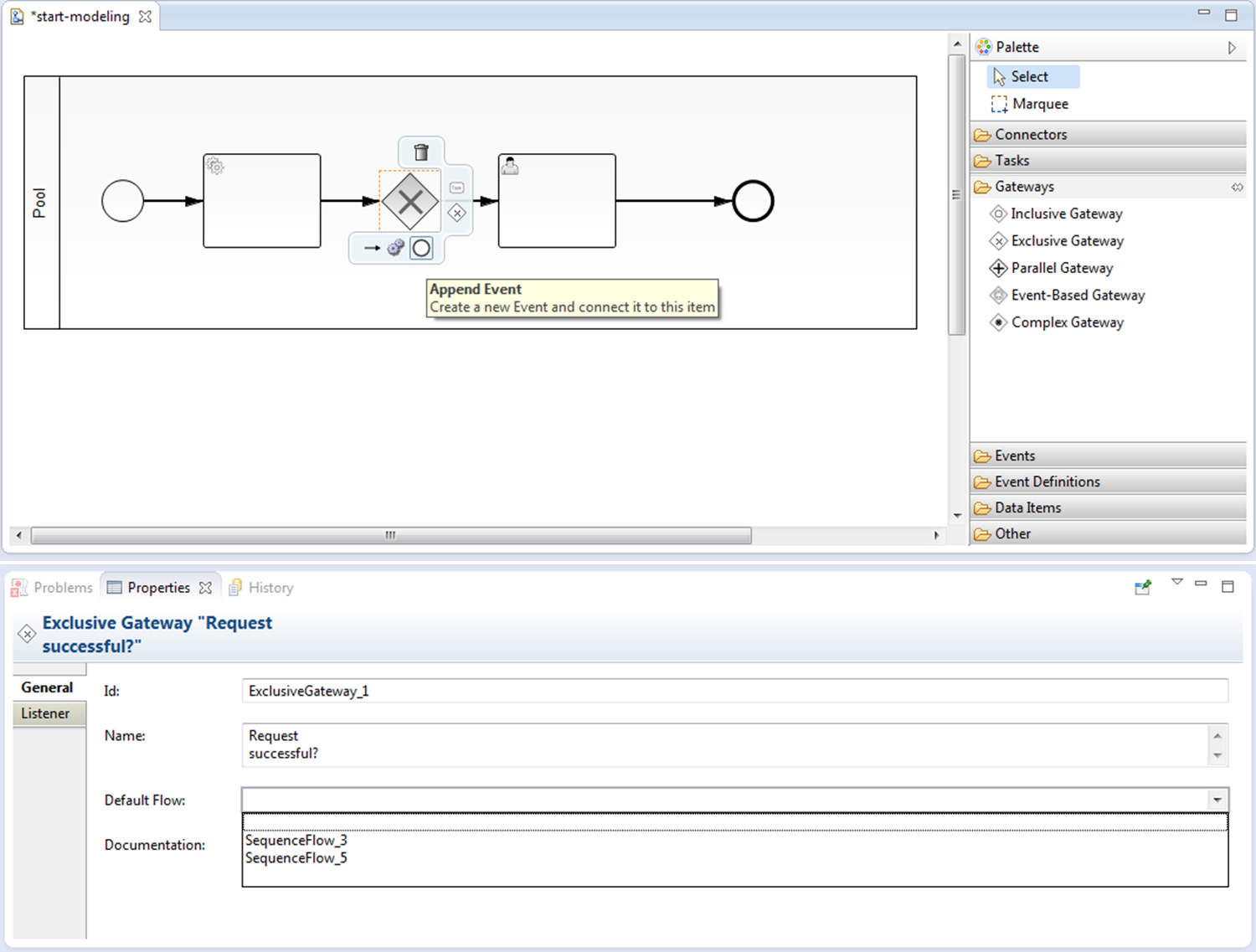

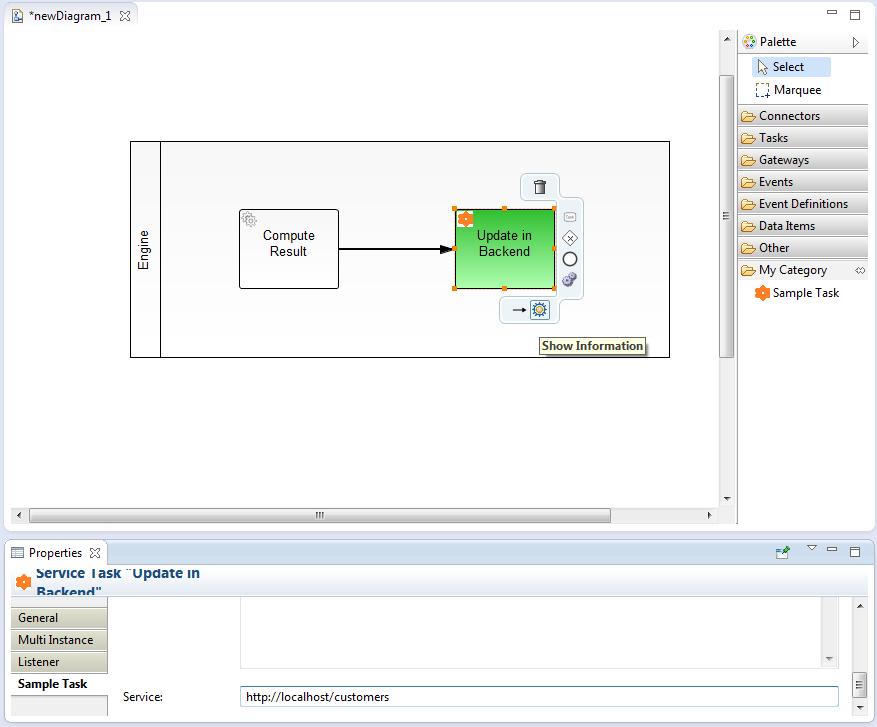

- Camunda Modeler: Eclipse plugin for process modeling.

- bpmn.io: BPMN web modeler which is used in our web applications Cockpit and Tasklist for rendering BPMN 2.0 process models in a browser. Although bpmn.io is still under development, its API is rather stable.

Download

Prerequisites

Before downloading Camunda, make sure you have a JRE (Java Runtime Environment) or better a JDK (Java Development Kit) installed. We recommend using Java 8 unless your container / application server does not support Java 8 (like JBoss Application Server 7).

Download the Runtime

Camunda is a flexible framework which can be used in different contexts. See Architecture Overview for more details. Based on how you want to use camunda, you can choose a different distribution.

Community vs. Enterprise Edition

Camunda provides separate runtime downloads for community users and enterprise subscription customers:

Full Distribution

Download the full distribution if you want to use a shared process engine or if you want to get to know camunda quickly, without any additional setup or installation steps required*.

The full distribution bundles

- Process Engine configured as shared process engine,

- Runtime Web Applications (Tasklist, Cockpit, Admin),

- Rest Api,

- Container / Application Server itself*.

* Note that if you download the full distribution for an open source application server/container, the container itself is included. For example, if you download the tomcat distribution, tomcat itself is included and the camunda binaries (process engine and webapplications) are pre-installed into the container. This is not true for the the Oracle Weblogic and IBM WebSphere downloads. These downloads do not include the application servers themselves.

See Installation Guide for additional details.

Standalone Web Application Distribution

Download the standalone web application distribution if you want to use Cockpit, Tasklist, Admin applications as a self-contained WAR file with an embedded process engine.

The standalone web application distribution bundles

- Process engine configured as embedded process engine,

- Runtime Web Applications (Tasklist, Cockpit, Admin),

- Rest Api,

The standalone web application can be deployed to any of the supported application servers.

The Process engine configuration is based on the Spring Framework. If you want to change the

database configuration, edit the WEB_INF/applicationContext.xml file inside the WAR file.

See Installation Guide for additional details.

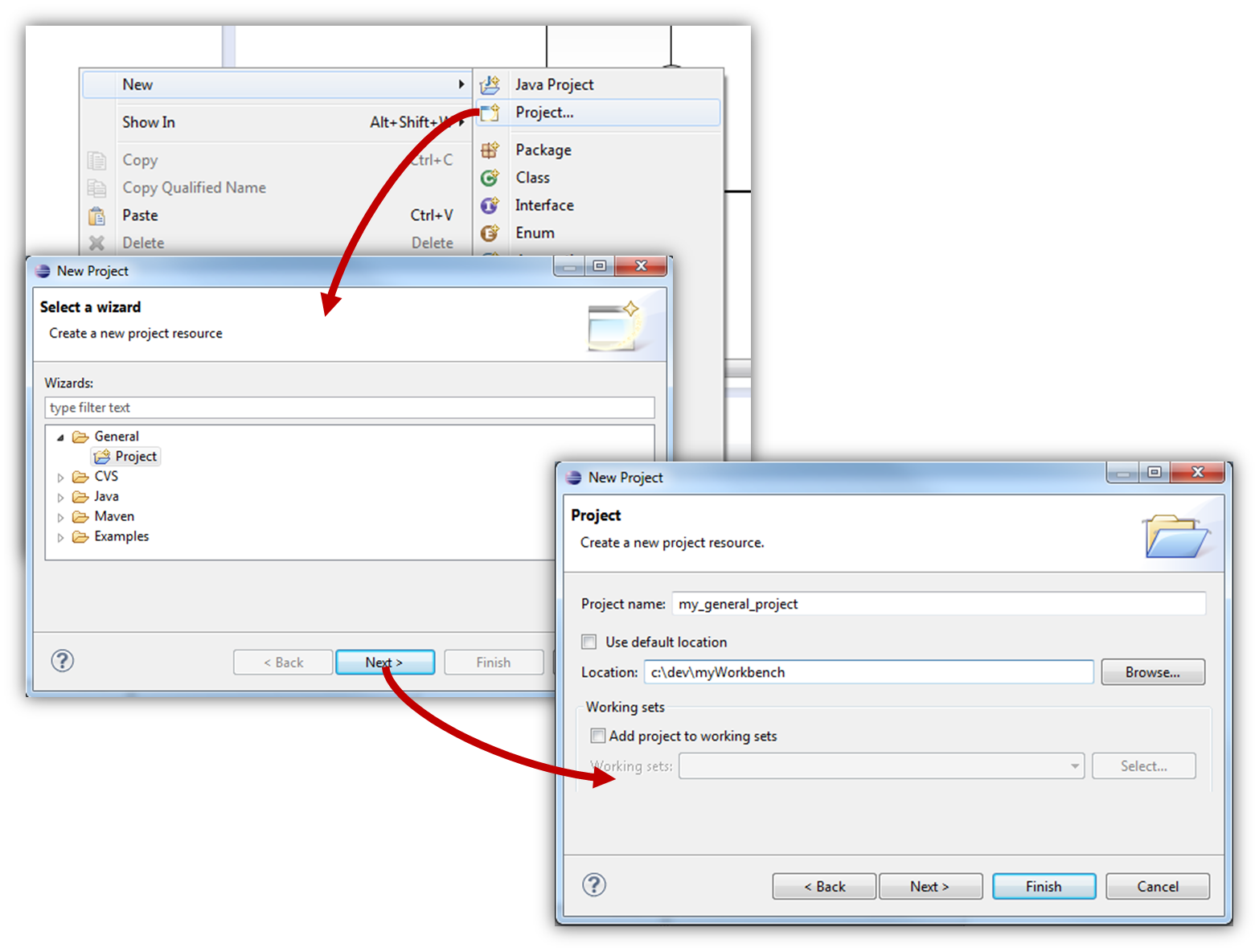

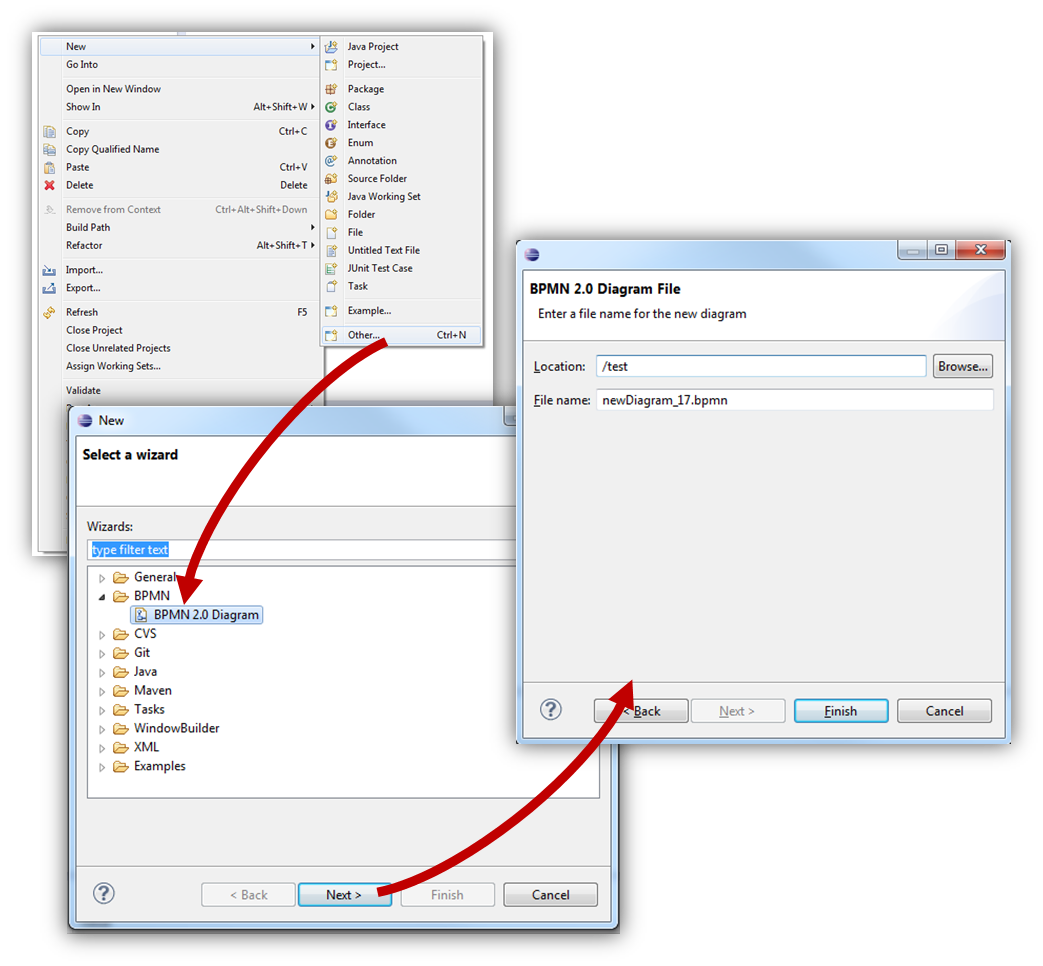

Download Camunda Modeler

Camunda Modeler is an Eclipse based modeling Tool for BPMN 2.0. Camunda Modeler can be downloaded from the community download page.

Download Camunda Cycle

Camunda Cycle is a tool for BPMN 2.0 interchange and Roundtrip. Camunda Cycle can be downloaded from the community download page.

Getting Started

The getting started tutorials can be found at http://docs.camunda.org/guides/getting-started-guides/.

Architecture Overview

Camunda BPM is a Java-based framework. The main components are written in Java and we have a general focus on providing Java developers with the tools they need for designing, implementing and running business processes and workflows on the JVM. Nevertheless, we also want to make the process engine technology available to Non-Java developers. This is why Camunda BPM also provides a REST API which allows you to build applications connecting to a remote process engine.

Camunda BPM can be used both as a standalone process engine server or embedded inside custom Java applications. The embeddability requirement is at the heart of many architecture decisions within Camunda BPM. For instance, we work hard to make the process engine component a lightweight component with as little dependencies on third-party libraries as possible. Furthermore, the embeddability motivates programming model choices such as the capabilities of the process engine to participate in Spring Managed or JTA transactions and the threading model.

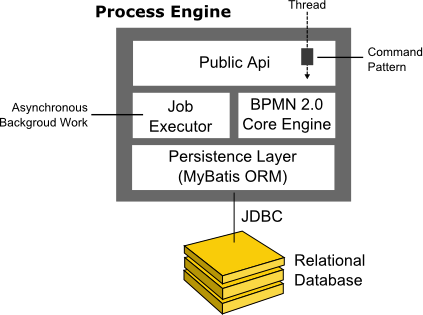

Process Engine Architecture

- Process Engine Public API: Service-oriented API allowing Java applications to interact with the process engine. The different responsibilities of the process engine (i.e., Process Repository, Runtime Process Interaction, Task Management, ...) are separated into individual services. The public API features a command-style access pattern: Threads entering the process engine are routed through a Command Interceptor which is used for setting up Thread Context such as Transactions.

- BPMN 2.0 Core Engine: this is the core of the process engine. It features a lightweight execution engine for graph structures (PVM - Process Virtual Machine), a BPMN 2.0 parser which transforms BPMN 2.0 XML files into Java Objects and a set of BPMN Behavior implementations (providing the implementation for BPMN 2.0 constructs such as Gateways or Service Tasks).

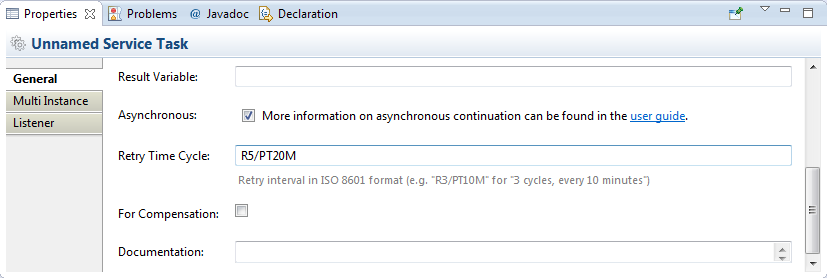

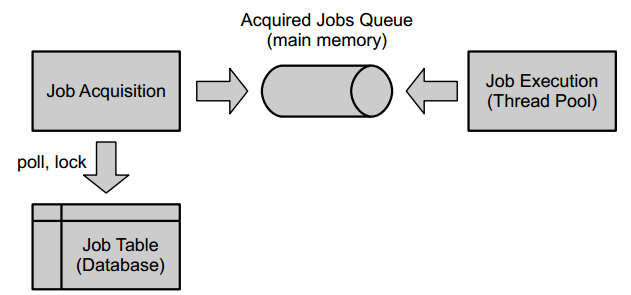

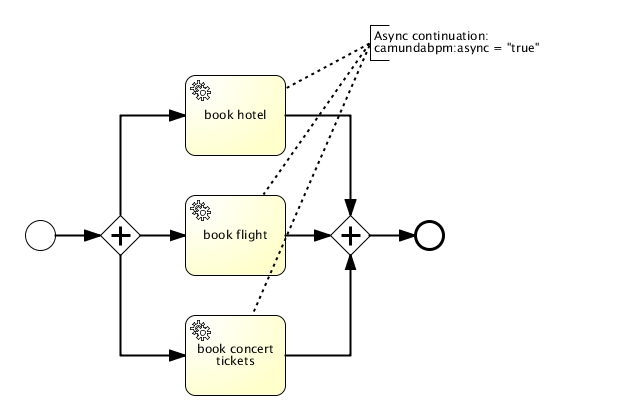

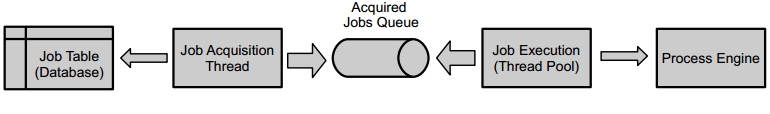

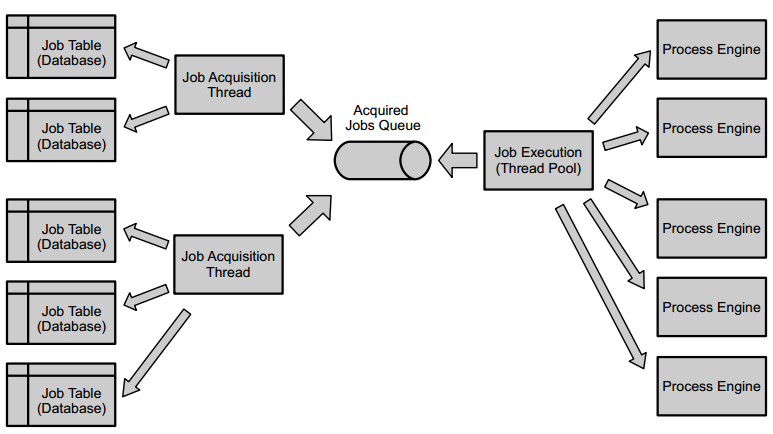

- Job Executor: the Job Executor is responsible for processing asynchronous background work such as Timers or asynchronous continuations in a process.

- The Persistence Layer: the process engine features a persistence layer responsible for persisting process instance state to a relational database. We use the MyBatis mapping engine for object relational mapping.

Required third-party libraries

See section on third-party libraries.

Camunda BPM platform architecture

Camunda BPM platform is a flexible framework which can be deployed in different scenarios. This section provides an overview of the most common deployment scenarios.

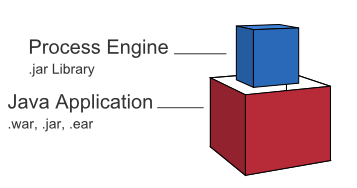

Embedded Process Engine

In this case the process engine is added as an application library to a custom application. This way the process engine can easily be started and stopped with the application lifecycle. It is possible to run multiple embedded process engines on top of a shared database.

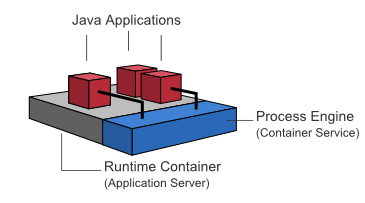

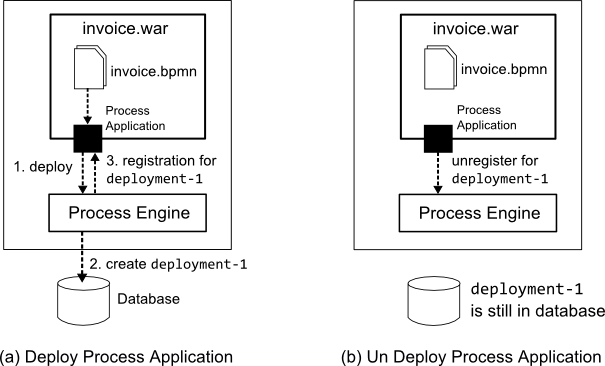

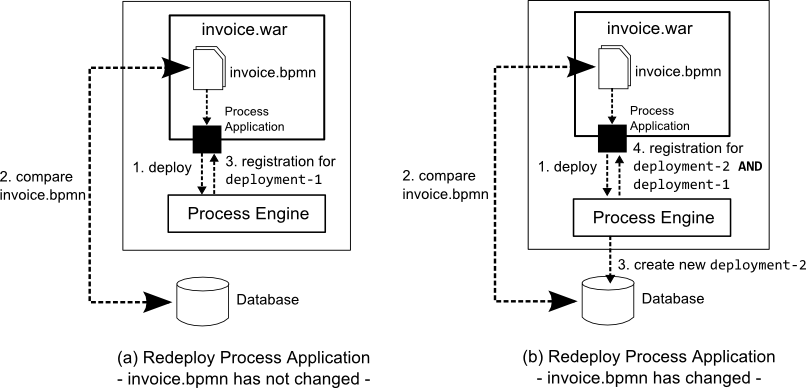

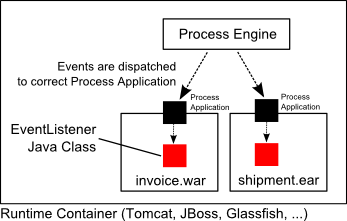

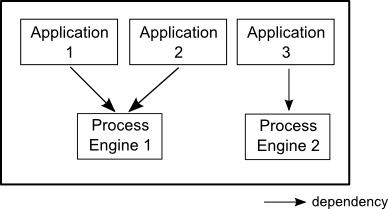

Shared, container-managed Process Engine

In this case the process engine is started inside the runtime container (Servlet Container, Application Server, ...). The process engine is provided as a container service and can be shared by all applications deployed inside the container. The concept can be compared to a JMS Message Queue which is provided by the runtime and can be used by all applications. There is a one-to-one mapping between process deployments and applications: the process engine keeps track of the process definitions deployed by an application and delegates execution to the application in question.

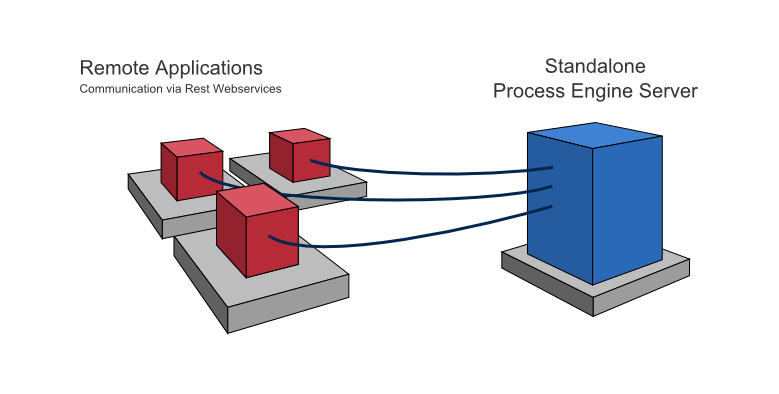

Standalone (Remote) Process Engine Server

In this case the process engine is provided as a network service. Different applications running on the network can interact with the process engine through a remote communication channel. The easiest way for making the process engine accessible remotely is to use the built-in REST API. Different communication channels such as SOAP Webservices or JMS are possible but need to be implemented by users.

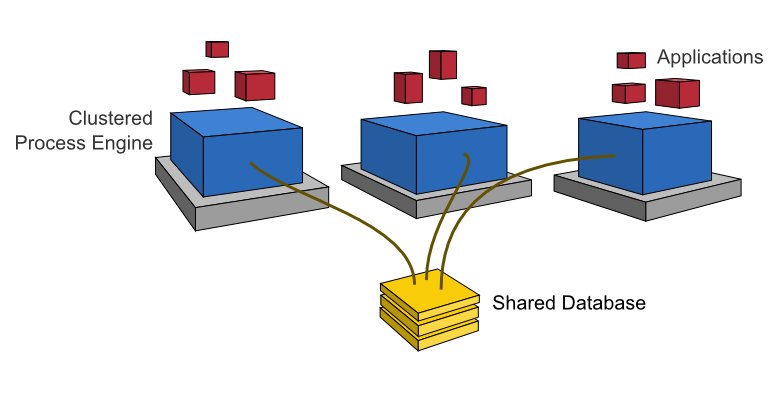

Clustering Model

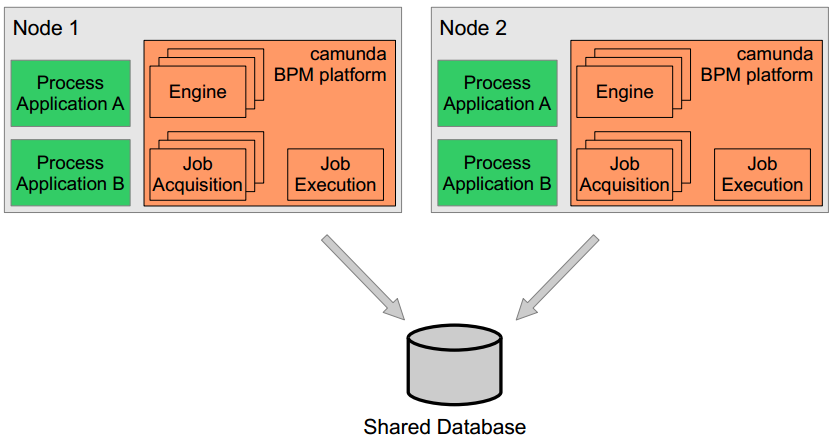

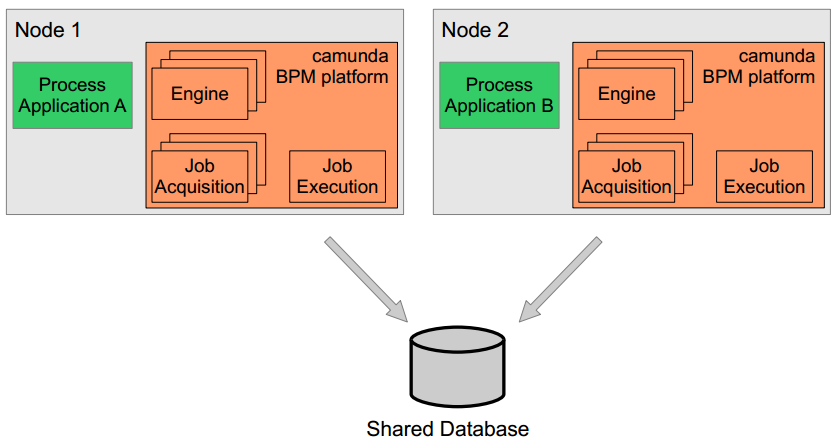

In order to provide scale-up or fail-over capabilities, the process engine can be distributed to different nodes in a cluster. Each process engine instance must then connect to a shared database.

The individual process engine instances do not maintain session state across transactions. Whenever the process engine runs a transaction, the complete state is flushed out to the shared database. This makes it possible to route subsequent requests which do work in the same process instance to different cluster nodes. This model is very simple and easy to understand and imposes limited restrictions when it comes to deploying a cluster installation. As far as the process engine is concerned there is also no difference between setups for scale-up and setups for fail-over (as the process engine keeps no session state between transactions).

The process engine job executor is also clustered and runs on each node. This way, there is no single point of failure as far as the process engine is concerned. The job executor can run in both homogeneous and heterogeneous clusters.

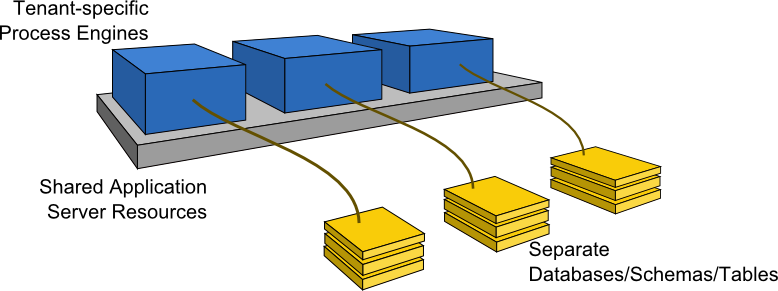

Multi-Tenancy Model

To serve multiple, independent parties with one Camunda installation, the process engine supports multi-tenancy. The following multi tenancy models are supported:

- Table-level data separation by using different database schemas or databases,

- Row-level data separation by using a tenant marker.

Users should choose the model which fits their data separation needs. Camunda's APIs provide access to processes and related data specific to each tenant. More details can be found in the multi-tenancy section.

Web Application Architecture

The Camunda BPM web applications are based on a RESTful architecture.

Frameworks used:

- JAX-RS based Rest API

- AngularJS

- RequireJS

- jQuery

- Twitter Bootstrap

Additional custom frameworks developed by camunda hackers:

- camunda-bpmn.js: Camunda BPMN 2.0 JavaScript libraries

- ngDefine: integration of AngularJS into RequireJS powered applications

- angular-data-depend: toolkit for implementing complex, data heavy AngularJS applications

Supported Environments

You can run the camunda BPM platform in every Java-runnable environment. camunda BPM is supported with our QA infrastructure in the following environments. Here you can find more information about our enterprise support.

Please find the supported environments for version 7.0 and the supported environments for version 7.1 here.

Container / Application Server for runtime components (excluding camunda Cycle)

- Apache Tomcat 6 / 7

- JBoss Application Server 7.2 and JBoss EAP 6.1 / 6.2 / 6.3 / 6.4

- Wildfly 8.1 / 8.2 Application Server

- GlassFish 3.1

- IBM WebSphere Application Server 8.0 / 8.5 (Enterprise Edition only)

- Oracle WebLogic Server 12c (Enterprise Edition only)

Container for camunda Cycle

- Apache Tomcat 7

Databases

- MySQL 5.1 / 5.5 / 5.6

- MariaDB 10.0

- Oracle 10g / 11g / 12c

- IBM DB2 9.7 / 10.1 / 10.5 (excluding IBM z/OS for all versions)

- PostgreSQL 9.1 / 9.3 / 9.4

- Microsoft SQL Server 2008 R2 / 2012 / 2014 (see Configuration Note)

- H2 1.3

Database Clustering & Replication

Clustered or Replicated databases are not supported unless they behave exactly like the corresponding non-clustered / non-replicated configuration. The configuration needs to guarantee READ-COMMITTED isolation level.

The MySQL/MariaDB based Galera Cluster is not supported.

Webbrowser

- Google Chrome latest

- Mozilla Firefox latest

- Internet Explorer 9 / 10 / 11

Java

- Java 6 / 7

- Java 8 (if supported by your application server / container)

Java Runtime

- Sun / Oracle Hot Spot 6 / 7 / 8

- IBM® J9 virtual machine (JVM) 6 / 7 / 8

- OpenJDK 6 / 7

- Oracle JRockit 6 - R28.2.7

Eclipse (for camunda modeler)

- Eclipse Indigo / Juno / Kepler

Community Extensions

Camunda BPM is developed by Camunda as an open source project in collaboration with the community. The "core project" (namely "Camunda BPM platform") is the basis for the Camunda BPM product which is provided by Camunda as a commercial offering. The commercial Camunda BPM product contains additional (non-open source) features and is provided to Camunda BPM customers with service offerings such as enterprise support and bug fix releases.

Camunda supports the community in its effort to build additional community extensions under the Camunda BPM umbrella. Such community extensions are maintained by the community and are not part of the commercial Camunda BPM product. Camunda does not support community extensions as part of its commercial services to enterprise subscription customers.

List of Community Extensions

The following is a list of current (unsupported) community extensions:

- Apache Camel Integration

- AssertJ Testing Library

- Grails Plugin

- Needle Testing Library

- OSGi Integration

- Elastic Search Extension

- PHP SDK

- Tasklist Translations

- Cycle Connectors

- Single Sign On for JBoss

- Camunda BPM Platform Docker Images

- Camunda Process Test Coverage

Building a Community Extension

Do you have a great idea around open source BPM you want to share with the world? Awesome! Camunda will support you in building your own community extension. Have a look at our contribution guidelines to find out how to propose a community project.

Enterprise Extensions

XSLT extension

The XSLT extension depends on the following third-party libraries:

Third-Party Libraries

In the following section all third-party libraries are listed on which components of the camunda platform depend.

Process Engine

The process engine depends on the following third-party libraries:

- MyBatis mapping framework (Apache License 2.0) for object-relational mapping.

- Joda Time (Apache License 2.0) for parsing date formats.

- Java Uuid Generator (JUG) (Apache License 2.0) Id Generator. See documentation on Id-Generators.

Additional optional dependencies:

- Apache Commons Email (Apache License 2.0) for mail task support.

- Spring Framework Spring-Beans (Apache License 2.0) for configuration using camunda.cfg.xml.

- Spring Framework Spring-Core (Apache License 2.0) for configuration using camunda.cfg.xml.

- Spring Framework Spring-ASM (Apache License 3.0) for configuration using camunda.cfg.xml.

- Groovy (Apache License 2.0) for groovy script task support.

- Jython (Python License) for Python script task support.

- JRuby (Ruby License or GPL) for Ruby script task support.

REST API

The REST API depends on the following third-party libraries:

- Jackson JAX-RS provider for JSON content type (Apache License 2.0)

- Apache Commons FileUpload (Apache License 2.0)

Additional optional dependencies:

- RESTEasy (Apache License 2.0) on Apache Tomcat only.

Spring Support

The Spring support depends on the following third-party libraries:

- Apache Commons DBCP (Apache License 2.0)

- Apache Commons Lang (Apache License 2.0)

- Spring Framework Spring-Beans (Apache License 2.0)

- Spring Framework Spring-Core (Apache License 2.0)

- Spring Framework Spring-ASM (Apache License 2.0)

- Spring Framework Spring-Context (Apache License 2.0)

- Spring Framework Spring-JDBC (Apache License 2.0)

- Spring Framework Spring-ORM (Apache License 2.0)

- Spring Framework Spring-TX (Apache License 2.0)

camunda Wepapp

The camunda Webapp (Cockpit, Tasklist, Admin) depends on the following third-party libraries:

- AngularJS (MIT)

- AngularUI (MIT)

- bpmn-js (Custom license)

- domReady (MIT or new BSD)

- Placeholder.js (MIT)

- jQuery (MIT)

- jQuery UI (MIT)

- RequireJS (MIT)

- Snap.svg (Apache License 2.0)

- Twitter Bootstrap (Apache License 2.0)

Most of those libraries are used in the Camunda commons UI library which is aimed to ease the development of browser based user interfaces.

camunda Cycle

Cycle depends on the following third-party libraries:

Javascript dependencies:

Java dependencies:

- Hibernate (GNU Lesser General Public License)

- Apache Commons Codec (Apache License 2.0)

- NekoHTML (Apache License 2.0)

- SAXON (Mozilla Public License 1.0)

- Apache Commons Virtual File System (Apache License 2.0)

- AspectJ runtime (Eclipse Public License 1.0)

- AspectJ weaver (Eclipse Public License 1.0)

- Jasypt (Apache License 2.0)

- SLF4J JCL (MIT)

- Spring Framework Spring-AOP (Apache License 2.0)

- Spring Framework Spring-ORM (Apache License 2.0)

- Spring Framework Spring-Web (Apache License 2.0)

- Thymeleaf (Apache License 2.0)

- Thymeleaf-Spring3 (Apache License 2.0)

- Tigris SVN Client Adapter (Apache License 2.0)

- SVNKit (TMate Open Source License)

- SVNKit JavaHL (TMate Open Source License)

- Gettext Commons (Apache License 2.0)

camunda Modeler

The camunda Modeler depends on the following third-party libraries:

- Eclipse Modeling Framework Project (EMF) (Eclipse Public License 1.0)

- Eclipse OSGi (Eclipse Public License 1.0)

- Eclipse Graphiti (Eclipse Public License 1.0)

- Eclipse Graphical Editing Framework (GEF) (Eclipse Public License 1.0)

- Eclipse XSD (Eclipse Public License 1.0)

- Eclipse UI (Eclipse Public License 1.0)

- Eclipse Core (Eclipse Public License 1.0)

- Eclipse Java development tools (JDT) (Eclipse Public License 1.0)

- Eclipse JFace (Eclipse Public License 1.0)

- Eclipse BPMN2 (Eclipse Public License 1.0)

- Eclipse WST (Eclipse Public License 1.0)

- Apache Xerces (Eclipse Public License 1.0)

- Eclipse WST SSE UI (Eclipse Public License 1.0)

Public API

The camunda platform provides a public API. This section covers the definition of the public API and backwards compatibility for version updates.

Definition of Public API

camunda BPM public API is limited to the following items:

Java API:

camunda-engine: all non implementation Java packages (package name does not containimpl)camunda-engine-spring: all non implementation Java packages (package name does not containimpl)camunda-engine-cdi: all non implementation Java packages (package name does not containimpl)

HTTP API (REST API):

camunda-engine-rest: HTTP interface (set of HTTP requests accepted by the REST API as documented in REST API reference). Java classes are not part of the public API.

Backwards Compatibility for Public API

The camunda versioning scheme follows the MAJOR.MINOR.PATCH pattern put forward by Semantic Versioning. camunda will maintain public API backwards compatibility for MINOR version updates. Example: Update from version 7.1.x to 7.2.x will not break the public API.

Process Engine

Process Engine Bootstrapping

You have a number of options to configure and create a process engine depending on whether you use a application managed or a shared, container managed process engine.

Application Managed Process Engine

You manage the process engine as part of your application. The following ways exist to configure it:

Shared, Container Managed Process Engine

A container of your choice (e.g., Tomcat, JBoss, GlassFish or IBM WebSphere) manages the process engine for you. The configuration is carried out in a container specific way, see Runtime Container Integration for details.

ProcessEngineConfiguration bean

The camunda engine uses the ProcessEngineConfiguration bean to configure and construct a standalone Process Engine. There are multiple subclasses available that can be used to define the process engine configuration. These classes represent different environments, and set defaults accordingly. It's a best practice to select the class that matches (most of) your environment to minimize the number of properties needed to configure the engine. The following classes are currently available:

org.camunda.bpm.engine.impl.cfg.StandaloneProcessEngineConfigurationThe process engine is used in a standalone way. The engine itself will take care of the transactions. By default the database will only be checked when the engine boots (an exception is thrown if there is no database schema or the schema version is incorrect).org.camunda.bpm.engine.impl.cfg.StandaloneInMemProcessEngineConfigurationThis is a convenience class for unit testing purposes. The engine itself will take care of the transactions. An H2 in-memory database is used by default. The database will be created and dropped when the engine boots and shuts down. When using this, probably no additional configuration is needed (except, for example, when using the job executor or mail capabilities).org.camunda.bpm.engine.spring.SpringProcessEngineConfigurationTo be used when the process engine is used in a Spring environment. See the Spring integration section for more information.org.camunda.bpm.engine.impl.cfg.JtaProcessEngineConfigurationTo be used when the engine runs in standalone mode, with JTA transactions.

Bootstrap a Process Engine using Java API

You can configure the process engine programmatically by creating the right ProcessEngineConfiguration object or by using some pre-defined one:

ProcessEngineConfiguration.createStandaloneProcessEngineConfiguration();

ProcessEngineConfiguration.createStandaloneInMemProcessEngineConfiguration();Now you can call the buildProcessEngine() operation to create a Process Engine:

ProcessEngine processEngine = ProcessEngineConfiguration.createStandaloneInMemProcessEngineConfiguration()

.setDatabaseSchemaUpdate(ProcessEngineConfiguration.DB_SCHEMA_UPDATE_FALSE)

.setJdbcUrl("jdbc:h2:mem:my-own-db;DB_CLOSE_DELAY=1000")

.setJobExecutorActivate("true")

.buildProcessEngine();Configure Process Engine using Spring XML

The easiest way to configure your Process Engine is through an XML file called camunda.cfg.xml. Using that you can simply do:

ProcessEngine processEngine = ProcessEngines.getDefaultProcessEngine()The camunda.cfg.xml must contain a bean that has the id processEngineConfiguration, select the best fitting ProcessEngineConfiguration class suiting your needs:

<bean id="processEngineConfiguration" class="org.camunda.bpm.engine.impl.cfg.StandaloneProcessEngineConfiguration">This will look for an camunda.cfg.xml file on the classpath and construct an engine based on the configuration in that file. The following snippet shows an example configuration:

<beans xmlns="http://www.springframework.org/schema/beans"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://www.springframework.org/schema/beans http://www.springframework.org/schema/beans/spring-beans.xsd">

<bean id="processEngineConfiguration" class="org.camunda.bpm.engine.impl.cfg.StandaloneProcessEngineConfiguration">

<property name="jdbcUrl" value="jdbc:h2:mem:camunda;DB_CLOSE_DELAY=1000" />

<property name="jdbcDriver" value="org.h2.Driver" />

<property name="jdbcUsername" value="sa" />

<property name="jdbcPassword" value="" />

<property name="databaseSchemaUpdate" value="true" />

<property name="jobExecutorActivate" value="false" />

<property name="mailServerHost" value="mail.my-corp.com" />

<property name="mailServerPort" value="5025" />

</bean>

</beans>Note that the configuration XML is in fact a Spring configuration. This does not mean that the camunda engine can only be used in a Spring environment! We are simply leveraging the parsing and dependency injection capabilities of Spring internally for building up the engine.

The ProcessEngineConfiguration object can also be created programmatically using the configuration file. It is also possible to use a different bean id:

ProcessEngineConfiguration.createProcessEngineConfigurationFromResourceDefault();

ProcessEngineConfiguration.createProcessEngineConfigurationFromResource(String resource);

ProcessEngineConfiguration.createProcessEngineConfigurationFromResource(String resource, String beanName);

ProcessEngineConfiguration.createProcessEngineConfigurationFromInputStream(InputStream inputStream);

ProcessEngineConfiguration.createProcessEngineConfigurationFromInputStream(InputStream inputStream, String beanName);It is also possible to not use a configuration file and create a configuration based on defaults (see the different supported classes for more information).

ProcessEngineConfiguration.createStandaloneProcessEngineConfiguration();

ProcessEngineConfiguration.createStandaloneInMemProcessEngineConfiguration();All these ProcessEngineConfiguration.createXXX() methods return a ProcessEngineConfiguration that can further be tweaked if needed. After calling the buildProcessEngine() operation, a ProcessEngine is created as explained above.

Configure Process Engine in bpm-platform.xml

The bpm-platform.xml file is used to configure the camunda BPM platform in the following distributions:

- Apache Tomcat

- GlassFish Application Server

- IBM WebSphere Application Server

- Oracle WebLogic Application Server

The <process-engine ... /> xml tag allows you to define a process engine:

<?xml version="1.0" encoding="UTF-8"?>

<bpm-platform xmlns="http://www.camunda.org/schema/1.0/BpmPlatform"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://www.camunda.org/schema/1.0/BpmPlatform http://www.camunda.org/schema/1.0/BpmPlatform">

<job-executor>

<job-acquisition name="default" />

</job-executor>

<process-engine name="default">

<job-acquisition>default</job-acquisition>

<configuration>org.camunda.bpm.engine.impl.cfg.StandaloneProcessEngineConfiguration</configuration>

<datasource>java:jdbc/ProcessEngine</datasource>

<properties>

<property name="history">full</property>

<property name="databaseSchemaUpdate">true</property>

<property name="authorizationEnabled">true</property>

</properties>

</process-engine>

</bpm-platform>See Deployment Descriptor Reference for complete documentation of the syntax of the bpm-platform.xml file.

Configure Process Engine in processes.xml

The process engine can also be configured and bootstrapped using the META-INF/processes.xml file. See Section on processes.xml file for details.

See Deployment Descriptor Reference for complete documentation of the syntax of the processes.xml file.

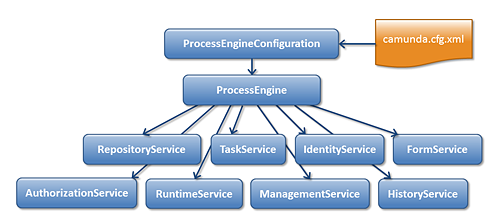

Process Engine API

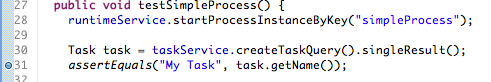

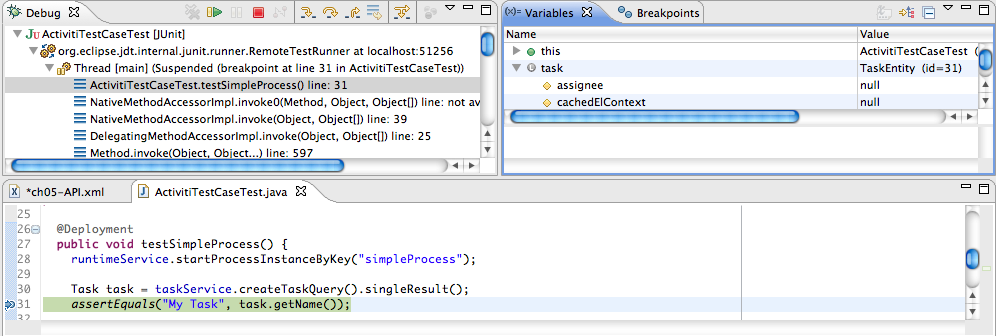

Services API

The Java API is the most common way of interacting with the engine. The central starting point is the ProcessEngine, which can be created in several ways as described in the configuration section. From the ProcessEngine, you can obtain the various services that contain the workflow/BPM methods. ProcessEngine and the services objects are thread safe. So you can keep a reference to 1 of those for a whole server.

ProcessEngine processEngine = ProcessEngines.getDefaultProcessEngine();

RuntimeService runtimeService = processEngine.getRuntimeService();

RepositoryService repositoryService = processEngine.getRepositoryService();

TaskService taskService = processEngine.getTaskService();

ManagementService managementService = processEngine.getManagementService();

IdentityService identityService = processEngine.getIdentityService();

HistoryService historyService = processEngine.getHistoryService();

FormService formService = processEngine.getFormService();ProcessEngines.getDefaultProcessEngine() will initialize and build a process engine the first time it is called and afterwards always returns the same process engine. Proper creation and closing of all process engines can be done with ProcessEngines.init() and ProcessEngines.destroy().

The ProcessEngines class will scan for all camunda.cfg.xml and activiti.cfg.xml files. For all camunda.cfg.xml files, the process engine will be built in the typical way: ProcessEngineConfiguration.createProcessEngineConfigurationFromInputStream(inputStream).buildProcessEngine(). For all activiti.cfg.xml files, the process engine will be built in the Spring way: First the Spring application context is created and then the process engine is obtained from that application context.

All services are stateless. This means that you can easily run Camunda BPM on multiple nodes in a cluster, each going to the same database, without having to worry about which machine actually executed previous calls. Any call to any service is idempotent regardless of where it is executed.

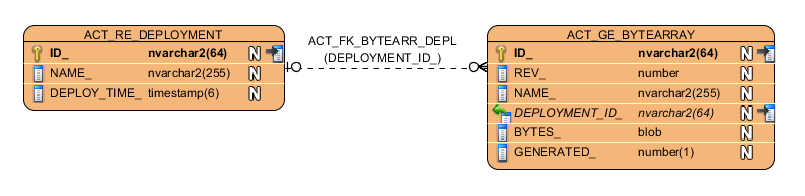

The RepositoryService is probably the first service needed when working with the Camunda engine. This service offers operations for managing and manipulating deployments and process definitions. Without going into much detail here, a process definition is a Java counterpart of BPMN 2.0 process. It is a representation of the structure and behavior of each of the steps of a process. A deployment is the unit of packaging within the engine. A deployment can contain multiple BPMN 2.0 xml files and any other resource. The choice of what is included in one deployment is up to the developer. It can range from a single process BPMN 2.0 xml file to a whole package of processes and relevant resources (for example the deployment 'hr-processes' could contain everything related to hr processes). The RepositoryService allows to deploy such packages. Deploying a deployment means it is uploaded to the engine, where all processes are inspected and parsed before being stored in the database. From that point on, the deployment is known to the system and any process included in the deployment can now be started.

Furthermore, this service allows to

- Query on deployments and process definitions known to the engine.

- Suspend and activate process definitions. Suspending means no further operations can be done on them, while activation is the opposite operation.

- Retrieve various resources such as files contained within the deployment or process diagrams that were automatically generated by the engine.

While the RepositoryService is about static information (i.e., data that doesn't change, or at least not a lot), the RuntimeService is quite the opposite. It deals with starting new process instances of process definitions. As said above, a process definition defines the structure and behavior of the different steps in a process. A process instance is one execution of such a process definition. For each process definition there typically are many instances running at the same time. The RuntimeService is also the service which is used to retrieve and store process variables. This is data specific to the given process instance and can be used by various constructs in the process (e.g., an exclusive gateway often uses process variables to determine which path is chosen to continue the process). The RuntimeService also allows to query on process instances and executions. Executions are a representation of the 'token' concept of BPMN 2.0. Basically an execution is a pointer pointing to where the process instance currently is. Lastly, the RuntimeService is used whenever a process instance is waiting for an external trigger and the process needs to be continued. A process instance can have various wait states and this service contains various operations to 'signal' the instance that the external trigger is received and the process instance can be continued.

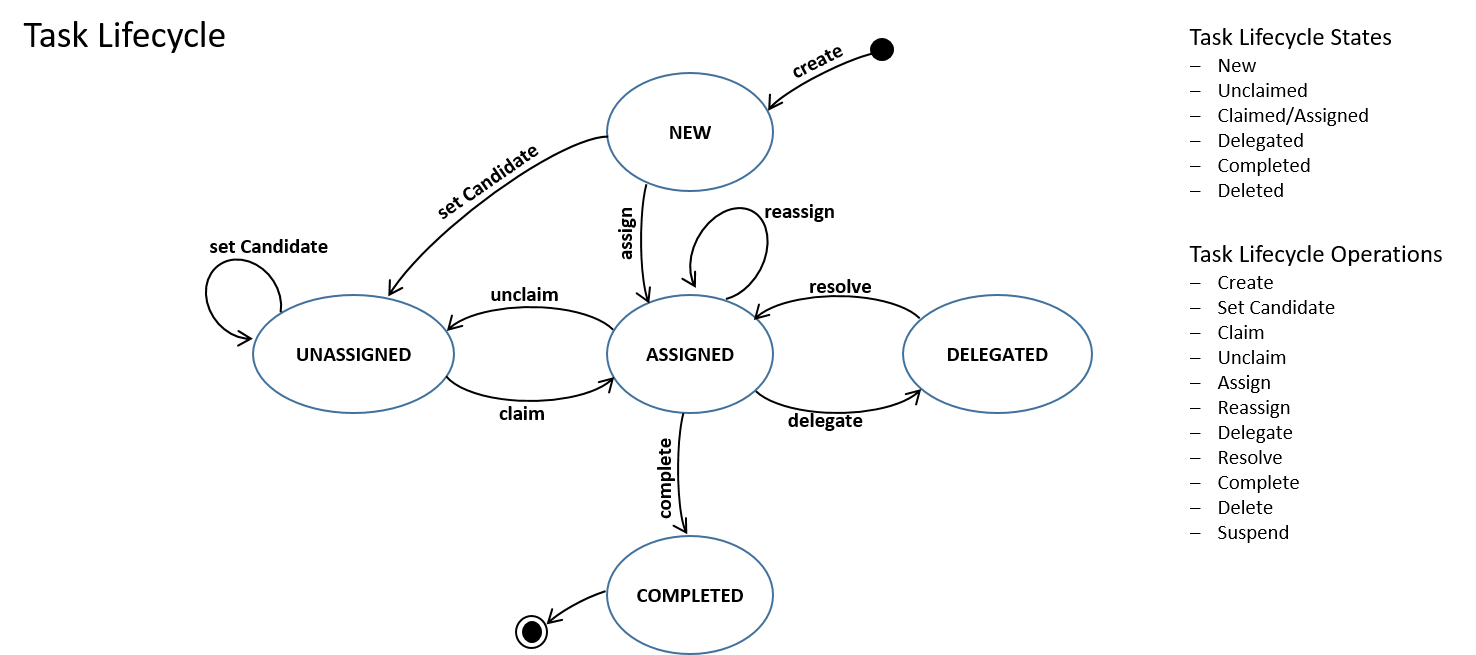

Tasks that need to be performed by actual human users of the system are core to the process engine. Everything around tasks is grouped in the TaskService, such as

- Querying tasks assigned to users or groups.

- Creating new standalone tasks. These are tasks that are not related to a process instances.

- Manipulating to which user a task is assigned or which users are in some way involved with the task.

- Claiming and completing a task. Claiming means that someone decided to be the assignee for the task, meaning that this user will complete the task. Completing means 'doing the work of the tasks'. Typically this is filling in a form of sorts.

The IdentityService is pretty simple. It allows the management (creation, update, deletion, querying, ...) of groups and users. It is important to understand that the core engine actually doesn't do any checking on users at runtime. For example, a task could be assigned to any user, but the engine does not verify if that user is known to the system. This is because the engine can also used in conjunction with services such as LDAP, active directory, etc.

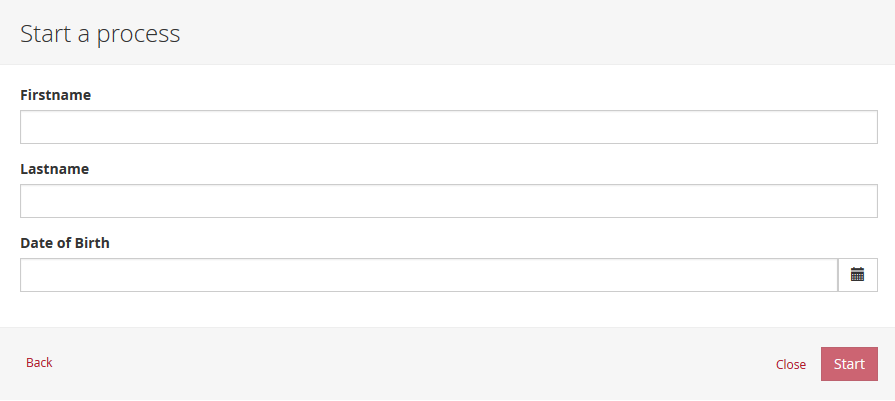

The FormService is an optional service. Meaning that the Camunda engine can perfectly be used without it, without sacrificing any functionality. This service introduces the concept of a start form and a task form. A start form is a form that is shown to the user before the process instance is started, while a task form is the form that is displayed when a user wants to complete a form. You can define these forms in the BPMN 2.0 process definition. This service exposes this data in an easy way to work with. But again, this is optional as forms don't need to be embedded in the process definition.

The HistoryService exposes all historical data gathered by the engine. When executing processes, a lot of data can be kept by the engine (this is configurable) such as process instance start times, who did which tasks, how long it took to complete the tasks, which path was followed in each process instance, etc. This service exposes mainly query capabilities to access this data.

The ManagementService is typically not needed when coding custom applications. It allows to retrieve information about the database tables and table metadata. Furthermore, it exposes query capabilities and management operations for jobs. Jobs are used in the engine for various things such as timers, asynchronous continuations, delayed suspension/activation, etc. Later on, these topics will be discussed in more detail.

For more detailed information on the service operations and the engine API, see the Javadocs.

Query API

To query data from the engine there are multiple possibilities:

- Java Query API: Fluent Java API to query engine entities (like ProcessInstances, Tasks, ...).

- REST Query API: REST API to query engine entities (like ProcessInstances, Tasks, ...).

- Native Queries: Provide own SQL queries to retrieve engine entities (like ProcessInstances, Tasks, ...) if the Query API lacks the possibilities you need (e.g. OR conditions).

- Custom Queries: Use completely customized queries and an own MyBatis mapping to retrieve own value objects or join engine with domain data.

- SQL Queries: Use database SQL queries for use cases like Reporting.

The recommended way is to use one of the Query APIs.

The Java Query API allows to program completely typesafe queries with a fluent API. You can add various conditions to your queries (all of which are applied together as a logical AND) and precisely one ordering. The following code shows an example:

List<Task> tasks = taskService.createTaskQuery()

.taskAssignee("kermit")

.processVariableValueEquals("orderId", "0815")

.orderByDueDate().asc()

.list();You can find more information on this in the Javadocs.

REST Query API

The Java Query API is exposed as REST service as well, see REST documentation for details.

Native Queries

Sometimes you need more powerful queries, e.g. queries using an OR operator or restrictions you can not express using the Query API. For these cases, we introduced native queries, which allow you to write your own SQL queries. The return type is defined by the Query object you use and the data is mapped into the correct objects, e.g. Task, ProcessInstance, Execution, etc. Since the query will be fired at the database you have to use table and column names as they are defined in the database, this requires some knowledge about the internal data structure and it is recommended to use native queries with care. The table names can be retrieved via the API to keep the dependency as small as possible.

List<Task> tasks = taskService.createNativeTaskQuery()

.sql("SELECT count(*) FROM " + managementService.getTableName(Task.class) + " T WHERE T.NAME_ = #{taskName}")

.parameter("taskName", "aOpenTask")

.list();

long count = taskService.createNativeTaskQuery()

.sql("SELECT count(*) FROM " + managementService.getTableName(Task.class) + " T1, "

+ managementService.getTableName(VariableInstanceEntity.class) + " V1 WHERE V1.TASK_ID_ = T1.ID_")

.count();Custom Queries

For performance reasons it might sometimes be desirable not to query the engine objects but some own value or DTO objects collecting data from different tables - maybe including your own domain classes.

SQL Queries

The table layout is pretty straightforward - we focused on making it easy to understand. Hence it is OK to do SQL queries for e.g. reporting use cases. Just make sure that you do not mess up the engine data by updating the tables without exactly knowing what you are doing.

Process Engine Concepts

This section explains some core process engine concepts that are used in both the process engine API and the internal process engine implementation. Understanding these fundamentals makes it easier to use the process engine API.

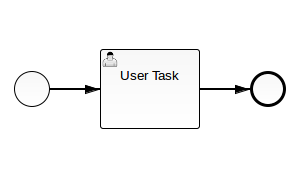

Process Definitions

A process definition defines the structure of a process. You could say that the process definition is the process. Camunda BPM uses BPMN 2.0 as its primary modeling language for modeling process definitions.

Camunda BPM comes with two BPMN 2.0 References:

- The BPMN 2.0 Modeling Reference introduces the fundamentals of BPMN 2.0 and helps you to get started modeling processes. (Make sure to read the Tutorial as well.)

- The BPMN 2.0 Implementation Reference covers the implementation of the individual BPMN 2.0 constructs in Camunda BPM. You should consult this reference if you want to implement and execute BPMN processes.

In Camunda BPM you can deploy processes to the process engine in BPMN 2.0 XML format. The XML files are parsed and transformed into a process definition graph structure. This graph structure is executed by the process engine.

Querying for Process Definitions

You can query for all deployed process definitions using the Java API and the ProcessDefinitionQuery made available through the RepositoryService. Example:

List<ProcessDefinition> processDefinitions = repositoryService.createProcessDefinitionQuery()

.processDefinitionKey("invoice")

.orderByProcessDefinitionVersion()

.asc()

.list();The above query returns all deployed process definitions for the key invoice ordered by their version property.

You can also query for process definitions using the REST API.

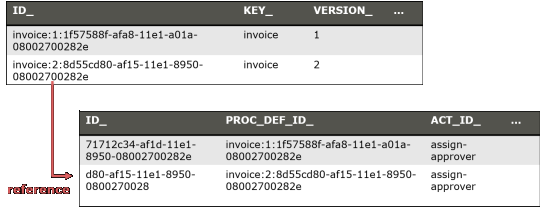

Keys and Versions

The key of a process definition (invoice in the example above) is the logical identifier of the process. It is used throughout the API, most prominently for starting process instances (see section on process instances). The key of a process definition is defined using the id property of the corresponding <process ... > element in the BPMN 2.0 XML file:

<process id="invoice" name="invoice receipt" isExecutable="true">

...

</process>If you deploy multiple processes with the same key, they are treated as individual versions of the same process definition by the process engine.

Suspending Process Definitions

Suspending a process definition disables it temporarily, i.e., it cannot be instantiated while it is suspended. The RuntimeService Java API can be used to suspend a process definition. Similarly, you can activate a process definition to undo this effect.

Process Instances

A process instance is an individual execution of a process definition. The relation of the process instance to the process definition is the same as the relation between Object and Class in Object Oriented Programming (the process instance playing the role of the object and the process definition playing the role of the class in this analogy).

The process engine is responsible for creating process instances and managing their state. If you start a process instance which contains a wait state, for example a user task, the process engine must make sure that the state of the process instance is captured and stored inside a database until the wait state is left (the user task is completed).

Starting a Process Instance

The simplest way to start a process instance is by using the startProcessInstanceByKey(...) method offered by the RuntimeService:

ProcessInstance instance = runtimeService.startProcessInstanceByKey("invoice");

You may optionally pass in a couple of variables:

Map<String, Object> variables = new HashMap<String,Object>();

variables.put("creditor", "Nice Pizza Inc.");

ProcessInstance instance = runtimeService.startProcessInstanceByKey("invoice", variables);

Process variables are available to all tasks in a process instance and are automatically persisted to the database in case the process instance reaches a wait state.

It is also possible to start a process instance using the REST API.

Starting a Process Instance at Any Set of Activities

The startProcessInstanceByKey and startProcessInstanceById methods start the process instance at their default initial activity, which is typically the single blank start event of the process definition. It is also possible to start anywhere in a process instance by using the fluent builder for process instances. The fluent builder can be accessed via the RuntimeService methods createProcessInstanceByKey and createProcessInstanceById.

The following starts a process instance before the activity SendInvoiceReceiptTask and the embedded sub process DeliverPizzaSubProcess:

ProcessInstance instance = runtimeService.createProcessInstanceByKey("invoice")

.startBeforeActivity("SendInvoiceReceiptTask")

.setVariable("creditor", "Nice Pizza Inc.")

.startBeforeActivity("DeliverPizzaSubProcess")

.setVariableLocal("destination", "12 High Street")

.execute();

The fluent builder allows to submit any number of so-called instantiation instructions. When calling execute, the process engine performs these instructions in the order they are specified. In the above example, the engine first starts the task SendInvoiceReceiptTask and executes the process until it reaches a wait state and then starts DeliverPizzaTask and does the same. After these two instructions, the execute call returns.

Querying for Process Instances

You can query for all currently running process instances using the ProcessInstanceQuery offered by the RuntimeService:

runtimeService.createProcessInstanceQuery()

.processDefinitionKey("invoice")

.variableValueEquals("creditor", "Nice Pizza Inc.")

.list();

The above query would select all process instances for the invoice process where the creditor is Nice Pizza Inc..

You can also query for process instances using the REST API.

Interacting with a Process Instance

Once you have performed a query for a particular process instance (or a list of process instances), you may want to interact with it. There are multiple possibilities to interact with a process instance, most prominently:

- Triggering it (make it continue execution):

- Through a Message Event

- Through a Signal Event

- Canceling it:

- Using the

RuntimeService.deleteProcessInstance(...)method.

- Using the

- Starting/Canceling any activity:

- Using the process instance modification feature

If your process uses at least one User Task, you can also interact with the process instance using the TaskService API.

Suspending Process Instances

Suspending a process instance is helpful, if you want ensure that it is not executed any further. For example, if process variables are in an undesired state, you can suspend the instance and change the variables safely.

In detail, suspension means to disallow all actions that change token state (i.e., the activities that are currently executed) of the instance. For example, it is not possible to signal an event or complete a user task for a suspended process instance, as these actions will continue the process instance execution subsequently. Nevertheless, actions like setting or removing variables are still allowed, as they do not change the token state.

Also, when suspending a process instance, all tasks belonging to it will be suspended. Therefore, it will no longer be possible to invoke actions that have effects on the task's lifecycle (i.e., user assignment, task delegation, task completion, ...). However, any actions not touching the lifecycle like setting variables or adding comments will still be allowed.

A process instance can be suspended by using the suspendProcessInstanceById(...) method of the RuntimeService. Similarly it can be reactivated again.

If you would like to suspend all process instances of a given process definition, you can use the method suspendProcessDefinitionById(...) of theRepositoryService and specify the suspendProcessInstances option.

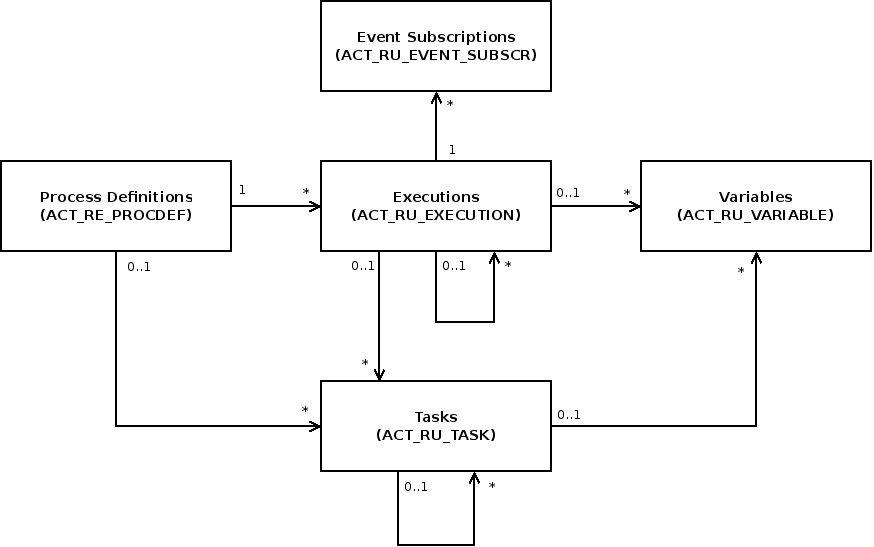

Executions

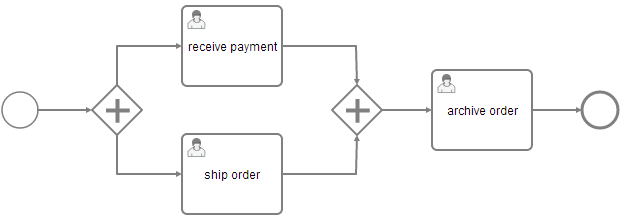

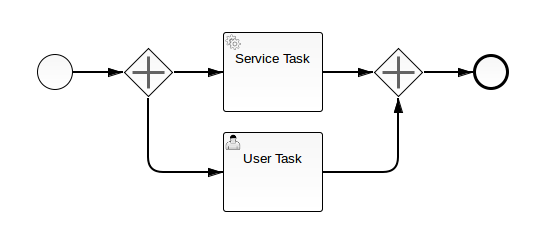

If your process instance contains multiple execution paths (like for instance after a parallel gateway), you must be able to differentiate the currently active paths inside the process instance. In the following example, two user tasks receive payment and ship order can be active at the same time.

Internally, the process engine creates two concurrent executions inside the process instance, one for each concurrent path of execution. Executions are also created for scopes, for example if the process engine reaches a Embedded Sub Process or in case of Multi Instance.

Executions are hierarchical and all executions inside a process instance span a tree, the process instance being the root-node in the tree. Note: the process instance itself is an execution. Executions are variable scopes, meaning that dynamic data can be associated with them.

Querying for executions

You can query for executions using the ExecutionQuery offered by the RuntimeService:

runtimeService.createExecutionQuery()

.processInstanceId(someId)

.list();

The above query returns all executions for a given process instance.

You can also query for executions using the REST API.

Activity Instances

The activity instance concept is similar to the execution concept but takes a different perspective. While an execution can be imagined as a token moving through the process, an activity instance represents an individual instance of an activity (task, subprocess, ...). The concept of the activity instance is thus more state-oriented.

Activity instances also span a tree, following the scope structure provided by BPMN 2.0. Activities that are "on the same level of subprocess" (i.e., part of the same scope, contained in the same subprocess) will have their activity instances at the same level in the tree

Examples:

- Process with two parallel user tasks after parallel Gateway: in the activity instance tree you will see two activity instances below the root instance, one for each user task.

- Process with two parallel Multi Instance user tasks after parallel Gateway: in the activity instance tree, all instances of both user tasks will be listed below the root activity instance. Reason: all activity instances are at the same level of subprocess.

- Usertask inside embedded subprocess: the activity instance tree will have 3 levels: the root instance representing the process instance itself, below it an activity instance representing the instance of the embedded subprocess, and below this one, the activity instance representing the usertask.

Retrieving an Activity Instance

Currently activity instances can only be retrieved for a process instance:

ActivityInstance rootActivityInstance = runtimeService.getActivityInstance(processInstance.getProcessInstanceId());

You can retrieve the activity instance tree using the REST API as well.

Identity & Uniqueness

Each activity instance is assigned a unique ID. The ID is persistent, if you invoke this method multiple times, the same activity instance IDs will be returned for the same activity instances. (However, there might be different executions assigned, see below)

Relation to Executions

The Execution concept in the process engine is not completely aligned with the activity instance concept because the execution tree is generally not aligned with the activity / scope concept in BPMN. In general, there is a n-1 relationship between Executions and ActivityInstances, i.e., at a given point in time, an activity instance can be linked to multiple executions. In addition, it is not guaranteed that the same execution that started a given activity instance will also end it. The process engine performs several internal optimizations concerning the compacting of the execution tree which might lead to executions being reordered and pruned. This can lead to situations where a given execution starts an activity instance but another execution ends it. Another special case is the process instance: if the process instance is executing a non-scope activity (for example a user task) below the process definition scope, it will be referenced by both the root activity instance and the user task activity instance.

Note: If you need to interpret the state of a process instance in terms of a BPMN process model, it is usually easier to use the activity instance tree as opposed to the execution tree.

Jobs and Job Definitions

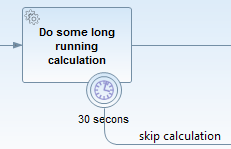

The Camunda process engine includes a component named the Job Executor. The Job Executor is a scheduling component, responsible for performing asynchronous background work. Consider the example of a Timer Event: whenever the process engine reaches the timer event, it will stop execution, persist the current state to the database and create a job to resume execution in the future. A job has a due date which is calculated using the timer expression provided in the BPMN XML.

When a process is deployed, the process engine creates a Job Definition for each activity in the process which will create jobs at runtime. This allows you to query information about timers and asynchronous continuations in your processes.

Querying for jobs

Using the management service, you can query for jobs. The following selects all jobs which are due after a certain date:

managementService.createJobQuery()

.duedateHigherThan(someDate)

.list()It is possible to query for jobs using the REST API.

Querying for Job Definitions

Using the management service, you can also query for job definitions. The following selects all job definitions from a specific process definition:

managementService.createJobDefinitionQuery()

.processDefinitionKey("orderProcess")

.list()The result will contain information about all timers and asynchronous continuations in the order process.

It is also possible to query for job definitions using the REST API.

Suspending and Activating Job Execution

Job suspension prevents jobs from being executed. Suspension of job execution can be controlled on different levels:

- Job Instance Level: individual Jobs can be suspended either directly through the

managementService.suspendJob(...)API or transitively when suspending a Process Instance or a Job Definition. - Job Definition Level: all instances of a certain Timer or Activity can be suspended.

Job suspension by Job Definition allows you to suspend all instances of a certain timer or an asynchronous continuation. Intuitively, this allows you to suspend a certain activity in a process in a way that all process instances will advance until they have reached this activity and then not continue since the activity is suspended.

Let's assume there is a process deployed with key orderProcess, which contains a service task named processPayment. The service task has an asynchronous continuation configured which causes it to be executed by the job executor. The following example shows how you can prevent the processPayment service from being executed:

List<JobDefinition> jobDefinitions = managementService.createJobDefinitionQuery()

.processDefinitionKey("orderProcess")

.activityIdIn("processPayment")

.list();

for (JobDefinition jobDefinition : jobDefinitions) {

managementService.suspendJobDefinitionById(jobDefinition.getId(), true);

}Process Variables

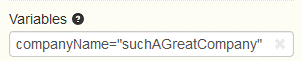

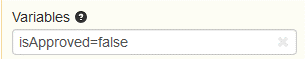

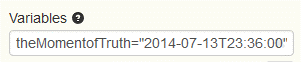

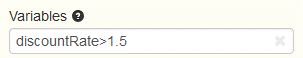

This section describes the concepts of variables in processes. Variables can be used to add data to process runtime state or, more particular, variable scopes. Various API methods that change the state of these entities allow updating of the attached variables. In general, a variable consists of a name and a value. The name is used for identification across process constructs. For example, if one activity sets a variable named var, a follow-up activity can access it by using this name. The value of a variable is a Java object.

Variable Scopes and Variable Visibility

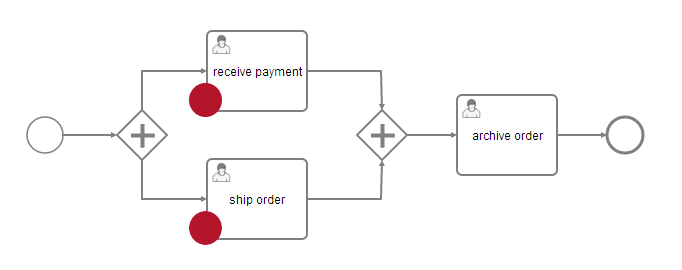

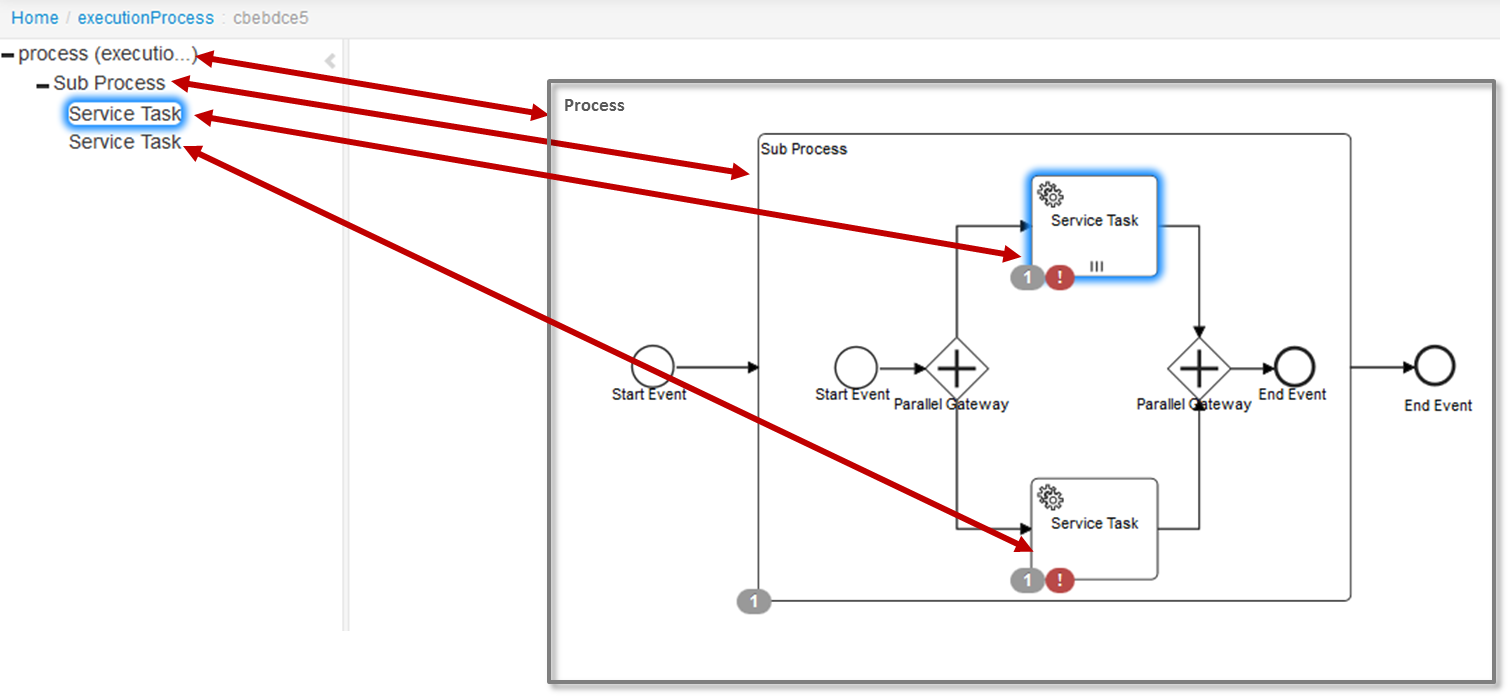

All entities that can have variables are called variable scopes. These are executions (which include process instances) and tasks. As described in the Concepts section, the runtime state of a process instance is represented by a tree of executions. Consider the following process model where the red dots mark active tasks:

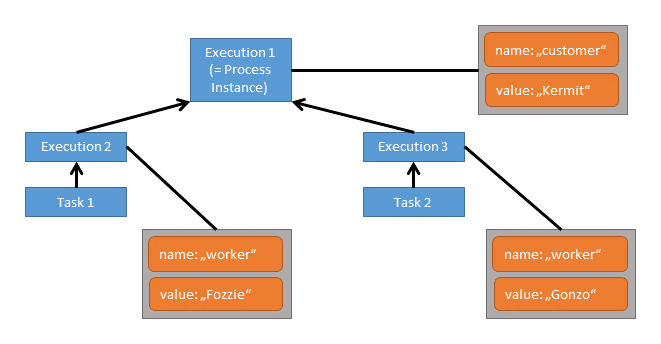

The runtime structure of this process is as follows:

There is a process instance with two child executions, each of which has created a task. All these five entities are variable scopes and the arrows mark a parent-child relationship. A variable that is defined on a parent scope is accessible in every child scope unless a child scope defines a variable of the same name. The other way around, child variables are not accessible from a parent scope. Variables that are directly attached to the scope in question are called local variables. Consider the following assignment of variables to scopes:

In this case, when working on Task 1 the variables worker and customer are accessible. Note that due to the structure of scopes, the variable worker can be defined twice, so that Task 1 accesses a different worker variable than Task 2. However, both share the variable customer which means that if that variable is updated by one of the tasks, this change is also visible to the other.

Both tasks can access two variables each while none of these is a local variable. All three executions have one local variable each.

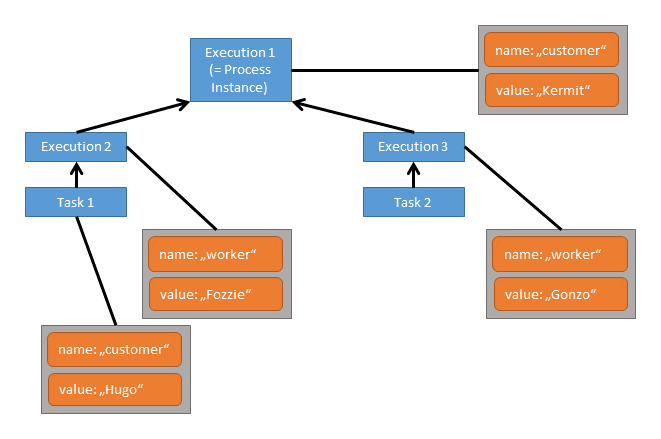

Now let's say, we set a local variable customer on Task 1:

While two variables named customer and worker can still be accessed from Task 1, the customer variable on Execution 1 is hidden, so the accessible customer variable is the local variable of Task 1.

In general, variables are accessible in the following cases:

- Instantiating processes

- Delivering messages

- Task lifecycle transitions, such as completion or resolution

- Setting/getting variables from outside

- Setting/getting variables in a Delegate

- Expressions in the process model

- Scripts in the process model

- (Historic) Variable queries

Setting and Retrieving Variables - Overview

To set and retrieve variables, the process engine offers a Java API that allows setting of variables from Java objects and retrieving them in the same form. Internally, the engine persists variables to the database and therefore applies serialization. For most applications, this is a detail of no concern. However, sometimes, when working with custom Java classes, the serialized value of a variable is of interest. Imagine the case of a monitoring application that manages many process applications. It is decoupled from those applications' classes and therefore cannot access custom variables in their Java representation. For these cases, the process engine offers a way to retrieve and manipulate the serialized value. This boils down to two APIs:

- Java Object Value API: Variables are represented as Java objects. These objects can be directly set as values and retrieved in the same form. This is the more simple API and is the recommended way when implementing code as part of a process application.

- Typed Value API: Variable values are wrapped in so-called typed values that are used to set and retrieve variables. A typed value offers access to meta data such as the way the engine has serialized the variable and, depending on the type, the serialized representation of the variable.

As an example, the following code retrieves and sets two integer variables using both APIs:

// Java Object API: Get Variable

Integer val1 = (Integer) execution.getVariable("val1");

// Typed Value API: Get Variable

IntegerValue typedVal2 = execution.getVariableTyped("val2");

Integer val2 = typedVal2.getValue();

Integer diff = val1 - val2;

// Java Object API: Set Variable

execution.setVariable("diff", diff);

// Typed Value API: Set Variable

IntegerValue typedDiff = Variables.integerValue(diff);

execution.setVariable("diff", typedDiff);The specifics of this code are described in more detail in the sections on the Java Object Value API and the Typed Value API.

Supported Variable Values

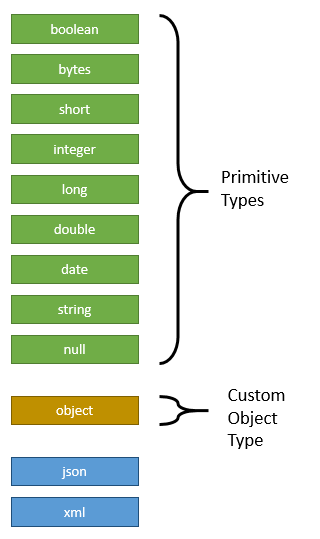

The process engine supports the following variable value types:

Depending on the actual value of a variable, a different type is assigned. Out of the available types, there are nine primitive value types, meaning that they correspond to standard JDK classes:

boolean: Instances ofjava.lang.Booleanbytes: Instances ofbyte[]short: Instances ofjava.lang.Shortinteger: Instances ofjava.lang.Integerlong: Instances ofjava.lang.Longdouble: Instances ofjava.lang.Doubledate: Instances ofjava.util.Datestring: Instances ofjava.lang.Stringnull:nullreferences

Furthermore, the value type object represents custom Java objects. When such a variable is persisted, its value is serialized according to a serialization procedure. These procedures are configurable and exchangeable.

If you prefer to store your variables in XML or JSON format you can use the types xml or json. Both are special because the engine provides constants for them in the enumeration SerializationDataFormats but doesn't provide any serializers out of the box. You could either write your own TypedValueSerializers for those types and register them in your process engine configuration or you could use camunda Spin, which provides support for the two types (see here).

Object Value Serialization

When an object value is passed to the process engine, a serialization format can be specified to tell the process engine to store the value in a specific format. Based on this format, the engine looks up a serializer. The serializer is able to serialize a Java object to the specified format and deserialize it from a representation in that format. That means, there may be different serializers for different formats and it is possible to implement custom serializers in order to store custom objects in a specific format.

The process engine ships one built-in object serializer for the format application/x-java-serialized-object. It is able to serialize Java objects that implement the interface java.io.Serializable and applies standard Java object serialization.

The desired serialization format can be specified when setting a variable using the Typed Value API:

CustomerData customerData = new CustomerData();

ObjectValue customerDataValue = Variables.objectValue(customerData)

.serializationDataFormat(Variables.SerializationDataFormats.JAVA)

.create();

execution.setVariable("someVariable", customerDataValue); On top of that, the process engine configuration has an option defaultSerializationFormat that is used when no specific format is requested. This option defaults to application/x-java-serialized-object.

Using Custom Objects in Task Forms:

Note that the built-in serializer converts objects to byte streams that can only be interpreted with the Java class at hand. When implementing task forms that are based on complex objects, a text-based serialization format should be used since the tasklist cannot interpret these byte streams. See the box Serializing Objects to XML and JSON for details on how to integrate serialization formats like XML and JSON.

Serializing Objects to XML and JSON:

The camunda Spin plugin provides serializers that are capable of serializing object values to XML and JSON. They can be used when it is desired that the serialized objects values can be interpreted by humans or when the serialized value should be meaningful without having the corresponding Java class.

When using a pre-built camunda distribution, camunda Spin is already preconfigured and you can try these formats without further configuration.

Java Object API

The most convenient way of working with process variables from Java is to use their Java object representation. Wherever the process engine offers variable access, process variables can be accessed in this representation given that for custom objects the engine is aware of the involved classes. For example, the following code sets and retrieves a variable for a given process instance:

com.example.Order order = new com.example.Order();

runtimeService.setVariable(execution.getId(), "order", order);

com.example.Order retrievedOrder = (com.example.Order) runtimeService.getVariable(execution.getId(), "order");Note that this code sets a variable at the highest possible point in the hierarchy of variable scopes. This means, if the variable is already present (whether in this execution or any of its parent scopes), it is updated. If the variable is not yet present, it is created in the highest scope, i.e. the process instance. If a variable is supposed to be set exactly on the provided execution, the local methods can be used. For example:

com.example.Order order = new com.example.Order();

runtimeService.setVariableLocal(execution.getId(), "order", order);

com.example.Order retrievedOrder = (com.example.Order) runtimeService.getVariable(execution.getId(), "order");

com.example.Order retrievedOrder = (com.example.Order) runtimeService.getVariableLocal(execution.getId(), "order");

// both methods return the variableWhenever a variable is set in its Java representation, the process engine automatically determines a suitable value serializer or raises an exception if the provided value cannot be serialized.

Typed Value API

In cases in which it is important to access a variable's serialized representation or in which the engine has to be hinted to serialize a value in a certain format, the typed-value-based API can be used. In comparison to the Java-Object-based API, it wraps a variable value in a so-called Typed Value. Such a typed value allows richer representation of variable values.

In order to easily construct typed values, Camunda BPM offers the class org.camunda.bpm.engine.variable.Variables. This class contains static methods that allow creation of single typed values as well as creation of a map of typed values in a fluent way.

Primitive Values

The following code sets a single String variable by specifying it as a typed value:

StringValue typedStringValue = Variables.stringValue("a string value");

runtimeService.setVariable(execution.getId(), "stringVariable", typedStringValue);

StringValue retrievedTypedStringValue = runtimeService.getVariableTyped(execution.getId(), "order");

String stringValue = retrievedTypedStringValue.getValue(); // equals "a string value"Note that with this API, there is one more level of abstraction around the variable value. Thus, in order to access the true value, it is necessary to unwrap the actual value.

Object Values

Of course, for plain String values, the Java-Object-based API is more concise. Let us therefore consider an example with a custom object value:

com.example.Order order = new com.example.Order();

ObjectValue typedObjectValue = Variables.objectValue(order).create();

runtimeService.setVariableLocal(execution.getId(), "order", typedObjectValue);

ObjectValue retrievedTypedObjectValue = runtimeService.getVariableTyped(execution.getId(), "order");

com.example.Order retrievedOrder = (com.example.Order) retrievedTypedObjectValue.getValue();This again is equivalent to the Java-Object-based API. However, it is now possible to tell the engine which serialization format to use when persisting the value. For example, ObjectValue typedObjectValue = Variables.objectValue(order).serializationDataFormat(Variables.SerializationDataFormats.JAVA).create(); creates a value that gets serialized by the engine's built-in Java object serializer. Also, a retrieved ObjectValue instance provides additional variable details:

// returns true

boolean isDeserialized = retrievedTypedObjectValue.isDeserialized();

// returns the format used by the engine to serialize the value into the database

String serializationDataFormat = retrievedTypedObjectValue.getSerializationDateFormat();

// returns the serialized representation of the variable; the actual value depends on the serialization format used

String serializedValue = retrievedTypedObjectValue.getValueSerialized();

// returns the class com.example.Order

Class<com.example.Order> valueClass = retrievedTypedObjectValue.getObjectType();

// returns the String "com.example.Order"

String valueClassName = retrievedTypedObjectValue.getObjectTypeName();The serialization details are useful when the calling application does not possess the classes of the actual variable value (i.e. com.example.Order is not known). In these cases, runtimeService.getVariableTyped(execution.getId(), "order") will raise an exception since it immediately tries to deserialize the variable value. In such a case, the invocation runtimeService.getVariableTyped(execution.getId(), "order", false) can be used. The additional boolean parameter tells the process engine to not attempt deserialization. In this case, the invocation isDeserialized() will return false and invocations like getValue() and getObjectType() will raise exceptions. Calling getValueSerialized() and getObjectTypeName() is a way to access the variable nonetheless.

Similarly, it is possible to set a variable from its serialized representation:

String serializedOrder = "...";

ObjectValue serializedValue =

Variables

.serializedObjectValue(serializedOrder)

.serializationDataFormat(Variables.SerializationDataFormats.JAVA)

.objectTypeName("com.example.Order")

.create();

runtimeService.setVariableLocal(execution.getId(), "order", serializedValue);

ObjectValue retrievedTypedObjectValue = runtimeService.getVariableTyped(execution.getId(), "order");

com.example.Order retrievedOrder = (com.example.Order) retrievedTypedObjectValue.getValue();Be Aware of Inconsistent Variable State:

When setting a serialized variable value, no checking is done whether the structure of the serialized value is compatible with the class the variable value is supposed to be an instance of. When setting the variable from the above example, the supplied serialized value is not validated against the structure of com.example.Order. Thus, an invalid variable value will only be detected when runtimeService#getVariableTyped is called.

Setting Multiple Typed Values

Similar to the Java-Object-based API, it is also possible to set multiple typed values in one API call. The Variables class offers a fluent API to construct a map of typed values:

com.example.Order order = new com.example.Order();

VariableMap variables =

Variables.create()

.putValueTyped("order", Variables.objectValue(order))

.putValueTyped("string", Variables.stringValue("a string value"));

runtimeService.setVariablesLocal(execution.getId(), "order", variables);Interchangeability of APIs

Both APIs offer different views on the same entities and can therefore be combined as is desired. For example, a variable that is set using the Java-Object-based API can be retrieved as a typed value and vice versa. As the class VariableMap implements the Map interface, it is also possible to put plain Java objects as well as typed values into this map.

Which API should you use? The one that fits your purpose best. When you are certain that you always have access to the involved value classes, such as when implementing code in a process application like a JavaDelegate, then the Java-Object-based API is easier to use. When you need to access value-specific meta data such as serialization formats, then the Typed-Value-based API is the way to go.

Input/Output Variable Mapping

To improve the reusability of source code and business logic, camunda BPM offers input/output mapping of process variables. This can be used for tasks, events and subprocesses.

In order to use the variable mapping, the camunda extension element inputOutput has to be added

to the element. It can contain multiple inputParameter and outputParameter elements that

specify which variables should be mapped. The name attribute of an inputParameter denotes

the variable name inside the activity (a local variable to be created), whereas the name attribute of an outputParameter

denotes the variable name outside of the activity.

The content of an input/outputParameter specifies the value that is mapped to the corresponding

variable. It can be a simple constant string or an expression. An empty body sets the variable

to the value null.

<camunda:inputOutput>

<camunda:inputParameter name="x">foo</camunda:inputParameter>

<camunda:inputParameter name="willBeNull"/>

<camunda:outputParameter name="y">${x}</camunda:outputParameter>

<camunda:outputParameter name="z">${willBeNull == null}</camunda:outputParameter>

</camunda:inputOutput>Even complex structures like lists and maps can be used. Both can also be nested.

<camunda:inputOutput>

<camunda:inputParameter name="x">

<camunda:list>

<camunda:value>a</camunda:value>

<camunda:value>${1 + 1}</camunda:value>

<camunda:list>

<camunda:value>1</camunda:value>

<camunda:value>2</camunda:value>

<camunda:value>3</camunda:value>

</camunda:list>

</camunda:list>

</camunda:inputParameter>

<camunda:outputParameter name="y">

<camunda:map>

<camunda:entry key="foo">bar</camunda:entry>

<camunda:entry key="map">

<camunda:map>

<camunda:entry key="hello">world</camunda:entry>

<camunda:entry key="camunda">bpm</camunda:entry>

</camunda:map>

</camunda:entry>

</camunda:map>

</camunda:outputParameter>

</camunda:inputOutput>A script can also be used to provide the variable value. Please see the corresponding section in the scripting chapter for how to specify a script.

A simple example of the benefit of input/output mapping is a complex calculation which should be part of multiple processes definitions. This calculation can be developed as isolated delegation code or a script and be reused in every process, even though the processes use a different variable set. An input mapping is used to map the different process variables to the required input parameters of the complex calculation activity. Accordingly, an output mapping allows to utilize the calculation result in the further process execution.

In more detail, let us assume such a calculation is implemented by a Java Delegate class org.camunda.bpm.example.ComplexCalculation.

This delegate requires a userId and a costSum variable as input

parameters. It then calculates three values, pessimisticForecast, realisticForecast and optimisticForecast,

which are different forecasts of the future costs a customer faces. In a first process, both input variables are available as process variables but with different names (id, sum). From the three results, the process only uses realisticForecast which it depends on by the name forecast in follow-up activities. A corresponding input/output mapping looks as follows:

<serviceTask camunda:class="org.camunda.bpm.example.ComplexCalculation">

<extensionElements>

<camunda:inputOutput>

<camunda:inputParameter name="userId">${id}</camunda:inputParameter>

<camunda:inputParameter name="costSum">${sum}</camunda:inputParameter>

<camunda:outputParameter name="forecast">${realisticForecast}</camunda:outputParameter>

</camunda:inputOutput>

</extensionElements>

</serviceTask>In a second process, let us assume the costSum variable has to be calculated from properties of three different maps. Also, the process

depends on a variable avgForecast as the average value of the three forecasts. In this case, the mapping looks as follows:

<serviceTask camunda:class="org.camunda.bpm.example.ComplexCalculation">

<extensionElements>

<camunda:inputOutput>

<camunda:inputParameter name="userId">${id}</camunda:inputParameter>

<camunda:inputParameter name="costSum">

${mapA[costs] + mapB[costs] + mapC[costs]}

</camunda:inputParameter>

<camunda:outputParameter name="avgForecast">

${(pessimisticForecast + realisticForecast + optimisticForecast) / 3}

</camunda:outputParameter>

</camunda:inputOutput>

</extensionElements>

</serviceTask>Multi-instance IO Mapping

Input mappings can also be used with multi-instance constructs, in which the mapping is applied for every instance that is created. For example, for a multi-instance subprocess with five instances, the mapping is executed five times and the involved variables are created in each of the five subprocess scopes such that they can be accessed independently.

No output mapping for multi-instance constructs:

The engine does not support output mappings for multi-instance constructs. Every instance of the output mapping would overwrite the variables set by the previous instances and the final variable state would become hard to predict.

Process Instance Modification

While the process model contains sequence flows that define in which order activities must be executed, sometimes it is desired to flexibly start an activity again or cancel a running activity. For example, this can be useful when the process model contains an error, such as a wrong sequence flow condition, and running process instances need to be corrected. Use cases for this API may be

- Repairing process instances in which some steps have to be repeated or skipped

- Migrating process instances from one version of a process definition to another

- Testing: Activities can be skipped or repeated for isolated testing of individual process segments

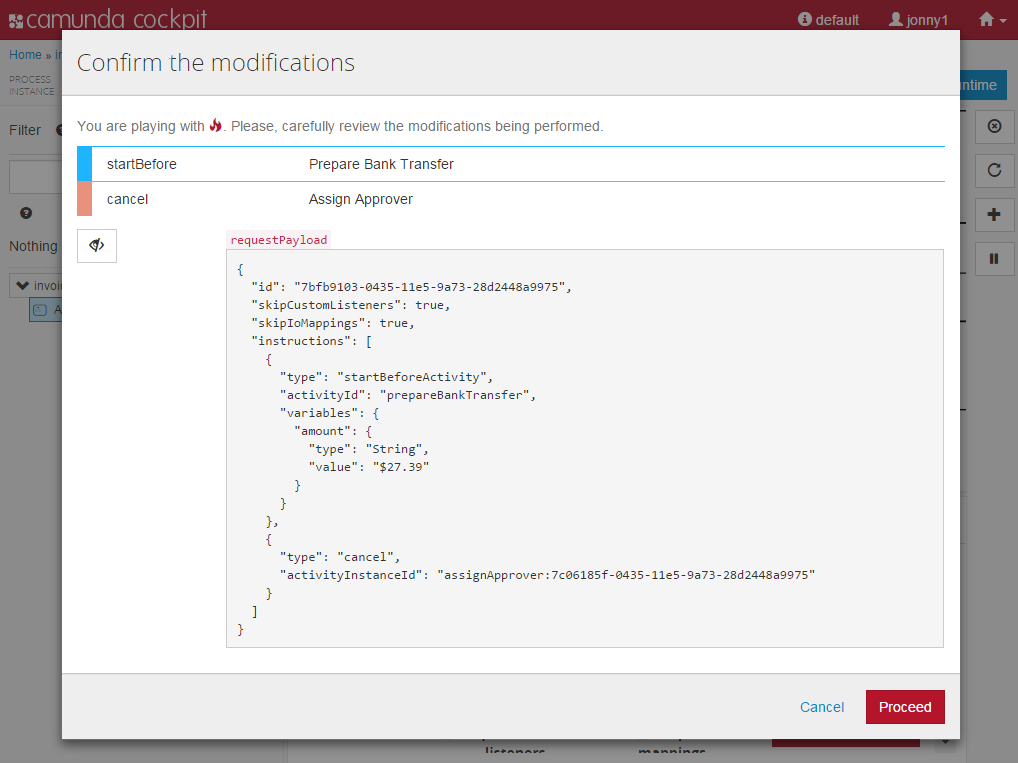

To perform such an operation, the process engine offers the process instance modification API that is entered via RuntimeService.createProcessInstanceModification(...). This API allows to specify multiple modification instructions in one call by using a fluent builder. In particular, it is possible to:

- start execution before an activity

- start execution on a sequence flow leaving an activity

- cancel a running activity instance

- cancel all running instances of a given activity

- set variables with each of the instructions

Process instance modification within the same instance is not recommended! An activity which tries to modify its own process instance can cause undefined behavior, which should be avoided.

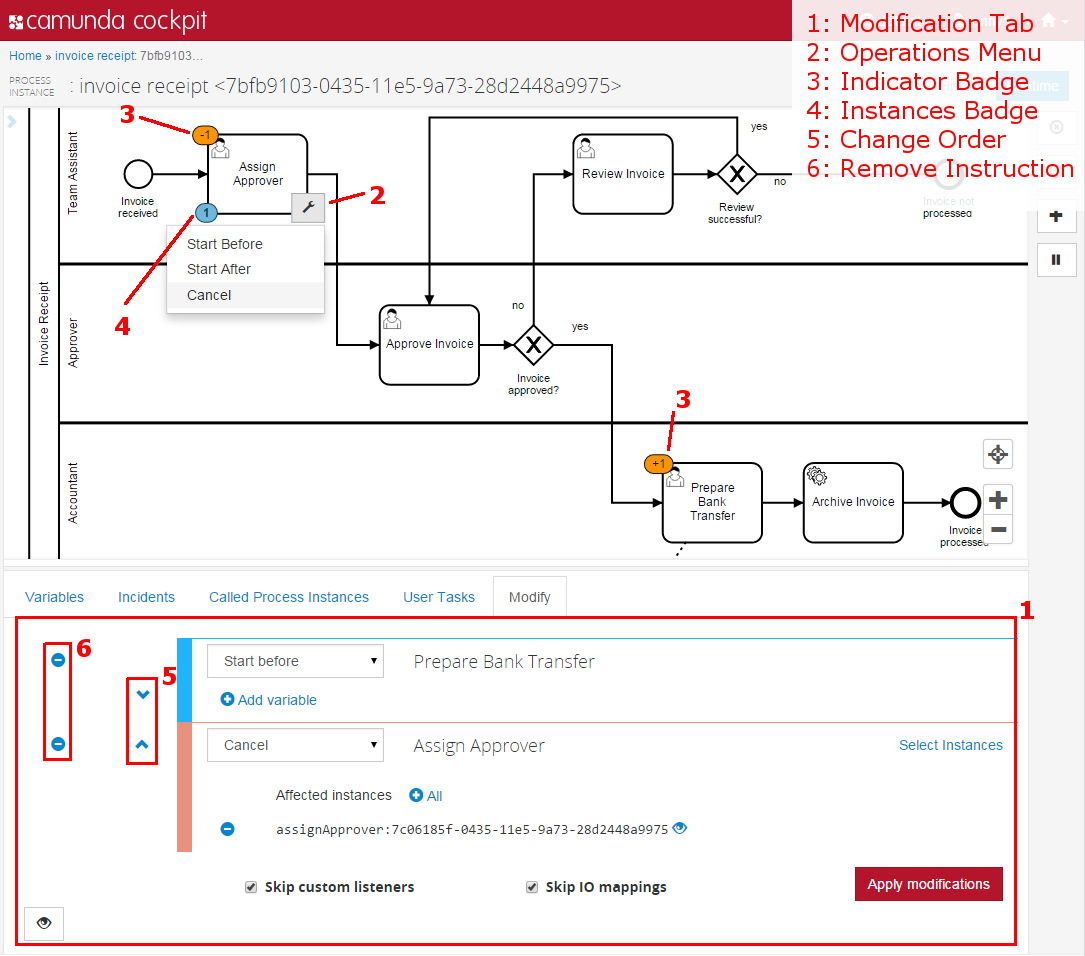

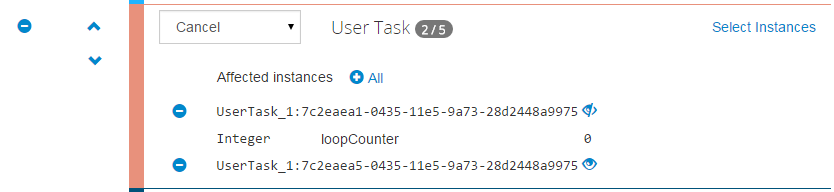

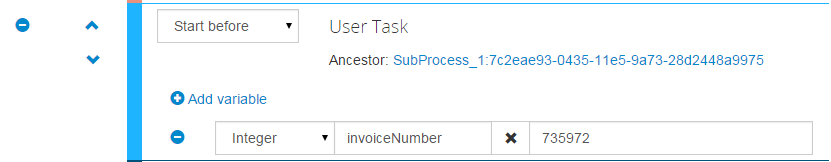

The Camunda enterprise edition provides a user interface to compose process instance modifications visually on the BPMN diagram in Camunda Cockpit.

Process Instance Modification by Example

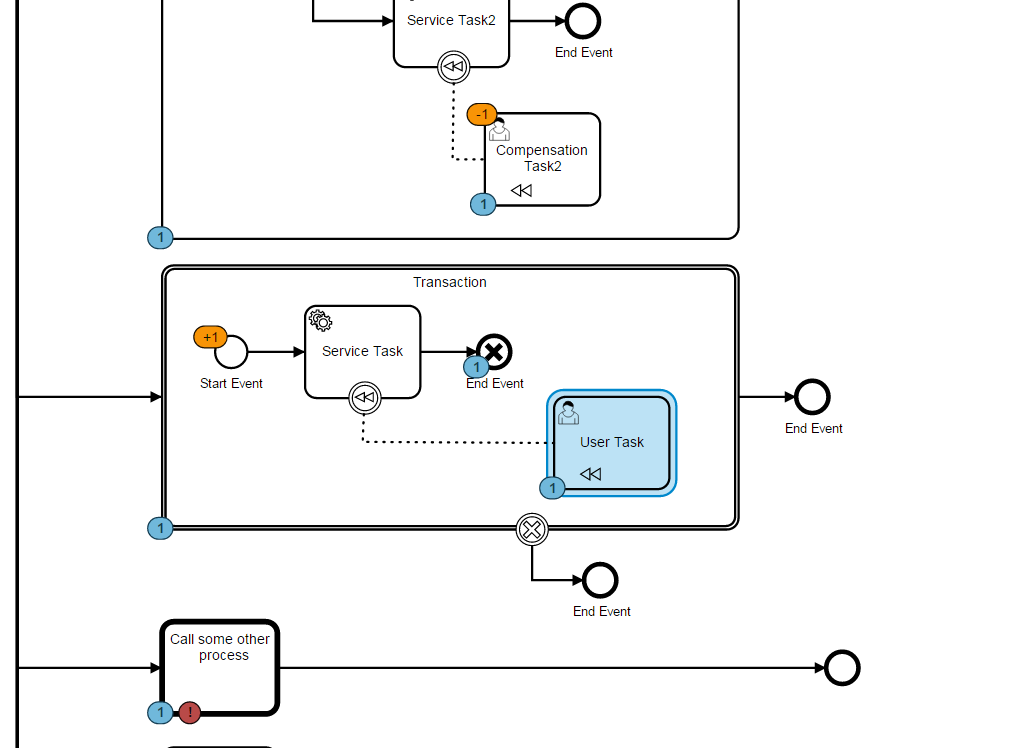

As an example, consider the following process model:

The model shows a simple process for processing a loan application. Let us assume that a loan application has arrived, the loan application has been evaluated, and it was determined to decline the application. That means, the process instance has the following activity instance state:

ProcessInstance

Decline Loan ApplicationNow the worker performing the task Decline Loan Application recognizes an error in the evaluation result and comes to the conclusion that the application should be accepted nevertheless. While such flexibility is not modelled as part of the process, process instance modification allows to correct the running process instance. The following API call does the trick:

ProcessInstance processInstance = runtimeService.createProcessInstanceQuery().singleResult();

runtimeService.createProcessInstanceModification(processInstance.getId())

.startBeforeActivity("acceptLoanApplication")

.cancelAllForActivity("declineLoanApplication")

.execute();This command first starts execution before the activity Accept Loan Application until a wait state - the creation of the user task in this case - is reached. After that, it cancels the running instance of the activity Decline Loan Application. In the worker's task list, the Decline task has been removed and an Accept task has appeared. The resulting activity instance state is:

ProcessInstance